Compare commits

318 Commits

v0.6.0-alp

...

v0.7.0-alp

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

be5f2ef9b9 | ||

|

|

adfcb79387 | ||

|

|

73c4c0ac5f | ||

|

|

6fc601f696 | ||

|

|

07111fb7bb | ||

|

|

a06d2170b0 | ||

|

|

7f53ea3bf3 | ||

|

|

b2accd1c2a | ||

|

|

8ef8a8dea7 | ||

|

|

e929404676 | ||

|

|

c2258bedae | ||

|

|

215fdbb7ed | ||

|

|

ee998f6882 | ||

|

|

826e95afca | ||

|

|

47583d48e7 | ||

|

|

e759cdf061 | ||

|

|

88503c2a09 | ||

|

|

d5be23dffe | ||

|

|

80c01dc085 | ||

|

|

45b2549fa9 | ||

|

|

c7ce454188 | ||

|

|

7059ea42d6 | ||

|

|

8ea1c29c9b | ||

|

|

33bbfdbc9b | ||

|

|

5de54f8853 | ||

|

|

a1ac41218a | ||

|

|

55fc647568 | ||

|

|

e83e898eed | ||

|

|

eb07e4588b | ||

|

|

563f834c96 | ||

|

|

183178681d | ||

|

|

8dba53e494 | ||

|

|

e4782b19a3 | ||

|

|

ec86b1dffa | ||

|

|

6cb8266c7b | ||

|

|

9c50302a39 | ||

|

|

3313c69898 | ||

|

|

530c6ca7ec | ||

|

|

07ed2fb523 | ||

|

|

d9ec380a15 | ||

|

|

b60eb3a899 | ||

|

|

b4df69791b | ||

|

|

c21b8a22b9 | ||

|

|

475a76e656 | ||

|

|

7ba5d5ef86 | ||

|

|

737dc1ddde | ||

|

|

164bf19b36 | ||

|

|

25976771d9 | ||

|

|

f2198c2e9a | ||

|

|

eec19c6d2c | ||

|

|

30e03feb5f | ||

|

|

58cd3bde9f | ||

|

|

662bfb7b88 | ||

|

|

5f3e3a17d3 | ||

|

|

feba2d9975 | ||

|

|

e3e3a1c457 | ||

|

|

90628f3c8d | ||

|

|

f6bcadb79d | ||

|

|

d4ac16773c | ||

|

|

96f044d2bf | ||

|

|

f31868b913 | ||

|

|

73b0ff5b55 | ||

|

|

64cf69045a | ||

|

|

e57dae0f31 | ||

|

|

6386e7d5cf | ||

|

|

4bad103da9 | ||

|

|

30a26adb7c | ||

|

|

8be4adfc0a | ||

|

|

fed4cc3965 | ||

|

|

7d1e074683 | ||

|

|

00516e50a1 | ||

|

|

e83d76fbd9 | ||

|

|

304f152315 | ||

|

|

3a82ebf7fd | ||

|

|

0253d34467 | ||

|

|

9209f9acde | ||

|

|

3dbbb398df | ||

|

|

17e8ad110f | ||

|

|

5e91d31ed3 | ||

|

|

fad9d20820 | ||

|

|

fe9a1c8580 | ||

|

|

cd6d7d5198 | ||

|

|

771478bc68 | ||

|

|

c4a59896f8 | ||

|

|

3eb1608403 | ||

|

|

8fde70d4dc | ||

|

|

5a047833ed | ||

|

|

f6c28e6be1 | ||

|

|

0ebf10d19d | ||

|

|

d3005d3ef3 | ||

|

|

effcef2184 | ||

|

|

89fc0ad7a9 | ||

|

|

410272ee1d | ||

|

|

1c97bf50b6 | ||

|

|

4ecd2c9d0b | ||

|

|

e592243a09 | ||

|

|

2f4a92e352 | ||

|

|

ceafc29040 | ||

|

|

b20efabfd2 | ||

|

|

85b6e7293c | ||

|

|

6aced927ad | ||

|

|

75997e6c08 | ||

|

|

9040d00110 | ||

|

|

8ebc5c6b07 | ||

|

|

d4807790ff | ||

|

|

0de5e7a285 | ||

|

|

c40000aeda | ||

|

|

31198bc105 | ||

|

|

92599acfca | ||

|

|

f6e70779fe | ||

|

|

3017bde686 | ||

|

|

9d84ec4bb3 | ||

|

|

586141adb2 | ||

|

|

3f763f99e2 | ||

|

|

15c7f36ea3 | ||

|

|

04d1a083fa | ||

|

|

327ee1dae8 | ||

|

|

22885c3e64 | ||

|

|

94ededb54c | ||

|

|

af6a07697a | ||

|

|

5f1d8c95eb | ||

|

|

7d9e032407 | ||

|

|

bc918a5ad5 | ||

|

|

ee54ce4727 | ||

|

|

e85bf2f2d5 | ||

|

|

a7460ffbd1 | ||

|

|

7fe1fd2f95 | ||

|

|

d30670e92e | ||

|

|

9b202c6e1e | ||

|

|

87946eafd5 | ||

|

|

7575d3c726 | ||

|

|

8b9713a934 | ||

|

|

ec713c18c4 | ||

|

|

c24b0a1a3f | ||

|

|

34e0cb0092 | ||

|

|

7b7c7cba21 | ||

|

|

c45343dd30 | ||

|

|

b7f6603c1f | ||

|

|

2d3b052dea | ||

|

|

dcb6234771 | ||

|

|

e44d423e83 | ||

|

|

5435bb734c | ||

|

|

13f59adf61 | ||

|

|

0fce3368d3 | ||

|

|

1ee5c81267 | ||

|

|

3bb9d5eb50 | ||

|

|

efb23f7cf9 | ||

|

|

013f4674de | ||

|

|

6966b25d9c | ||

|

|

d513f56c8c | ||

|

|

7aa05618a3 | ||

|

|

cdfbbe5e60 | ||

|

|

fe7d1cb81c | ||

|

|

c2a9395a4b | ||

|

|

586279bcfc | ||

|

|

8bd10e7c4c | ||

|

|

928e6165bc | ||

|

|

77c9e801aa | ||

|

|

c78132417f | ||

|

|

849928887e | ||

|

|

ba1163d49f | ||

|

|

6f9c89af39 | ||

|

|

246b8b1242 | ||

|

|

f0db68cb75 | ||

|

|

f0d1fdfb46 | ||

|

|

3b8b2e030a | ||

|

|

b4fee677a5 | ||

|

|

fe706583f9 | ||

|

|

d0e0c17ece | ||

|

|

5aaa38bcaf | ||

|

|

6ff9b27f8e | ||

|

|

3f4e035506 | ||

|

|

57d9fbb927 | ||

|

|

ee44e51b30 | ||

|

|

5011f24123 | ||

|

|

d1eda334f3 | ||

|

|

2ae5ce9f2c | ||

|

|

4f5ac78b7e | ||

|

|

074c9af020 | ||

|

|

2da2d4e365 | ||

|

|

8eb76ab2a5 | ||

|

|

a710d95243 | ||

|

|

a06535d7ed | ||

|

|

f511ac9be7 | ||

|

|

e28ad2177e | ||

|

|

cb16fe84cd | ||

|

|

ec3569aa39 | ||

|

|

246edecf53 | ||

|

|

34834c5af9 | ||

|

|

b845245614 | ||

|

|

5711fb9969 | ||

|

|

d1eaecde9a | ||

|

|

00c8505d1e | ||

|

|

33f01efe69 | ||

|

|

377d312c81 | ||

|

|

badf5d5412 | ||

|

|

0339f90b40 | ||

|

|

5455e8e6a9 | ||

|

|

6843b71a0d | ||

|

|

634408b5e8 | ||

|

|

d053f78b74 | ||

|

|

93b6fceb2f | ||

|

|

ac7860c35d | ||

|

|

b0eab8729f | ||

|

|

cb81f80b31 | ||

|

|

ea97529185 | ||

|

|

f1075191fe | ||

|

|

74c479fbc9 | ||

|

|

7e788d3a17 | ||

|

|

69b3c75f0d | ||

|

|

b2c2fa40a2 | ||

|

|

50458d9524 | ||

|

|

9679e3e356 | ||

|

|

6db9f92b8a | ||

|

|

4a44498d45 | ||

|

|

216510c573 | ||

|

|

fd338c3097 | ||

|

|

b66ebf5dec | ||

|

|

5da99de579 | ||

|

|

3aa2907bd6 | ||

|

|

05d1618659 | ||

|

|

86113811f2 | ||

|

|

53ecaa03f1 | ||

|

|

205c1aa505 | ||

|

|

9b54c1542b | ||

|

|

93d5d1b2ad | ||

|

|

4c0f3ed6f3 | ||

|

|

2580155bf2 | ||

|

|

6ab0dd4df9 | ||

|

|

4b8c36b6b9 | ||

|

|

359a8397c0 | ||

|

|

c9fd5d74b5 | ||

|

|

391744af97 | ||

|

|

587ab29e09 | ||

|

|

80f07dadc5 | ||

|

|

60609a44ba | ||

|

|

30c8fa46b4 | ||

|

|

7aab7d2f82 | ||

|

|

a8e1c44663 | ||

|

|

a2b92c35e1 | ||

|

|

9f2086c772 | ||

|

|

3eb005d492 | ||

|

|

68955bfcf4 | ||

|

|

9ac7070e08 | ||

|

|

e44e81bd17 | ||

|

|

f5eedd2d19 | ||

|

|

46059a37eb | ||

|

|

adc655a3a2 | ||

|

|

3058f80489 | ||

|

|

df98cae4b6 | ||

|

|

d327e0aabd | ||

|

|

17d3a6763c | ||

|

|

02c5b0343b | ||

|

|

2888e45fea | ||

|

|

f1311075d9 | ||

|

|

6c380e04a3 | ||

|

|

cef1c208a5 | ||

|

|

ef8eac92e3 | ||

|

|

9c9c63572b | ||

|

|

6c0c6de1d0 | ||

|

|

b57aecc24c | ||

|

|

290dde60a0 | ||

|

|

38623785f9 | ||

|

|

256ecc7208 | ||

|

|

76b06b47ba | ||

|

|

cf15cf587f | ||

|

|

134c7add57 | ||

|

|

ac0791826a | ||

|

|

d2622b7798 | ||

|

|

f82cbf3a27 | ||

|

|

aa7e3df8d6 | ||

|

|

ad00d7bd9c | ||

|

|

8d1f82c34d | ||

|

|

0cb2036e3a | ||

|

|

2b1e90b0a5 | ||

|

|

f2ccc133a2 | ||

|

|

5e824b39dd | ||

|

|

41efcae64b | ||

|

|

cf5671d058 | ||

|

|

2570bba6b1 | ||

|

|

71cb7d5c97 | ||

|

|

0df6541d5e | ||

|

|

52145caf7e | ||

|

|

86a50ae9e1 | ||

|

|

c64cfb74f3 | ||

|

|

26153d9919 | ||

|

|

5af922722f | ||

|

|

b70d730b32 | ||

|

|

bf4b856e0c | ||

|

|

0cf0ae6755 | ||

|

|

29061cff39 | ||

|

|

b7eec4c89f | ||

|

|

a3854c229e | ||

|

|

dcde256433 | ||

|

|

931bdbd5cd | ||

|

|

b7bd59c344 | ||

|

|

2dbf9a6017 | ||

|

|

fe93bba457 | ||

|

|

6e35f54738 | ||

|

|

089294a85e | ||

|

|

25c0b44641 | ||

|

|

58c1589688 | ||

|

|

bb53f69016 | ||

|

|

75659ca042 | ||

|

|

fc00594ea4 | ||

|

|

8d26be8b89 | ||

|

|

af4e95ae0f | ||

|

|

ffb4a7aa78 | ||

|

|

dcaeacc507 | ||

|

|

4f377e6710 | ||

|

|

122db85727 | ||

|

|

a598e4aa74 | ||

|

|

733b31ebbd | ||

|

|

dac9775de0 | ||

|

|

46c19a5783 | ||

|

|

aaeb5ba52f | ||

|

|

9f5a3d6064 | ||

|

|

4cdf873f98 |

@@ -1,2 +1,5 @@

|

|||||||

ignore:

|

ignore:

|

||||||

- "src/bin"

|

- "src/bin"

|

||||||

|

coverage:

|

||||||

|

status:

|

||||||

|

patch: off

|

||||||

|

|||||||

36

.travis.yml

@@ -1,36 +0,0 @@

|

|||||||

language: rust

|

|

||||||

required: sudo

|

|

||||||

services:

|

|

||||||

- docker

|

|

||||||

matrix:

|

|

||||||

allow_failures:

|

|

||||||

- rust: nightly

|

|

||||||

include:

|

|

||||||

- rust: stable

|

|

||||||

- rust: nightly

|

|

||||||

env:

|

|

||||||

- FEATURES='unstable'

|

|

||||||

before_script: |

|

|

||||||

export PATH="$PATH:$HOME/.cargo/bin"

|

|

||||||

rustup component add rustfmt-preview

|

|

||||||

script:

|

|

||||||

- cargo fmt -- --write-mode=diff

|

|

||||||

- cargo build --verbose --features "$FEATURES"

|

|

||||||

- cargo test --verbose --features "$FEATURES"

|

|

||||||

after_success: |

|

|

||||||

docker run -it --rm --security-opt seccomp=unconfined --volume "$PWD:/volume" elmtai/docker-rust-kcov

|

|

||||||

bash <(curl -s https://codecov.io/bash) -s target/cov

|

|

||||||

before_deploy:

|

|

||||||

- cargo package

|

|

||||||

deploy:

|

|

||||||

provider: releases

|

|

||||||

api-key:

|

|

||||||

secure: j3cPAbOuGjXuSl+j+JL/4GWxD6dA0/f5NQ0Od4LBVewPmnKiqimGOJ1xj3eKth+ZzwuCpcHwBIIR54NEDSJgHaYDXiukc05qCeToIPqOc0wGJ+GcUrWAy8M7Wo981I/0SVYDAnLv4+ivvJxYE7b2Jr3pHsQAzH7ClY8g2xu9HlNkScEsc4cizA9Sf3zIqtIoi480vxtQ5ghGOUCkwZuG3+Dg+IGnnjvE4qQOYey1del+KIDkmbHjry7iFWPF6fWK2187JNt6XiO2/2tZt6BkMEmdRnkw1r/wL9tj0AbqLgyBjzlI4QQfkBwsuX3ZFeNGArn71s7WmAUGyVOl0DJXfwN/BEUxMTd+lkMjuMNUxaU/hxVZ7zAWH55KJK+qf6B95DLVWr7ypjfJLLBcds+JfkBNoReWLM1XoDUKAU+wBf1b+PKiywNfNascjZTcz6QGe94sa7l/T4PxtHDSREmflFgu1Hysg61WuODDwTTHGrsg9ZuvlINnqQhXsJo9r9+TMIGwwWHcvLQDNz2TPALCfcLtd+RsevdOeXItYa0KD3D4gKGv36bwAVDpCIoZnSeiaT/PUyjilFtJjBpKz9BbOKgOtQhHGrHucn0WOF+bu/t3SFaJKQf/W+hLwO3NV8yiL5LQyHVm/TPY62nBfne2KEqi/LOFxgKG35aACouP0ig=

|

|

||||||

file: target/package/solana-$TRAVIS_TAG.crate

|

|

||||||

skip_cleanup: true

|

|

||||||

on:

|

|

||||||

tags: true

|

|

||||||

condition: "$TRAVIS_RUST_VERSION = stable"

|

|

||||||

|

|

||||||

after_deploy:

|

|

||||||

- cargo publish --token "$CRATES_IO_TOKEN"

|

|

||||||

41

Cargo.toml

@@ -1,9 +1,10 @@

|

|||||||

[package]

|

[package]

|

||||||

name = "solana"

|

name = "solana"

|

||||||

description = "The World's Fastest Blockchain"

|

description = "Blockchain, Rebuilt for Scale"

|

||||||

version = "0.6.0-alpha"

|

version = "0.7.0-alpha"

|

||||||

documentation = "https://docs.rs/solana"

|

documentation = "https://docs.rs/solana"

|

||||||

homepage = "http://solana.com/"

|

homepage = "http://solana.com/"

|

||||||

|

readme = "README.md"

|

||||||

repository = "https://github.com/solana-labs/solana"

|

repository = "https://github.com/solana-labs/solana"

|

||||||

authors = [

|

authors = [

|

||||||

"Anatoly Yakovenko <anatoly@solana.com>",

|

"Anatoly Yakovenko <anatoly@solana.com>",

|

||||||

@@ -17,12 +18,12 @@ name = "solana-client-demo"

|

|||||||

path = "src/bin/client-demo.rs"

|

path = "src/bin/client-demo.rs"

|

||||||

|

|

||||||

[[bin]]

|

[[bin]]

|

||||||

name = "solana-multinode-demo"

|

name = "solana-fullnode"

|

||||||

path = "src/bin/multinode-demo.rs"

|

path = "src/bin/fullnode.rs"

|

||||||

|

|

||||||

[[bin]]

|

[[bin]]

|

||||||

name = "solana-testnode"

|

name = "solana-fullnode-config"

|

||||||

path = "src/bin/testnode.rs"

|

path = "src/bin/fullnode-config.rs"

|

||||||

|

|

||||||

[[bin]]

|

[[bin]]

|

||||||

name = "solana-genesis"

|

name = "solana-genesis"

|

||||||

@@ -40,6 +41,10 @@ path = "src/bin/mint.rs"

|

|||||||

name = "solana-mint-demo"

|

name = "solana-mint-demo"

|

||||||

path = "src/bin/mint-demo.rs"

|

path = "src/bin/mint-demo.rs"

|

||||||

|

|

||||||

|

[[bin]]

|

||||||

|

name = "solana-drone"

|

||||||

|

path = "src/bin/drone.rs"

|

||||||

|

|

||||||

[badges]

|

[badges]

|

||||||

codecov = { repository = "solana-labs/solana", branch = "master", service = "github" }

|

codecov = { repository = "solana-labs/solana", branch = "master", service = "github" }

|

||||||

|

|

||||||

@@ -52,7 +57,7 @@ erasure = []

|

|||||||

[dependencies]

|

[dependencies]

|

||||||

rayon = "1.0.0"

|

rayon = "1.0.0"

|

||||||

sha2 = "0.7.0"

|

sha2 = "0.7.0"

|

||||||

generic-array = { version = "0.9.0", default-features = false, features = ["serde"] }

|

generic-array = { version = "0.11.1", default-features = false, features = ["serde"] }

|

||||||

serde = "1.0.27"

|

serde = "1.0.27"

|

||||||

serde_derive = "1.0.27"

|

serde_derive = "1.0.27"

|

||||||

serde_json = "1.0.10"

|

serde_json = "1.0.10"

|

||||||

@@ -60,13 +65,15 @@ ring = "0.12.1"

|

|||||||

untrusted = "0.5.1"

|

untrusted = "0.5.1"

|

||||||

bincode = "1.0.0"

|

bincode = "1.0.0"

|

||||||

chrono = { version = "0.4.0", features = ["serde"] }

|

chrono = { version = "0.4.0", features = ["serde"] }

|

||||||

log = "^0.4.1"

|

log = "0.4.2"

|

||||||

env_logger = "^0.4.1"

|

env_logger = "0.5.10"

|

||||||

matches = "^0.1.6"

|

matches = "0.1.6"

|

||||||

byteorder = "^1.2.1"

|

byteorder = "1.2.1"

|

||||||

libc = "^0.2.1"

|

libc = "0.2.1"

|

||||||

getopts = "^0.2"

|

getopts = "0.2"

|

||||||

isatty = "0.1"

|

atty = "0.2"

|

||||||

futures = "0.1"

|

rand = "0.5.1"

|

||||||

rand = "0.4.2"

|

pnet_datalink = "0.21.0"

|

||||||

pnet = "^0.21.0"

|

tokio = "0.1"

|

||||||

|

tokio-codec = "0.1"

|

||||||

|

tokio-io = "0.1"

|

||||||

|

|||||||

2

LICENSE

@@ -1,4 +1,4 @@

|

|||||||

Copyright 2018 Anatoly Yakovenko, Greg Fitzgerald and Stephen Akridge

|

Copyright 2018 Solana Labs, Inc.

|

||||||

|

|

||||||

Licensed under the Apache License, Version 2.0 (the "License");

|

Licensed under the Apache License, Version 2.0 (the "License");

|

||||||

you may not use this file except in compliance with the License.

|

you may not use this file except in compliance with the License.

|

||||||

|

|||||||

190

README.md

@@ -1,27 +1,42 @@

|

|||||||

[](https://crates.io/crates/solana)

|

[](https://crates.io/crates/solana)

|

||||||

[](https://docs.rs/solana)

|

[](https://docs.rs/solana)

|

||||||

[](https://buildkite.com/solana-labs/solana)

|

[](https://solana-ci-gate.herokuapp.com/buildkite_public_log?https://buildkite.com/solana-labs/solana/builds/latest/master)

|

||||||

[](https://codecov.io/gh/solana-labs/solana)

|

[](https://codecov.io/gh/solana-labs/solana)

|

||||||

|

|

||||||

|

Blockchain, Rebuilt for Scale

|

||||||

|

===

|

||||||

|

|

||||||

|

Solana™ is a new blockchain architecture built from the ground up for scale. The architecture supports

|

||||||

|

up to 710 thousand transactions per second on a gigabit network.

|

||||||

|

|

||||||

Disclaimer

|

Disclaimer

|

||||||

===

|

===

|

||||||

|

|

||||||

All claims, content, designs, algorithms, estimates, roadmaps, specifications, and performance measurements described in this project are done with the author's best effort. It is up to the reader to check and validate their accuracy and truthfulness. Furthermore nothing in this project constitutes a solicitation for investment.

|

All claims, content, designs, algorithms, estimates, roadmaps, specifications, and performance measurements described in this project are done with the author's best effort. It is up to the reader to check and validate their accuracy and truthfulness. Furthermore nothing in this project constitutes a solicitation for investment.

|

||||||

|

|

||||||

Solana: High Performance Blockchain

|

|

||||||

===

|

|

||||||

|

|

||||||

Solana™ is a new architecture for a high performance blockchain. It aims to support

|

|

||||||

over 700 thousand transactions per second on a gigabit network.

|

|

||||||

|

|

||||||

Introduction

|

Introduction

|

||||||

===

|

===

|

||||||

|

|

||||||

It's possible for a centralized database to process 710,000 transactions per second on a standard gigabit network if the transactions are, on average, no more than 178 bytes. A centralized database can also replicate itself and maintain high availability without significantly compromising that transaction rate using the distributed system technique known as Optimistic Concurrency Control [H.T.Kung, J.T.Robinson (1981)]. At Solana, we're demonstrating that these same theoretical limits apply just as well to blockchain on an adversarial network. The key ingredient? Finding a way to share time when nodes can't trust one another. Once nodes can trust time, suddenly ~40 years of distributed systems research becomes applicable to blockchain! Furthermore, and much to our surprise, it can be implemented using a mechanism that has existed in Bitcoin since day one. The Bitcoin feature is called nLocktime and it can be used to postdate transactions using block height instead of a timestamp. As a Bitcoin client, you'd use block height instead of a timestamp if you don't trust the network. Block height turns out to be an instance of what's being called a Verifiable Delay Function in cryptography circles. It's a cryptographically secure way to say time has passed. In Solana, we use a far more granular verifiable delay function, a SHA 256 hash chain, to checkpoint the ledger and coordinate consensus. With it, we implement Optimistic Concurrency Control and are now well en route to that theoretical limit of 710,000 transactions per second.

|

It's possible for a centralized database to process 710,000 transactions per second on a standard gigabit network if the transactions are, on average, no more than 176 bytes. A centralized database can also replicate itself and maintain high availability without significantly compromising that transaction rate using the distributed system technique known as Optimistic Concurrency Control [H.T.Kung, J.T.Robinson (1981)]. At Solana, we're demonstrating that these same theoretical limits apply just as well to blockchain on an adversarial network. The key ingredient? Finding a way to share time when nodes can't trust one another. Once nodes can trust time, suddenly ~40 years of distributed systems research becomes applicable to blockchain! Furthermore, and much to our surprise, it can be implemented using a mechanism that has existed in Bitcoin since day one. The Bitcoin feature is called nLocktime and it can be used to postdate transactions using block height instead of a timestamp. As a Bitcoin client, you'd use block height instead of a timestamp if you don't trust the network. Block height turns out to be an instance of what's being called a Verifiable Delay Function in cryptography circles. It's a cryptographically secure way to say time has passed. In Solana, we use a far more granular verifiable delay function, a SHA 256 hash chain, to checkpoint the ledger and coordinate consensus. With it, we implement Optimistic Concurrency Control and are now well en route to that theoretical limit of 710,000 transactions per second.
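The 710,000 figure in the paragraph above can be checked with plain integer division. The sketch below assumes a gigabit link carries exactly 10^9 bits per second and ignores packet headers and any other protocol overhead; `max_tps` is a hypothetical helper name, not something from the Solana codebase.

```bash
# max_tps LINK_BITS_PER_SEC TX_BYTES
# Upper bound on transactions per second for a link of the given speed,
# assuming every byte on the wire is transaction payload (no overhead).
max_tps() {
  echo $(( $1 / 8 / $2 ))
}

max_tps 1000000000 176   # prints 710227
max_tps 1000000000 178   # prints 702247
```

Under these assumptions, 176-byte transactions are the largest average size that still clears 710,000 per second, while 178-byte transactions top out near 702,000.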

|

||||||

|

|

||||||

Running the demo

|

|

||||||

|

Testnet Demos

|

||||||

===

|

===

|

||||||

|

|

||||||

|

The Solana repo contains all the scripts you might need to spin up your own

|

||||||

|

local testnet. Depending on what you're looking to achieve, you may want to

|

||||||

|

run a different variation, as the full-fledged, performance-enhanced

|

||||||

|

multinode testnet is considerably more complex to set up than a Rust-only,

|

||||||

|

singlenode testnode. If you are looking to develop high-level features, such

|

||||||

|

as experimenting with smart contracts, save yourself some setup headaches and

|

||||||

|

stick to the Rust-only singlenode demo. If you're doing performance optimization

|

||||||

|

of the transaction pipeline, consider the enhanced singlenode demo. If you're

|

||||||

|

doing consensus work, you'll need at least a Rust-only multinode demo. If you want

|

||||||

|

to reproduce our TPS metrics, run the enhanced multinode demo.

|

||||||

|

|

||||||

|

For all four variations, you'd need the latest Rust toolchain and the Solana

|

||||||

|

source code:

|

||||||

|

|

||||||

First, install Rust's package manager Cargo.

|

First, install Rust's package manager Cargo.

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

@@ -36,58 +51,107 @@ $ git clone https://github.com/solana-labs/solana.git

|

|||||||

$ cd solana

|

$ cd solana

|

||||||

```

|

```

|

||||||

|

|

||||||

The testnode server is initialized with a ledger from stdin and

|

The demo code is sometimes broken between releases as we add new low-level

|

||||||

generates new ledger entries on stdout. To create the input ledger, we'll need

|

features, so if this is your first time running the demo, you'll improve

|

||||||

to create *the mint* and use it to generate a *genesis ledger*. It's done in

|

your odds of success if you check out the

|

||||||

two steps because the mint-demo.json file contains private keys that will be

|

[latest release](https://github.com/solana-labs/solana/releases)

|

||||||

used later in this demo.

|

before proceeding:

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

$ echo 1000000000 | cargo run --release --bin solana-mint-demo > mint-demo.json

|

$ git checkout v0.6.1

|

||||||

$ cat mint-demo.json | cargo run --release --bin solana-genesis-demo > genesis.log

|

|

||||||

```

|

```

|

||||||

|

|

||||||

Now you can start the server:

|

Configuration Setup

|

||||||

|

---

|

||||||

|

|

||||||

|

The network is initialized with a genesis ledger and leader/validator configuration files.

|

||||||

|

These files can be generated by running the following script.

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

$ cat genesis.log | cargo run --release --bin solana-testnode > transactions0.log

|

$ ./multinode-demo/setup.sh

|

||||||

```

|

```

|

||||||

|

|

||||||

Wait a few seconds for the server to initialize. It will print "Ready." when it's safe

|

Singlenode Testnet

|

||||||

to start sending it transactions.

|

---

|

||||||

|

|

||||||

Then, in a separate shell, let's execute some transactions. Note we pass in

|

Before you start a fullnode, make sure you know the IP address of the machine you

|

||||||

|

want to be the leader for the demo, and make sure that udp ports 8000-10000 are

|

||||||

|

open on all the machines you want to test with.

|

||||||

|

|

||||||

|

Now start the server:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

$ ./multinode-demo/leader.sh

|

||||||

|

```

|

||||||

|

|

||||||

|

To run a performance-enhanced fullnode on Linux, download `libcuda_verify_ed25519.a`. Enable

|

||||||

|

it by adding `--features=cuda` to the line that runs `solana-fullnode` in

|

||||||

|

`leader.sh`. [CUDA 9.2](https://developer.nvidia.com/cuda-downloads) must be installed on your system.

|

||||||

|

|

||||||

|

```bash

|

||||||

|

$ ./fetch-perf-libs.sh

|

||||||

|

$ cargo run --release --features=cuda --bin solana-fullnode -- -l leader.json < genesis.log

|

||||||

|

```

|

||||||

|

|

||||||

|

Wait a few seconds for the server to initialize. It will print "Ready." when it's ready to

|

||||||

|

receive transactions.

|

||||||

|

|

||||||

|

Multinode Testnet

|

||||||

|

---

|

||||||

|

|

||||||

|

To run a multinode testnet, after starting a leader node, spin up some validator nodes:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

$ ./multinode-demo/validator.sh ubuntu@10.0.1.51:~/solana #The leader machine

|

||||||

|

```

|

||||||

|

|

||||||

|

As with the leader node, you can run a performance-enhanced validator fullnode by adding

|

||||||

|

`--features=cuda` to the line that runs `solana-fullnode` in `validator.sh`.

|

||||||

|

|

||||||

|

```bash

|

||||||

|

$ cargo run --release --features=cuda --bin solana-fullnode -- -l validator.json -v leader.json < genesis.log

|

||||||

|

```

|

||||||

|

|

||||||

|

|

||||||

|

Testnet Client Demo

|

||||||

|

---

|

||||||

|

|

||||||

|

Now that your singlenode or multinode testnet is up and running, in a separate shell, let's send it some transactions! Note we pass in

|

||||||

the JSON configuration file here, not the genesis ledger.

|

the JSON configuration file here, not the genesis ledger.

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

$ cat mint-demo.json | cargo run --release --bin solana-client-demo

|

$ ./multinode-demo/client.sh ubuntu@10.0.1.51:~/solana 2 #The leader machine and the total number of nodes in the network

|

||||||

```

|

```

|

||||||

|

|

||||||

Now kill the server with Ctrl-C, and take a look at the ledger. You should

|

What just happened? The client demo spins up several threads to send 500,000 transactions

|

||||||

see something similar to:

|

to the testnet as quickly as it can. The client then pings the testnet periodically to see

|

||||||

|

how many transactions it processed in that time. Take note that the demo intentionally

|

||||||

```json

|

floods the network with UDP packets, such that the network will almost certainly drop a

|

||||||

{"num_hashes":27,"id":[0, "..."],"event":"Tick"}

|

bunch of them. This ensures the testnet has an opportunity to reach 710k TPS. The client

|

||||||

{"num_hashes":3,"id":[67, "..."],"event":{"Transaction":{"tokens":42}}}

|

demo completes after it has convinced itself the testnet won't process any additional

|

||||||

{"num_hashes":27,"id":[0, "..."],"event":"Tick"}

|

transactions. You should see several TPS measurements printed to the screen. In the

|

||||||

```

|

multinode variation, you'll see TPS measurements for each validator node as well.

|

||||||

|

|

||||||

Now restart the server from where we left off. Pass it both the genesis ledger, and

|

|

||||||

the transaction ledger.

|

|

||||||

|

|

||||||

|

Linux Snap

|

||||||

|

---

|

||||||

|

A Linux [Snap](https://snapcraft.io/) is available, which can be used to

|

||||||

|

easily get Solana running on supported Linux systems without building anything

|

||||||

|

from source. The `edge` Snap channel is updated daily with the latest

|

||||||

|

development from the `master` branch. To install:

|

||||||

```bash

|

```bash

|

||||||

$ cat genesis.log transactions0.log | cargo run --release --bin solana-testnode > transactions1.log

|

$ sudo snap install solana --edge --devmode

|

||||||

```

|

```

|

||||||

|

(`--devmode` flag is required only for `solana.fullnode-cuda`)

|

||||||

|

|

||||||

Lastly, run the client demo again, and verify that all funds were spent in the

|

Once installed, the usual Solana programs will be available as `solana.*` instead

|

||||||

previous round, and so no additional transactions are added.

|

of `solana-*`. For example, `solana.fullnode` instead of `solana-fullnode`.
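The renaming rule can be sketched as a one-line shell helper. `snap_name` is a hypothetical function written only for illustration; it rewrites the `solana-` prefix and does not check which programs the Snap actually ships.

```bash
# snap_name PROGRAM
# Map a source-build program name (solana-foo) to its Snap name (solana.foo).
# Only the leading "solana-" is rewritten; later hyphens are left alone.
snap_name() {
  echo "solana.${1#solana-}"
}

snap_name solana-fullnode   # prints solana.fullnode
```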

|

||||||

|

|

||||||

|

Update to the latest version at any time with

|

||||||

```bash

|

```bash

|

||||||

$ cat mint-demo.json | cargo run --release --bin solana-client-demo

|

$ snap info solana

|

||||||

|

$ sudo snap refresh solana --devmode

|

||||||

```

|

```

|

||||||

|

|

||||||

Stop the server again, and verify there are only Tick entries, and no Transaction entries.

|

|

||||||

|

|

||||||

Developing

|

Developing

|

||||||

===

|

===

|

||||||

|

|

||||||

@@ -102,7 +166,7 @@ $ source $HOME/.cargo/env

|

|||||||

$ rustup component add rustfmt-preview

|

$ rustup component add rustfmt-preview

|

||||||

```

|

```

|

||||||

|

|

||||||

If your rustc version is lower than 1.25.0, please update it:

|

If your rustc version is lower than 1.26.1, please update it:

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

$ rustup update

|

$ rustup update

|

||||||

@@ -121,21 +185,37 @@ Testing

|

|||||||

Run the test suite:

|

Run the test suite:

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

cargo test

|

$ cargo test

|

||||||

|

```

|

||||||

|

|

||||||

|

To emulate all the tests that will run on a Pull Request, run:

|

||||||

|

```bash

|

||||||

|

$ ./ci/run-local.sh

|

||||||

```

|

```

|

||||||

|

|

||||||

Debugging

|

Debugging

|

||||||

---

|

---

|

||||||

|

|

||||||

There are some useful debug messages in the code, you can enable them on a per-module and per-level

|

There are some useful debug messages in the code, you can enable them on a per-module and per-level

|

||||||

basis with the normal RUST\_LOG environment variable. Run the testnode with this syntax:

|

basis with the normal RUST\_LOG environment variable. Run the fullnode with this syntax:

|

||||||

```bash

|

```bash

|

||||||

$ RUST_LOG=solana::streamer=debug,solana::accountant_skel=info cat genesis.log | ./target/release/solana-testnode > transactions0.log

|

$ cat genesis.log | RUST_LOG=solana::streamer=debug,solana::server=info ./target/release/solana-fullnode > transactions0.log

|

||||||

```

|

```

|

||||||

to see the debug and info sections for streamer and accountant\_skel respectively. Generally

|

to see the debug and info sections for streamer and server respectively. Generally

|

||||||

we are using debug for infrequent debug messages, trace for potentially frequent messages and

|

we are using debug for infrequent debug messages, trace for potentially frequent messages and

|

||||||

info for performance-related logging.

|

info for performance-related logging.
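One detail worth keeping in mind when setting `RUST_LOG` on a pipeline: in a POSIX shell, a `VAR=value` prefix applies only to the command it directly precedes, not to every stage of the pipe. The sketch below uses a hypothetical `DEMO_LOG` variable and an `sh -c` reader as stand-ins for `RUST_LOG` and the fullnode binary.

```bash
# DEMO_LOG is a hypothetical stand-in for RUST_LOG; the `sh -c` reader
# stands in for the program at the end of the pipeline.
unset DEMO_LOG

# Assignment placed before the first command: only `true` sees it.
before_first=$(DEMO_LOG=debug true | sh -c 'echo "${DEMO_LOG:-unset}"')

# Assignment placed before the reader: the reader sees it.
before_reader=$(true | DEMO_LOG=debug sh -c 'echo "${DEMO_LOG:-unset}"')

echo "prefix on first command: $before_first"    # unset
echo "prefix on reader:        $before_reader"   # debug
```

Placing the assignment immediately before the binary whose logging you want, or exporting the variable beforehand, makes sure the setting reaches that process.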

|

||||||

|

|

||||||

|

Attaching to a running process with gdb

|

||||||

|

|

||||||

|

```

|

||||||

|

$ sudo gdb

|

||||||

|

attach <PID>

|

||||||

|

set logging on

|

||||||

|

thread apply all bt

|

||||||

|

```

|

||||||

|

|

||||||

|

This will dump all the threads' stack traces into gdb.txt

|

||||||

|

|

||||||

Benchmarking

|

Benchmarking

|

||||||

---

|

---

|

||||||

|

|

||||||

@@ -151,22 +231,26 @@ Run the benchmarks:

|

|||||||

$ cargo +nightly bench --features="unstable"

|

$ cargo +nightly bench --features="unstable"

|

||||||

```

|

```

|

||||||

|

|

||||||

To run the benchmarks on Linux with GPU optimizations enabled:

|

|

||||||

|

|

||||||

```bash

|

|

||||||

$ wget https://solana-build-artifacts.s3.amazonaws.com/v0.5.0/libcuda_verify_ed25519.a

|

|

||||||

$ cargo +nightly bench --features="unstable,cuda"

|

|

||||||

```

|

|

||||||

|

|

||||||

Code coverage

|

Code coverage

|

||||||

---

|

---

|

||||||

|

|

||||||

To generate code coverage statistics, run kcov via Docker:

|

To generate code coverage statistics, install cargo-cov. Note: the tool currently only works

|

||||||

|

in Rust nightly.

|

||||||

|

|

||||||

```bash

|

```bash

|

||||||

$ docker run -it --rm --security-opt seccomp=unconfined --volume "$PWD:/volume" elmtai/docker-rust-kcov

|

$ cargo +nightly install cargo-cov

|

||||||

```

|

```

|

||||||

|

|

||||||

|

Run cargo-cov and generate a report:

|

||||||

|

|

||||||

|

```bash

|

||||||

|

$ cargo +nightly cov test

|

||||||

|

$ cargo +nightly cov report --open

|

||||||

|

```

|

||||||

|

|

||||||

|

The coverage report will be written to `./target/cov/report/index.html`

|

||||||

|

|

||||||

|

|

||||||

Why coverage? While most see coverage as a code quality metric, we see it primarily as a developer

|

Why coverage? While most see coverage as a code quality metric, we see it primarily as a developer

|

||||||

productivity metric. When a developer makes a change to the codebase, presumably it's a *solution* to

|

productivity metric. When a developer makes a change to the codebase, presumably it's a *solution* to

|

||||||

some problem. Our unit-test suite is how we encode the set of *problems* the codebase solves. Running

|

some problem. Our unit-test suite is how we encode the set of *problems* the codebase solves. Running

|

||||||

|

|||||||

1

build.rs

@@ -11,5 +11,6 @@ fn main() {

|

|||||||

}

|

}

|

||||||

if !env::var("CARGO_FEATURE_ERASURE").is_err() {

|

if !env::var("CARGO_FEATURE_ERASURE").is_err() {

|

||||||

println!("cargo:rustc-link-lib=dylib=Jerasure");

|

println!("cargo:rustc-link-lib=dylib=Jerasure");

|

||||||

|

println!("cargo:rustc-link-lib=dylib=gf_complete");

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|||||||

3

ci/.gitignore

vendored

Normal file

@@ -0,0 +1,3 @@

|

|||||||

|

/node_modules/

|

||||||

|

/package-lock.json

|

||||||

|

/snapcraft.credentials

|

||||||

88

ci/README.md

Normal file

@@ -0,0 +1,88 @@

|

|||||||

|

|

||||||

|

Our CI infrastructure is built around [Buildkite](https://buildkite.com) with some

|

||||||

|

additional GitHub integration provided by https://github.com/mvines/ci-gate

|

||||||

|

|

||||||

|

## Agent Queues

|

||||||

|

|

||||||

|

We define two [Agent Queues](https://buildkite.com/docs/agent/v3/queues):

|

||||||

|

`queue=default` and `queue=cuda`. The `default` queue should be favored and

|

||||||

|

runs on lower-cost CPU instances. The `cuda` queue is only necessary for

|

||||||

|

running **tests** that depend on GPU (via CUDA) access -- CUDA builds may still

|

||||||

|

be run on the `default` queue, and the [buildkite artifact

|

||||||

|

system](https://buildkite.com/docs/builds/artifacts) used to transfer build

|

||||||

|

products over to a GPU instance for testing.

|

||||||

|

|

||||||

|

## Buildkite Agent Management

|

||||||

|

|

||||||

|

### Buildkite GCP Setup

|

||||||

|

|

||||||

|

CI runs on Google Cloud Platform via two Compute Engine Instance groups:

|

||||||

|

`ci-default` and `ci-cuda`. Autoscaling is currently disabled and the number of

|

||||||

|

VM Instances in each group is manually adjusted.

|

||||||

|

|

||||||

|

#### Updating a CI Disk Image

|

||||||

|

|

||||||

|

Each Instance group has its own disk image, `ci-default-vX` and

|

||||||

|

`ci-cuda-vY`, where *X* and *Y* are incremented each time the image is changed.

|

||||||

|

|

||||||

|

The process to update a disk image is as follows (TODO: make this less manual):

|

||||||

|

|

||||||

|

1. Create a new VM Instance using the disk image to modify.

|

||||||

|

2. Once the VM boots, ssh to it and modify the disk as desired.

|

||||||

|

3. Stop the VM Instance running the modified disk. Remember the name of the VM disk.

|

||||||

|

4. From another machine, `gcloud auth login`, then create a new Disk Image based

|

||||||

|

off the modified VM Instance:

|

||||||

|

```

|

||||||

|

$ gcloud compute images create ci-default-v5 --source-disk xxx --source-disk-zone us-east1-b

|

||||||

|

```

|

||||||

|

or

|

||||||

|

```

|

||||||

|

$ gcloud compute images create ci-cuda-v5 --source-disk xxx --source-disk-zone us-east1-b

|

||||||

|

```

|

||||||

|

5. Delete the new VM instance.

|

||||||

|

6. Go to the Instance templates tab, find the existing template named

|

||||||

|

`ci-default-vX` or `ci-cuda-vY` and select it. Use the "Copy" button to create

|

||||||

|

a new Instance template called `ci-default-vX+1` or `ci-cuda-vY+1` with the

|

||||||

|

newly created Disk image.

|

||||||

|

7. Go to the Instance Groups tab and find the applicable group, `ci-default` or

|

||||||

|

`ci-cuda`. Edit the Instance Group in two steps: (a) Set the number of

|

||||||

|

instances to 0 and wait for them all to terminate, (b) Update the Instance

|

||||||

|

template and restore the number of instances to the original value.

|

||||||

|

8. Clean up the previous version by deleting it from Instance Templates and

|

||||||

|

Images.

|

||||||
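The naming convention in the steps above (`ci-default-vX` becomes `ci-default-vX+1`) can be sketched as a small helper. This is a minimal illustration, not part of the change set: `next_image_name` and `print_create_image_command` are hypothetical names, the disk name is a placeholder, and the command is only printed so it can be reviewed before being run by hand.

```bash
#!/bin/bash
# Hypothetical helper: compute the next versioned image name,
# e.g. ci-default-v5 -> ci-default-v6.
next_image_name() {
  local current="$1"
  if [[ ! $current =~ ^(.+-v)([0-9]+)$ ]]; then
    echo "Error: '$current' does not end in -v<number>" >&2
    return 1
  fi
  # 10# forces base 10 so a suffix such as "09" is not parsed as octal
  echo "${BASH_REMATCH[1]}$((10#${BASH_REMATCH[2]} + 1))"
}

# Print (rather than run) the image-creation command from step 4.
# The disk name and zone are placeholders supplied by the caller.
print_create_image_command() {
  local current="$1" disk="$2" zone="$3" next
  next=$(next_image_name "$current") || return 1
  echo "gcloud compute images create $next --source-disk $disk --source-disk-zone $zone"
}

print_create_image_command ci-default-v5 my-modified-disk us-east1-b
```

Printing the command keeps the manual review step that the README describes while removing the chance of mistyping the version suffix.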

|

|

||||||

|

|

||||||

|

## Reference

|

||||||

|

|

||||||

|

### Buildkite AWS CloudFormation Setup

|

||||||

|

|

||||||

|

**AWS CloudFormation is currently inactive, although it may be restored in the

|

||||||

|

future**

|

||||||

|

|

||||||

|

AWS CloudFormation can be used to scale machines up and down based on the

|

||||||

|

current CI load. If no machine is currently running it can take up to 60

|

||||||

|

seconds to spin up a new instance; please remain calm during this time.

|

||||||

|

|

||||||

|

#### AMI

|

||||||

|

We use a custom AWS AMI built via https://github.com/solana-labs/elastic-ci-stack-for-aws/tree/solana/cuda.

|

||||||

|

|

||||||

|

Use the following process to update this AMI as dependencies change:

|

||||||

|

```bash

|

||||||

|

$ export AWS_ACCESS_KEY_ID=my_access_key

|

||||||

|

$ export AWS_SECRET_ACCESS_KEY=my_secret_access_key

|

||||||

|

$ git clone https://github.com/solana-labs/elastic-ci-stack-for-aws.git -b solana/cuda

|

||||||

|

$ cd elastic-ci-stack-for-aws/

|

||||||

|

$ make build

|

||||||

|

$ make build-ami

|

||||||

|

```

|

||||||

|

|

||||||

|

Watch for the *"amazon-ebs: AMI:"* log message to extract the name of the new

|

||||||

|

AMI. For example:

|

||||||

|

```

|

||||||

|

amazon-ebs: AMI: ami-07118545e8b4ce6dc

|

||||||

|

```

|

||||||

|

The new AMI should also now be visible in your EC2 Dashboard. Go to the desired

|

||||||

|

AWS CloudFormation stack, update the **ImageId** field to the new AMI id, and

|

||||||

|

*apply* the stack changes.

|

||||||

|

|

||||||

|

|

||||||

@@ -1,37 +1,31 @@

|

|||||||

steps:

|

steps:

|

||||||

- command: "ci/coverage.sh || true"

|

- command: "ci/docker-run.sh rust ci/test-stable.sh"

|

||||||

label: "coverage"

|

name: "stable [public]"

|

||||||

# TODO: Run coverage in a docker image rather than assuming kcov/cargo-kcov

|

timeout_in_minutes: 20

|

||||||

# is installed on the build agent...

|

- command: "ci/shellcheck.sh"

|

||||||

#plugins:

|

name: "shellcheck [public]"

|

||||||

# docker#v1.1.1:

|

timeout_in_minutes: 20

|

||||||

# image: "rust"

|

|

||||||

# user: "998:997" # buildkite-agent:buildkite-agent

|

|

||||||

# environment:

|

|

||||||

# - CODECOV_TOKEN=$CODECOV_TOKEN

|

|

||||||

- command: "ci/test-stable.sh"

|

|

||||||

label: "stable [public]"

|

|

||||||

plugins:

|

|

||||||

docker#v1.1.1:

|

|

||||||

image: "rust"

|

|

||||||

user: "998:997" # buildkite-agent:buildkite-agent

|

|

||||||

- command: "ci/test-nightly.sh || true"

|

|

||||||

label: "nightly - FAILURES IGNORED [public]"

|

|

||||||

plugins:

|

|

||||||

docker#v1.1.1:

|

|

||||||

image: "rustlang/rust:nightly"

|

|

||||||

user: "998:997" # buildkite-agent:buildkite-agent

|

|

||||||

- command: "ci/test-ignored.sh || true"

|

|

||||||

label: "ignored - FAILURES IGNORED [public]"

|

|

||||||

- command: "ci/test-cuda.sh"

|

|

||||||

label: "cuda"

|

|

||||||

- wait

|

- wait

|

||||||

- command: "ci/publish.sh"

|

- command: "ci/docker-run.sh rustlang/rust:nightly ci/test-nightly.sh"

|

||||||

label: "publish release artifacts"

|

name: "nightly [public]"

|

||||||

plugins:

|

timeout_in_minutes: 20

|

||||||

docker#v1.1.1:

|

- command: "ci/test-stable-perf.sh"

|

||||||

image: "rust"

|

name: "stable-perf [public]"

|

||||||

user: "998:997" # buildkite-agent:buildkite-agent

|

timeout_in_minutes: 20

|

||||||

environment:

|

retry:

|

||||||

- BUILDKITE_TAG=$BUILDKITE_TAG

|

automatic:

|

||||||

- CRATES_IO_TOKEN=$CRATES_IO_TOKEN

|

- exit_status: "*"

|

||||||

|

limit: 2

|

||||||

|

agents:

|

||||||

|

- "queue=cuda"

|

||||||

|

- command: "ci/snap.sh [public]"

|

||||||

|

timeout_in_minutes: 20

|

||||||

|

name: "snap [public]"

|

||||||

|

- wait

|

||||||

|

- command: "ci/publish-crate.sh [public]"

|

||||||

|

timeout_in_minutes: 20

|

||||||

|

name: "publish crate"

|

||||||

|

- command: "ci/hoover.sh [public]"

|

||||||

|

timeout_in_minutes: 20

|

||||||

|

name: "clean agent"

|

||||||

|

|

||||||

|

|||||||

@@ -1,25 +0,0 @@

|

|||||||

#!/bin/bash -e

|

|

||||||

|

|

||||||

cd $(dirname $0)/..

|

|

||||||

|

|

||||||

if [[ -r ~/.cargo/env ]]; then

|

|

||||||

# Pick up local install of kcov/cargo-kcov

|

|

||||||

source ~/.cargo/env

|

|

||||||

fi

|

|

||||||

|

|

||||||

rustc --version

|

|

||||||

cargo --version

|

|

||||||

kcov --version

|

|

||||||

cargo-kcov --version

|

|

||||||

|

|

||||||

export RUST_BACKTRACE=1

|

|

||||||

cargo build

|

|

||||||

cargo kcov

|

|

||||||

|

|

||||||

if [[ -z "$CODECOV_TOKEN" ]]; then

|

|

||||||

echo CODECOV_TOKEN undefined

|

|

||||||

exit 1

|

|

||||||

fi

|

|

||||||

|

|

||||||

bash <(curl -s https://codecov.io/bash)

|

|

||||||

exit 0

|

|

||||||

50

ci/docker-run.sh

Executable file

@@ -0,0 +1,50 @@

|

|||||||

|

#!/bin/bash -e

|

||||||

|

|

||||||

|

usage() {

|

||||||

|

echo "Usage: $0 [docker image name] [command]"

|

||||||

|

echo

|

||||||

|

echo Runs command in the specified docker image with

|

||||||

|

echo a CI-appropriate environment

|

||||||

|

echo

|

||||||

|

}

|

||||||

|

|

||||||

|

cd "$(dirname "$0")/.."

|

||||||

|

|

||||||

|

IMAGE="$1"

|

||||||

|

if [[ -z "$IMAGE" ]]; then

|

||||||

|

echo Error: image not defined

|

||||||

|

exit 1

|

||||||

|

fi

|

||||||

|

|

||||||

|

docker pull "$IMAGE"

|

||||||

|

shift

|

||||||

|

|

||||||

|

ARGS=(

|

||||||

|

--workdir /solana

|

||||||

|

--volume "$PWD:/solana"

|

||||||

|

--env "HOME=/solana"

|

||||||

|

--rm

|

||||||

|

)

|

||||||

|

|

||||||

|

ARGS+=(--env "CARGO_HOME=/solana/.cargo")

|

||||||

|

|

||||||

|

# kcov tries to set the personality of the binary which docker

|

||||||

|

# doesn't allow by default.

|

||||||

|

ARGS+=(--security-opt "seccomp=unconfined")

|

||||||

|

|

||||||

|

# Ensure files are created with the current host uid/gid

|

||||||

|

if [[ -z "$SOLANA_DOCKER_RUN_NOSETUID" ]]; then

|

||||||

|

ARGS+=(--user "$(id -u):$(id -g)")

|

||||||

|

fi

|

||||||

|

|

||||||

|

# Environment variables to propagate into the container

|

||||||

|

ARGS+=(

|

||||||

|

--env BUILDKITE_BRANCH

|

||||||

|

--env BUILDKITE_TAG

|

||||||

|

--env CODECOV_TOKEN

|

||||||

|

--env CRATES_IO_TOKEN

|

||||||

|

--env SNAPCRAFT_CREDENTIALS_KEY

|

||||||

|

)

|

||||||

|

|

||||||

|

set -x

|

||||||

|

exec docker run "${ARGS[@]}" "$IMAGE" "$@"

|

||||||
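The script above collects its `docker run` options in a bash array and expands it with `"${ARGS[@]}"`, which keeps arguments containing spaces intact. The following is a reduced sketch of that pattern only: `build_docker_args` and `DOCKER_ARGS` are hypothetical names, and docker is never invoked.

```bash
#!/bin/bash
# Sketch only: mirrors the array-building pattern, but never invokes docker.
# build_docker_args and DOCKER_ARGS are hypothetical names.
build_docker_args() {
  local workdir="$1"
  DOCKER_ARGS=(
    --workdir /solana
    --volume "$workdir:/solana"
    --rm
  )
  # Optional flag, appended only when the opt-out variable is empty or unset
  if [[ -z "${SOLANA_DOCKER_RUN_NOSETUID:-}" ]]; then
    DOCKER_ARGS+=(--user "$(id -u):$(id -g)")
  fi
}

build_docker_args "/tmp/my checkout"
# Quoted expansion keeps "/tmp/my checkout:/solana" as a single argument
printf '<%s>\n' "${DOCKER_ARGS[@]}"
```

Building the list incrementally makes each optional flag a one-line `ARGS+=(...)` addition, which is presumably why the script is structured this way.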

7

ci/docker-snapcraft/Dockerfile

Normal file

@@ -0,0 +1,7 @@

|

|||||||

|

FROM snapcraft/xenial-amd64

|

||||||

|

|

||||||

|

# Update snapcraft to latest version

|

||||||

|

RUN apt-get update -qq \

|

||||||

|

&& apt-get install -y snapcraft \

|

||||||

|

&& rm -rf /var/lib/apt/lists/* \

|

||||||

|

&& snapcraft --version

|

||||||

6

ci/docker-snapcraft/build.sh

Executable file

@@ -0,0 +1,6 @@

|

|||||||

|

#!/bin/bash -ex

|

||||||

|

|

||||||

|

cd "$(dirname "$0")"

|

||||||

|

|

||||||

|

docker build -t solanalabs/snapcraft .

|

||||||

|

docker push solanalabs/snapcraft

|

||||||

57

ci/hoover.sh

Executable file

@@ -0,0 +1,57 @@

|

|||||||

|

#!/bin/bash

|

||||||

|

#

|

||||||

|

# Regular maintenance performed on a buildkite agent to control disk usage

|

||||||

|

#

|

||||||

|

|

||||||

|

echo --- Delete all exited containers first

|

||||||

|

(

|

||||||

|

set -x

|

||||||

|

exited=$(docker ps -aq --no-trunc --filter "status=exited")

|

||||||

|

if [[ -n "$exited" ]]; then

|

||||||

|

# shellcheck disable=SC2086 # Don't want to double quote "$exited"

|

||||||

|

docker rm $exited

|

||||||

|

fi

|

||||||

|

)

|

||||||

|

|

||||||

|

echo --- Delete untagged images

|

||||||

|

(

|

||||||

|

set -x

|

||||||

|

untagged=$(docker images | grep '<none>'| awk '{ print $3 }')

|

||||||

|

if [[ -n "$untagged" ]]; then

|

||||||

|

# shellcheck disable=SC2086 # Don't want to double quote "$untagged"

|

||||||

|

docker rmi $untagged

|

||||||

|

fi

|

||||||

|

)

|

||||||

|

|

||||||

|

echo --- Delete all dangling images

|

||||||

|

(

|

||||||

|

set -x

|

||||||

|

dangling=$(docker images --filter 'dangling=true' -q --no-trunc | sort | uniq)

|

||||||

|

if [[ -n "$dangling" ]]; then

|

||||||

|

# shellcheck disable=SC2086 # Don't want to double quote "$dangling"

|

||||||

|

docker rmi $dangling

|

||||||

|

fi

|

||||||

|

)

|

||||||

|

|

||||||

|

echo --- Remove unused docker networks

|

||||||

|

(

|

||||||

|

set -x

|

||||||

|

docker network prune -f

|

||||||

|

)

|

||||||

|

|

||||||

|

echo "--- Delete /tmp files older than 1 day owned by $(whoami)"

|

||||||

|

(

|

||||||

|

set -x

|

||||||

|

find /tmp -maxdepth 1 -user "$(whoami)" -mtime +1 -print0 | xargs -0 rm -rf

|

||||||

|

)

|

||||||

|

|

||||||

|

echo --- System Status

|

||||||

|

(

|

||||||

|

set -x

|

||||||

|

docker images

|

||||||

|

docker ps

|

||||||

|

docker network ls

|

||||||

|

df -h

|

||||||

|

)

|

||||||

|

|

||||||

|

exit 0

|

||||||
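Each cleanup stage above follows the same shape: collect a list of ids, then call the removal command only if the list is non-empty, leaving the variable unquoted on purpose so it splits into separate arguments. A minimal sketch of that guard, using a hypothetical `run_if_any` helper and `echo` as a stand-in for `docker rm`:

```bash
#!/bin/bash
# Hypothetical helper: run "$@" on a whitespace-separated id list, but only
# when the list is non-empty.
run_if_any() {
  local ids="$1"
  shift
  if [[ -n "$ids" ]]; then
    # shellcheck disable=SC2086 # Word splitting of $ids is intentional
    "$@" $ids
  else
    echo "nothing to do"
  fi
}

# 'echo would-remove' stands in for 'docker rm' so the sketch runs anywhere.
run_if_any "abc123 def456" echo would-remove
run_if_any "" echo would-remove
```

The guard matters because the removal commands expect at least one id; the unquoted expansion is only safe here on the assumption that the ids contain no whitespace or glob characters.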

@@ -1,6 +1,6 @@

|

|||||||

#!/bin/bash -e

|

#!/bin/bash -e

|

||||||

|

|

||||||

cd $(dirname $0)/..

|

cd "$(dirname "$0")/.."

|

||||||

|

|

||||||

if [[ -z "$BUILDKITE_TAG" ]]; then

|

if [[ -z "$BUILDKITE_TAG" ]]; then

|

||||||

# Skip publish if this is not a tagged release

|

# Skip publish if this is not a tagged release

|

||||||

@@ -12,8 +12,8 @@ if [[ -z "$CRATES_IO_TOKEN" ]]; then

|

|||||||

exit 1

|

exit 1

|

||||||

fi

|

fi

|

||||||

|

|

||||||

cargo package

|

|

||||||

# TODO: Ensure the published version matches the contents of BUILDKITE_TAG

|

# TODO: Ensure the published version matches the contents of BUILDKITE_TAG

|

||||||

cargo publish --token "$CRATES_IO_TOKEN"

|

ci/docker-run.sh rust \

|

||||||

|

bash -exc "cargo package; cargo publish --token $CRATES_IO_TOKEN"

|

||||||

|

|

||||||

exit 0

|

exit 0

|

||||||

19

ci/run-local.sh

Executable file

@@ -0,0 +1,19 @@

|

|||||||

|

#!/bin/bash -e

|

||||||

|

#

|

||||||

|

# Run the entire buildkite CI pipeline locally for pre-testing before sending a

|

||||||

|

# GitHub pull request

|

||||||

|

#

|

||||||

|

|

||||||

|

cd "$(dirname "$0")/.."

|

||||||

|

BKRUN=ci/node_modules/.bin/bkrun

|

||||||

|

|

||||||

|

if [[ ! -x $BKRUN ]]; then

|

||||||

|

(

|

||||||

|

set -x

|

||||||

|

cd ci/

|

||||||

|

npm install bkrun

|

||||||

|

)

|

||||||

|

fi

|

||||||

|

|

||||||

|

set -x

|

||||||

|

exec ./ci/node_modules/.bin/bkrun ci/buildkite.yml

|

||||||

11

ci/shellcheck.sh

Executable file

@@ -0,0 +1,11 @@

|

|||||||

|

#!/bin/bash -e

|

||||||

|

#

|

||||||

|

# Reference: https://github.com/koalaman/shellcheck/wiki/Directive

|

||||||

|

|

||||||

|

cd "$(dirname "$0")/.."

|

||||||

|

|

||||||

|

set -x

|

||||||

|

find . -name "*.sh" -not -regex ".*/.cargo/.*" -not -regex ".*/node_modules/.*" -print0 \

|

||||||

|

| xargs -0 \

|

||||||

|

ci/docker-run.sh koalaman/shellcheck --color=always --external-sources --shell=bash

|

||||||

|

exit 0

|

||||||
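The `find ... -print0 | xargs -0` pairing above passes file names separated by NUL bytes, so paths containing spaces survive the pipe. A self-contained sketch of the same selection logic follows; `list_shell_scripts` is a hypothetical name, and `basename` stands in for the shellcheck invocation so no container is needed.

```bash
#!/bin/bash
# Hypothetical helper: list *.sh files under a directory, skipping anything
# below node_modules, as NUL-separated paths.
list_shell_scripts() {
  find "$1" -name "*.sh" -not -regex ".*/node_modules/.*" -print0
}

# Build a throwaway tree containing one script to keep and one to skip.
tmp=$(mktemp -d)
mkdir -p "$tmp/ci" "$tmp/node_modules/pkg"
touch "$tmp/ci/my script.sh" "$tmp/node_modules/pkg/skip.sh"

# basename stands in for the real linter; -0 matches find's -print0.
list_shell_scripts "$tmp" | xargs -0 -n1 basename

rm -rf "$tmp"
```

Only `my script.sh` is listed, and it arrives as one argument despite the space in its name.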

40

ci/snap.sh

Executable file

@@ -0,0 +1,40 @@

|

|||||||

|

#!/bin/bash -e

|

||||||

|

|

||||||

|

cd "$(dirname "$0")/.."

|

||||||

|

|

||||||

|

DRYRUN=

|

||||||

|

if [[ -z $BUILDKITE_BRANCH || $BUILDKITE_BRANCH =~ pull/* ]]; then

|

||||||

|

DRYRUN="echo"

|

||||||

|

fi

|

||||||

|

|

||||||

|

if [[ -z "$BUILDKITE_TAG" ]]; then

|

||||||

|

SNAP_CHANNEL=edge

|

||||||

|

else

|

||||||

|

SNAP_CHANNEL=beta

|

||||||

|

fi

|

||||||

|

|

||||||

|

if [[ -z $DRYRUN ]]; then

|

||||||

|

[[ -n $SNAPCRAFT_CREDENTIALS_KEY ]] || {

|

||||||

|

echo SNAPCRAFT_CREDENTIALS_KEY not defined

|

||||||

|

exit 1;

|

||||||

|

}

|

||||||

|

(

|

||||||

|

openssl aes-256-cbc -d \

|

||||||

|

-in ci/snapcraft.credentials.enc \

|

||||||

|

-out ci/snapcraft.credentials \

|

||||||

|

-k "$SNAPCRAFT_CREDENTIALS_KEY"

|

||||||

|

|

||||||

|

snapcraft login --with ci/snapcraft.credentials

|

||||||

|

) || {

|

||||||

|

rm -f ci/snapcraft.credentials;

|

||||||

|

exit 1

|

||||||

|

}

|

||||||

|

fi

|

||||||

|

|

||||||

|

set -x

|

||||||

|

|

||||||

|

echo --- build

|

||||||

|

snapcraft

|

||||||

|

|

||||||

|

echo --- publish

|

||||||

|

$DRYRUN snapcraft push solana_*.snap --release $SNAP_CHANNEL

|

||||||
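The script above makes two decisions before publishing: which snap channel to release to, and whether to prefix the publish command with `echo` as a dry run. The sketch below restates both as functions with hypothetical names (`snap_channel`, `dryrun_prefix`). One point to be aware of: in bash the right-hand side of `=~` is an unanchored regular expression, so `pull/*` matches any branch name that contains `pull`. The sketch anchors the pattern instead, on the assumption that pull-request branches are named like `pull/123/head`.

```bash
#!/bin/bash
# Hypothetical helpers restating the selection logic as functions.

# Tagged builds release to "beta"; everything else goes to "edge".
snap_channel() {
  local tag="$1"
  if [[ -z "$tag" ]]; then
    echo edge
  else
    echo beta
  fi
}

# Prints "echo" (dry run) for local runs and pull requests, else nothing.
# Assumption: pull-request branches look like "pull/123/head", so the
# pattern is anchored with ^ here.
dryrun_prefix() {
  local branch="$1"
  if [[ -z "$branch" || $branch =~ ^pull/ ]]; then
    echo echo
  fi
}

DRYRUN=$(dryrun_prefix "pull/42/head")
# With DRYRUN=echo the command is printed instead of executed.
$DRYRUN snapcraft push "solana_*.snap" --release "$(snap_channel "")"
```

Prefixing the command with a variable that is either empty or `echo` lets the same line serve both the real and the dry-run path.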

BIN

ci/snapcraft.credentials.enc

Normal file

Binary file not shown.

@@ -1,17 +0,0 @@

|

|||||||

#!/bin/bash -e

|

|

||||||

|

|

||||||

cd $(dirname $0)/..

|

|

||||||

|

|

||||||

if [[ -z "$libcuda_verify_ed25519_URL" ]]; then

|

|

||||||

echo libcuda_verify_ed25519_URL undefined

|

|

||||||

exit 1

|

|

||||||

fi

|

|

||||||

|

|

||||||

export LD_LIBRARY_PATH=/usr/local/cuda/lib64

|

|

||||||

export PATH=$PATH:/usr/local/cuda/bin

|

|

||||||

curl -X GET -o libcuda_verify_ed25519.a "$libcuda_verify_ed25519_URL"

|

|

||||||

|

|

||||||

source $HOME/.cargo/env

|

|

||||||

cargo test --features=cuda

|

|

||||||

|

|

||||||

exit 0

|

|

||||||

@@ -1,9 +0,0 @@

|

|||||||

#!/bin/bash -e

|

|

||||||

|

|

||||||

cd $(dirname $0)/..

|

|

||||||

|

|

||||||

rustc --version

|

|

||||||

cargo --version

|

|

||||||

|

|

||||||

export RUST_BACKTRACE=1

|

|

||||||

cargo test -- --ignored

|

|

||||||

@@ -1,13 +1,32 @@

|

|||||||

#!/bin/bash -e

|

#!/bin/bash -e

|

||||||

|

|

||||||

cd $(dirname $0)/..

|

cd "$(dirname "$0")/.."

|

||||||

|

|

||||||

|

export RUST_BACKTRACE=1

|

||||||

rustc --version

|

rustc --version

|

||||||

cargo --version

|

cargo --version

|

||||||

|

|

||||||

rustup component add rustfmt-preview

|

_() {

|

||||||

cargo fmt -- --write-mode=diff

|

echo "--- $*"

|

||||||

cargo build --verbose --features unstable

|

"$@"

|

||||||

cargo test --verbose --features unstable

|

}

|

||||||

|

|

||||||

|

_ cargo build --verbose --features unstable

|

||||||

|

_ cargo test --verbose --features unstable

|

||||||

|

_ cargo bench --verbose --features unstable

|

||||||

|

|

||||||

|

|

||||||

|

# Coverage ...

|

||||||

|

_ cargo install --force cargo-cov

|

||||||

|

_ cargo cov test

|

||||||

|

_ cargo cov report

|

||||||

|

|

||||||

|

echo --- Coverage report:

|

||||||

|

ls -l target/cov/report/index.html

|

||||||

|

|

||||||

|

if [[ -z "$CODECOV_TOKEN" ]]; then

|

||||||

|

echo CODECOV_TOKEN undefined

|

||||||

|

else

|

||||||

|

bash <(curl -s https://codecov.io/bash) -x 'llvm-cov gcov'

|

||||||

|

fi

|

||||||

|

|

||||||

exit 0

|

|

||||||

|

|||||||

12

ci/test-stable-perf.sh

Executable file

@@ -0,0 +1,12 @@

|

|||||||

|

#!/bin/bash -e

|

||||||

|

|

||||||

|

cd "$(dirname "$0")/.."

|

||||||

|

|

||||||

|

./fetch-perf-libs.sh

|

||||||

|

|

||||||

|

export LD_LIBRARY_PATH=$PWD:/usr/local/cuda/lib64

|

||||||

|

export PATH=$PATH:/usr/local/cuda/bin

|

||||||

|

export RUST_BACKTRACE=1

|

||||||

|

|

||||||

|

set -x

|

||||||

|

exec cargo test --features=cuda,erasure

|

||||||

@@ -1,13 +1,18 @@

|

|||||||

#!/bin/bash -e

|

#!/bin/bash -e

|

||||||

|

|

||||||

cd $(dirname $0)/..

|

cd "$(dirname "$0")/.."

|

||||||

|

|

||||||

|

export RUST_BACKTRACE=1

|

||||||

rustc --version

|

rustc --version

|

||||||

cargo --version

|

cargo --version

|

||||||

|

|

||||||

rustup component add rustfmt-preview

|

_() {

|

||||||

cargo fmt -- --write-mode=diff

|

echo "--- $*"

|

||||||

cargo build --verbose

|

"$@"

|

||||||

cargo test --verbose

|

}

|

||||||

|

|

||||||

exit 0

|

_ rustup component add rustfmt-preview

|

||||||

|

_ cargo fmt -- --write-mode=diff

|

||||||

|

_ cargo build --verbose

|

||||||

|

_ cargo test --verbose

|

||||||

|

_ cargo test -- --ignored

|

||||||

|

|||||||
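The `_` helper introduced in the hunks above prints a `--- <command>` line and then runs the command; Buildkite treats lines starting with `---` as collapsible log section headers. A standalone sketch of the wrapper, with harmless commands substituted for the cargo invocations:

```bash
#!/bin/bash
# Print a "--- <command>" section header, then run the command unchanged.
_() {
  echo "--- $*"
  "$@"
}

# Stand-ins for the real build steps.
_ echo "hello world"
_ true
```

Because the wrapper ends with `"$@"`, its exit status is that of the wrapped command, so scripts started with `bash -e` still stop at the first failing step.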

@@ -1,65 +0,0 @@

|

|||||||

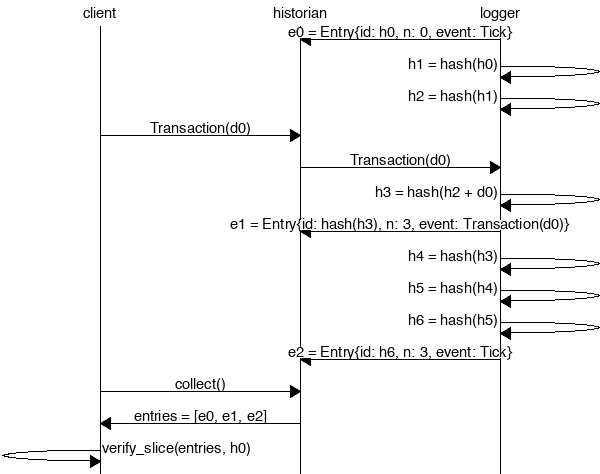

The Historian

|

|

||||||

===

|

|

||||||

|

|

||||||

Create a *Historian* and send it *events* to generate an *event log*, where each *entry*

|

|

||||||

is tagged with the historian's latest *hash*. Then ensure the order of events was not tampered

|

|

||||||

with by verifying each entry's hash can be generated from the hash in the previous entry:

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

```rust

|

|

||||||

extern crate solana;

|

|

||||||

|

|

||||||

use solana::historian::Historian;

|

|

||||||

use solana::ledger::{Block, Entry, Hash};

|

|

||||||

use solana::event::{generate_keypair, get_pubkey, sign_claim_data, Event};

|

|

||||||

use std::thread::sleep;

|

|

||||||

use std::time::Duration;

|

|

||||||

use std::sync::mpsc::SendError;

|

|

||||||

|

|

||||||

fn create_ledger(hist: &Historian<Hash>) -> Result<(), SendError<Event<Hash>>> {

|

|

||||||

sleep(Duration::from_millis(15));

|

|

||||||

let tokens = 42;

|

|

||||||

let keypair = generate_keypair();

|

|

||||||

let event0 = Event::new_claim(get_pubkey(&keypair), tokens, sign_claim_data(&tokens, &keypair));

|

|

||||||

hist.sender.send(event0)?;

|

|

||||||

sleep(Duration::from_millis(10));

|

|

||||||

Ok(())

|

|

||||||

}

|

|

||||||

|

|

||||||

fn main() {

|

|

||||||

let seed = Hash::default();

|

|

||||||

let hist = Historian::new(&seed, Some(10));

|

|

||||||

create_ledger(&hist).expect("send error");

|

|

||||||

drop(hist.sender);

|

|

||||||

let entries: Vec<Entry<Hash>> = hist.receiver.iter().collect();

|

|

||||||