Compare commits

455 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| e6c3c215ab | |||

| 5c66bbde01 | |||

| 6268d540a8 | |||

| 3cfb571356 | |||

| f6e5f2439d | |||

| edf6272374 | |||

| 7f6a4b0ce3 | |||

| 3be5f25f2f | |||

| 1b6cdd5637 | |||

| f752e55929 | |||

| ebb089b3f1 | |||

| ad6303f031 | |||

| 828b9d6717 | |||

| 444adcd1ca | |||

| 69ac305883 | |||

| 2ff57df2a0 | |||

| 7077f4cbe2 | |||

| 266f85f607 | |||

| d90ab90145 | |||

| 48018b3f5b | |||

| 15584e7062 | |||

| d415b17146 | |||

| 9ed953e8c3 | |||

| b60a98bd6e | |||

| a15e30d4b3 | |||

| d5d133353f | |||

| 6badc98510 | |||

| ea8bfb46ce | |||

| 58860ed19f | |||

| 583f652197 | |||

| 3215dcff78 | |||

| 38fdd17067 | |||

| 807ccd15ba | |||

| 1c923d2f9e | |||

| 2676b21400 | |||

| fd5ef94b5a | |||

| 02c7eea236 | |||

| 34d1805b54 | |||

| 753eaa8266 | |||

| 0b39c6f98e | |||

| 55b8d0db4d | |||

| 3d7969d8a2 | |||

| 041de8082a | |||

| 3da1fa4d88 | |||

| 39df21de30 | |||

| 8cbb7d7362 | |||

| 10a0c47210 | |||

| 89bf3765f3 | |||

| 8181bc591b | |||

| ca877e689c | |||

| c6048e2bab | |||

| 60015aee04 | |||

| 43e6741071 | |||

| b91f6bcbff | |||

| 64e2f1b949 | |||

| 13a2f05776 | |||

| 903374ae9b | |||

| d366a07403 | |||

| e94921174a | |||

| dea5ab2f79 | |||

| 5e11078f34 | |||

| d7670cd4ff | |||

| 29f3230089 | |||

| d003efb522 | |||

| 97e772e87a | |||

| 0b33615979 | |||

| 249cead13e | |||

| 7c96dea359 | |||

| 374c9921fd | |||

| fb55ab8c33 | |||

| 13485074ac | |||

| 4944c965e4 | |||

| 83c5b3bc38 | |||

| 7fc42de758 | |||

| 0a30bd74c1 | |||

| 9b12a79c8d | |||

| 0dcde23b05 | |||

| 8dc15b88eb | |||

| d20c952f92 | |||

| c2eeeb27fd | |||

| 180d8b67e4 | |||

| 9c989c46ee | |||

| 51633f509d | |||

| 705228ecc2 | |||

| 740f6d2258 | |||

| 3b9ef5ccab | |||

| ab74e7f24f | |||

| be9a670fb7 | |||

| 6e43e7a146 | |||

| ab2093926a | |||

| 916b90f415 | |||

| 2ef3db9fab | |||

| 6987b6fd58 | |||

| 078179e9b8 | |||

| 50ccecdff5 | |||

| e838a8c28a | |||

| e5f7eeedbf | |||

| d1948b5a00 | |||

| c07f700c53 | |||

| c934a30f66 | |||

| 310d01d8a2 | |||

| f330739bc7 | |||

| 58626721ad | |||

| 584c8c07b8 | |||

| a93ec03d2c | |||

| 7bd3a8e004 | |||

| 912a5f951e | |||

| 6869089111 | |||

| 6fd32fe850 | |||

| 81e2b36d38 | |||

| 7d811afab1 | |||

| 39f5aaab8b | |||

| 5fc81dd6c8 | |||

| 491a530d90 | |||

| c12da50f9b | |||

| 41e8500fc5 | |||

| a7f59ef3c1 | |||

| f4466c8c0a | |||

| bc6d6b20fa | |||

| 01326936e6 | |||

| c960e8d351 | |||

| fc69d31914 | |||

| 8d425e127b | |||

| 3cfb07ea38 | |||

| 76679ffb92 | |||

| dc2ec925d7 | |||

| 81d6ba3ec5 | |||

| 014bdaa355 | |||

| 0c60fdd2ce | |||

| 43d986d14e | |||

| 123d7c6a37 | |||

| 5ac7df17f9 | |||

| bc0dde696a | |||

| c323bd3c87 | |||

| 5c672adc21 | |||

| 2f80747dc7 | |||

| 95749ed0e3 | |||

| 94eea3abec | |||

| fe32159673 | |||

| 07aa2e1260 | |||

| 6fec8fad57 | |||

| 84df487f7d | |||

| 49708e92d3 | |||

| daadae7987 | |||

| 2b788d06b7 | |||

| 90cd9bd533 | |||

| d63506f98c | |||

| 17de6876bb | |||

| fc540395f9 | |||

| da2b4962a9 | |||

| 3abe305a21 | |||

| 46e8c09bd8 | |||

| e683c34a89 | |||

| 54e4f75081 | |||

| 9f256f0929 | |||

| ef169a6652 | |||

| eaec25f940 | |||

| 6a87d8975c | |||

| b8cf5f9427 | |||

| 2f1e585446 | |||

| f9309b46aa | |||

| 22f5985f1b | |||

| c59c38e50e | |||

| 232e1bb8a3 | |||

| 1fbb34620c | |||

| 89f5b803c9 | |||

| 55179101cd | |||

| 132495b1fc | |||

| a03d7bf5cd | |||

| 3bf225e85f | |||

| cc2bb290c4 | |||

| 878ca8c5c5 | |||

| 4bc41d81ee | |||

| f6ca176fc8 | |||

| 0bec360a31 | |||

| 04f30710c5 | |||

| 98c0a2af87 | |||

| 9db42c1769 | |||

| 849bced602 | |||

| 27f29019ef | |||

| 8642a41f2b | |||

| bf902ef5bc | |||

| 7656b55c22 | |||

| 7d3d4b9443 | |||

| 15c093c5e2 | |||

| 116166f62d | |||

| 26b19dde75 | |||

| c8ddc68f13 | |||

| 7c9681007c | |||

| 13206e4976 | |||

| 2f18302d32 | |||

| ddb21d151d | |||

| c64a9fb456 | |||

| ee19b4f86e | |||

| 14239e584f | |||

| 112aecf6eb | |||

| c1783d77d7 | |||

| f089abb3c5 | |||

| 8e551f5e32 | |||

| 290960c3b5 | |||

| 62af09adbe | |||

| e39c0b34e5 | |||

| 8ad90807ee | |||

| 533b3170a7 | |||

| 7732f3f5fb | |||

| f52f02a434 | |||

| 4d7d4d673e | |||

| 9a437f0d38 | |||

| c385f8bb6e | |||

| fa44be2a9d | |||

| 117ab0c141 | |||

| 7488d19ae6 | |||

| 60524ad5f2 | |||

| fad7ff8bf0 | |||

| 383d445ba1 | |||

| 803dcb0800 | |||

| fde320e2f2 | |||

| 8ea97141ea | |||

| 9f232bac58 | |||

| 8295cc11c0 | |||

| 70f80adb9a | |||

| 9a7cac1e07 | |||

| c584a25ec9 | |||

| bff32bf7bc | |||

| d0e7450389 | |||

| 4da89ac8a9 | |||

| f7032f7d9a | |||

| 7c7e3931a0 | |||

| 6be3d62d89 | |||

| 6f509a8a1e | |||

| 4379fabf16 | |||

| 6b66e1a077 | |||

| c11a3e0fdc | |||

| 3418033c55 | |||

| caa9a846ed | |||

| 8ee76bcea0 | |||

| 47325cbe01 | |||

| e0c8417297 | |||

| 9238ee9572 | |||

| 64af37e0cd | |||

| 9f9b79f30b | |||

| 265f41887f | |||

| 4f09e5d04c | |||

| 434f321336 | |||

| f4e0d1be58 | |||

| e5bae0604b | |||

| e7da083c31 | |||

| 367c32dabe | |||

| e054238af6 | |||

| e8faf6d59a | |||

| baa4ea3cd8 | |||

| 75ef0f0329 | |||

| 65185c0011 | |||

| eb94613d7d | |||

| 67f4f4fb49 | |||

| a7ecf4ac4c | |||

| 45765b625a | |||

| aa0a184ebe | |||

| 069f9f0d5d | |||

| c82b520ea8 | |||

| 9d6e5bde4a | |||

| 0eb3669fbf | |||

| 30449b6054 | |||

| f5f71a19b8 | |||

| 0135971769 | |||

| 8579795c40 | |||

| 9d77fd7eec | |||

| 8c40d1bd72 | |||

| 7a0bc7d888 | |||

| 1e07014f86 | |||

| 49281b24e5 | |||

| a8b1980de4 | |||

| b8cd5f0482 | |||

| cc9f0788aa | |||

| 209910299d | |||

| 17926ff5d9 | |||

| 957fb0667c | |||

| 8d17aed785 | |||

| 7ef8d5ddde | |||

| 9930a2e167 | |||

| a86be9ebf2 | |||

| ad6665c8b6 | |||

| 923162ae9d | |||

| dd2bd67049 | |||

| d500bbff04 | |||

| e759bd1a99 | |||

| 94daf4cea4 | |||

| 2379792e0a | |||

| dba6d7a8a6 | |||

| 086c206b76 | |||

| 5dd567deef | |||

| b6d8f737ca | |||

| 491ba9da84 | |||

| a420a9293f | |||

| c1bc5f6a07 | |||

| 9834c251d0 | |||

| 54340ed4c6 | |||

| 96a0a9202c | |||

| a4c081d3a1 | |||

| d1b6206858 | |||

| 0eb6849fe3 | |||

| b725fdb093 | |||

| 1436bb1ff2 | |||

| 5a44c36b1f | |||

| 5d990502cb | |||

| 64735da716 | |||

| 95b82aa6dc | |||

| f09952f3d7 | |||

| b98e04dc56 | |||

| cb436250da | |||

| 4376032e3a | |||

| c231331e05 | |||

| 624c151ca2 | |||

| 5d0356f74b | |||

| b019416518 | |||

| 4fcd9e3bd6 | |||

| 66bf889c39 | |||

| a2811842c8 | |||

| 1929601425 | |||

| 282afee47e | |||

| e701ccc949 | |||

| 6543497c17 | |||

| 7d9af5a937 | |||

| 720c54a5bb | |||

| 5dca3c41f2 | |||

| 929546f60b | |||

| cb0ce9986c | |||

| 064eba00fd | |||

| a4336a39d6 | |||

| 298989c4b9 | |||

| 48c28c2267 | |||

| d76ecbc9c9 | |||

| 79fb9c00aa | |||

| c9e03f37ce | |||

| aa5f1699a7 | |||

| e1e9126d03 | |||

| 672a4b3723 | |||

| 955f76baab | |||

| 7da8a5e2d1 | |||

| ff82fbf112 | |||

| 8503a0a58f | |||

| b1e9512f44 | |||

| 608def9c78 | |||

| bcb21bc1d8 | |||

| f63096620a | |||

| 9b26892bae | |||

| 572475ce14 | |||

| 876d7995e1 | |||

| b8655e30d4 | |||

| 7cf0d55546 | |||

| ce60b960c0 | |||

| cebcb5b92d | |||

| 11a0f96f5e | |||

| 74ebaf1744 | |||

| f7496ea6d1 | |||

| bebba7dc1f | |||

| afb2bf442c | |||

| c7de48c982 | |||

| f906112c03 | |||

| 8ef864fb39 | |||

| 1c9b5ab53c | |||

| c10faae3b5 | |||

| 2104dd5a0a | |||

| fbe64037db | |||

| d8c50b150c | |||

| 8871bb2d8e | |||

| a148454376 | |||

| be518b569b | |||

| c998fbe2ae | |||

| 9f12cd0c09 | |||

| 0d0fee1ca1 | |||

| a0410c4677 | |||

| 8fe464cfa3 | |||

| 3e2d6d9e8b | |||

| 32d677787b | |||

| dfd1c4eab3 | |||

| 36bb1f989d | |||

| 684f4c59e0 | |||

| 1b77e8a69a | |||

| 662e10c3e0 | |||

| c935fdb12f | |||

| 9e16937914 | |||

| f705202381 | |||

| f5532ad9f7 | |||

| 570e71f050 | |||

| c9cc4b4369 | |||

| 7111aa3b18 | |||

| 12eba4bcc7 | |||

| 4610de8fdd | |||

| 3fcc2dd944 | |||

| 8299bae2d4 | |||

| 604ccf7552 | |||

| f3dd47948a | |||

| c3bb207488 | |||

| 9009d1bfb3 | |||

| fa4d9e8bcb | |||

| 34b77efc87 | |||

| 5ca0ccbcd2 | |||

| 6aa4e52480 | |||

| f98e9a2ad7 | |||

| c6134cc25b | |||

| 0443b39264 | |||

| 8b0b8efbcb | |||

| 97449cee43 | |||

| ab5252c750 | |||

| 05a27cb34d | |||

| b02eab57d2 | |||

| b8d52cc3e4 | |||

| 7d9bab9508 | |||

| 944181a30e | |||

| d8dd50505a | |||

| d78082f5e4 | |||

| 08e501e57b | |||

| 29a607427d | |||

| afb830c91f | |||

| c1326ac3d5 | |||

| 513a1adf57 | |||

| 7871b38c80 | |||

| b34d2d7dee | |||

| d7dfa8c22d | |||

| 8df274f0af | |||

| 07c4ebb7f2 | |||

| 49605b257d | |||

| fa4e232d73 | |||

| bd84cf6586 | |||

| 6e37f70d55 | |||

| d97112d7f0 | |||

| e57bba17c1 | |||

| 959da300cc | |||

| ba90e43f72 | |||

| 6effd64ab0 | |||

| e18da7c7c1 | |||

| 0297edaf1f | |||

| b317d13b44 | |||

| bb22522e45 | |||

| 41053b6d0b | |||

| bd3fe5fac9 | |||

| 10a70a238b | |||

| 0bead4d410 | |||

| 4a7156de43 | |||

| d88d1b2a09 | |||

| a7186328e0 | |||

| 5e3c7816bd | |||

| a2fa60fa31 | |||

| ceb65c2669 | |||

| fd209ef1a9 | |||

| 471f036444 | |||

| 6ec0e5834c | |||

| 4c94754661 | |||

| 831e2cbdc9 | |||

| 3550f703c3 | |||

| ea1d57b461 | |||

| 49386309c8 | |||

| b7a95ab7cc | |||

| bf35b730de |

2

.codecov.yml

Normal file

@@ -0,0 +1,2 @@

ignore:
- "src/bin"

1

.gitattributes

vendored

Normal file

@@ -0,0 +1 @@

*.a filter=lfs diff=lfs merge=lfs -text

3

.gitignore

vendored

@@ -1,4 +1,3 @@

Cargo.lock
/target/
**/*.rs.bk
Cargo.lock

65

Cargo.toml

@@ -1,19 +1,70 @@

[package]
name = "silk"
description = "A silky smooth implementation of the Loom architecture"
version = "0.1.1"
name = "solana"
description = "High Performance Blockchain"
version = "0.5.0-beta"
documentation = "https://docs.rs/solana"
homepage = "http://solana.io/"
repository = "https://github.com/solana-labs/solana"
authors = [
    "Anatoly Yakovenko <aeyakovenko@gmail.com>",
    "Greg Fitzgerald <garious@gmail.com>",
    "Anatoly Yakovenko <anatoly@solana.io>",
    "Greg Fitzgerald <greg@solana.io>",
    "Stephen Akridge <stephen@solana.io>",
]
license = "Apache-2.0"

[[bin]]
name = "solana-historian-demo"
path = "src/bin/historian-demo.rs"

[[bin]]
name = "solana-client-demo"
path = "src/bin/client-demo.rs"

[[bin]]
name = "solana-testnode"
path = "src/bin/testnode.rs"

[[bin]]
name = "solana-genesis"
path = "src/bin/genesis.rs"

[[bin]]
name = "solana-genesis-demo"
path = "src/bin/genesis-demo.rs"

[[bin]]
name = "solana-mint"
path = "src/bin/mint.rs"

[[bin]]
name = "solana-mint-demo"
path = "src/bin/mint-demo.rs"

[badges]
codecov = { repository = "loomprotocol/silk", branch = "master", service = "github" }
codecov = { repository = "solana-labs/solana", branch = "master", service = "github" }

[features]
unstable = []
ipv6 = []
cuda = []
erasure = []

[dependencies]
rayon = "1.0.0"
itertools = "0.7.6"
sha2 = "0.7.0"
generic-array = { version = "0.9.0", default-features = false, features = ["serde"] }
serde = "1.0.27"
serde_derive = "1.0.27"
serde_json = "1.0.10"
ring = "0.12.1"
untrusted = "0.5.1"
bincode = "1.0.0"
chrono = { version = "0.4.0", features = ["serde"] }
log = "^0.4.1"
env_logger = "^0.4.1"
matches = "^0.1.6"
byteorder = "^1.2.1"
libc = "^0.2.1"
getopts = "^0.2"
isatty = "0.1"
futures = "0.1"

2

LICENSE

@@ -1,4 +1,4 @@

Copyright 2018 Anatoly Yakovenko <anatoly@loomprotocol.com> and Greg Fitzgerald <garious@gmail.com>
Copyright 2018 Anatoly Yakovenko, Greg Fitzgerald and Stephen Akridge

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.

140

README.md

@@ -1,22 +1,99 @@

[](https://crates.io/crates/silk)
[](https://docs.rs/silk)
[](https://travis-ci.org/loomprotocol/silk)
[](https://codecov.io/gh/loomprotocol/silk)
[](https://crates.io/crates/solana)
[](https://docs.rs/solana)
[](https://travis-ci.org/solana-labs/solana)
[](https://codecov.io/gh/solana-labs/solana)

# Silk, A Silky Smooth Implementation of the Loom Architecture
Disclaimer
===

Loom is a new architecture for a high performance blockchain. Its whitepaper boasts a theoretical
throughput of 710k transactions per second on a 1 gbps network. The first implementation of the
whitepaper is happening in the 'loomprotocol/loom' repository. That repo is aggressively moving
forward, looking to de-risk technical claims as quickly as possible. This repo is quite a bit
different philosophically. Here we assume the Loom architecture is sound and worthy of building
a community around. We care a great deal about quality, clarity and short learning curve. We
avoid the use of `unsafe` Rust and write tests for *everything*. Optimizations are only
added when corresponding benchmarks are also added that demonstrate real performance boosts. We
expect the feature set here will always be a long ways behind the loom repo, but that this is
an implementation you can take to the bank, literally.

All claims, content, designs, algorithms, estimates, roadmaps, specifications, and performance measurements described in this project are done with the author's best effort. It is up to the reader to check and validate their accuracy and truthfulness. Furthermore nothing in this project constitutes a solicitation for investment.

# Developing
Solana: High Performance Blockchain
===

Solana™ is a new architecture for a high performance blockchain. It aims to support
over 700 thousand transactions per second on a gigabit network.

Introduction
===

It's possible for a centralized database to process 710,000 transactions per second on a standard gigabit network if the transactions are, on average, no more than 178 bytes. A centralized database can also replicate itself and maintain high availability without significantly compromising that transaction rate using the distributed system technique known as Optimistic Concurrency Control [H.T.Kung, J.T.Robinson (1981)]. At Solana, we're demonstrating that these same theoretical limits apply just as well to blockchain on an adversarial network. The key ingredient? Finding a way to share time when nodes can't trust one another. Once nodes can trust time, suddenly ~40 years of distributed systems research becomes applicable to blockchain! Furthermore, and much to our surprise, it can be implemented using a mechanism that has existed in Bitcoin since day one. The Bitcoin feature is called nLocktime and it can be used to postdate transactions using block height instead of a timestamp. As a Bitcoin client, you'd use block height instead of a timestamp if you don't trust the network. Block height turns out to be an instance of what's being called a Verifiable Delay Function in cryptography circles. It's a cryptographically secure way to say time has passed. In Solana, we use a far more granular verifiable delay function, a SHA-256 hash chain, to checkpoint the ledger and coordinate consensus. With it, we implement Optimistic Concurrency Control and are now well en route to that theoretical limit of 710,000 transactions per second.
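As a rough check on the figures quoted above, the ceiling is simply link bandwidth divided by transaction size. The sketch below assumes a gigabit link carries 10^9 bits per second and ignores all framing and protocol overhead; `max_tps` is a hypothetical helper for illustration, not part of the codebase.

```rust
/// Upper bound on transactions per second for a link of `bits_per_sec`
/// carrying transactions of `tx_bytes` bytes each.
/// Assumption: no framing, header, or protocol overhead is counted.
/// (Illustrative helper; not part of the solana crate.)
fn max_tps(bits_per_sec: u64, tx_bytes: u64) -> u64 {
    let bytes_per_sec = bits_per_sec / 8;
    // Integer division rounds down: a partial transaction does not count.
    bytes_per_sec / tx_bytes
}
```

Under these assumptions, `max_tps(1_000_000_000, 178)` comes to about 702,000 and `max_tps(1_000_000_000, 176)` to about 710,000, so the quoted rate and transaction size agree to within a couple of bytes per transaction.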

Running the demo
===

First, install Rust's package manager Cargo.

```bash
$ curl https://sh.rustup.rs -sSf | sh
$ source $HOME/.cargo/env
```

If you plan to run with GPU optimizations enabled (not recommended), you'll need a CUDA library stored in git LFS. Install git-lfs here:

https://git-lfs.github.com/

Now check out the code from GitHub:

```bash
$ git clone https://github.com/solana-labs/solana.git
$ cd solana
```

The testnode server is initialized with a ledger from stdin and
generates new ledger entries on stdout. To create the input ledger, we'll need
to create *the mint* and use it to generate a *genesis ledger*. It's done in
two steps because the mint-demo.json file contains private keys that will be
used later in this demo.

```bash
$ echo 1000000000 | cargo run --release --bin solana-mint-demo > mint-demo.json
$ cat mint-demo.json | cargo run --release --bin solana-genesis-demo > genesis.log
```

Now you can start the server:

```bash
$ cat genesis.log | cargo run --release --bin solana-testnode > transactions0.log
```

Wait a few seconds for the server to initialize. It will print "Ready." when it's safe
to start sending it transactions.

Then, in a separate shell, let's execute some transactions. Note we pass in
the JSON configuration file here, not the genesis ledger.

```bash
$ cat mint-demo.json | cargo run --release --bin solana-client-demo
```

Now kill the server with Ctrl-C, and take a look at the ledger. You should
see something similar to:

```json
{"num_hashes":27,"id":[0, "..."],"event":"Tick"}
{"num_hashes":3,"id":[67, "..."],"event":{"Transaction":{"tokens":42}}}
{"num_hashes":27,"id":[0, "..."],"event":"Tick"}
```

Now restart the server from where we left off. Pass it both the genesis ledger, and
the transaction ledger.

```bash
$ cat genesis.log transactions0.log | cargo run --release --bin solana-testnode > transactions1.log
```

Lastly, run the client demo again, and verify that all funds were spent in the
previous round, and so no additional transactions are added.

```bash
$ cat mint-demo.json | cargo run --release --bin solana-client-demo
```

Stop the server again, and verify there are only Tick entries, and no Transaction entries.
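One way to make that last check without reading the log by eye is to count entries by kind. The sketch below is only an illustration: it assumes each ledger line is a JSON object shaped like the sample output above, with an `"event"` field that is either `"Tick"` or an object keyed by `"Transaction"`, and it uses plain substring matching rather than a JSON parser. `count_entries` is a hypothetical helper, not part of the codebase.

```rust
/// Count `(ticks, transactions)` in a ledger log with one JSON entry per line.
/// Assumption: entries are serialized without whitespace around the colon,
/// as in the sample output; lines matching neither pattern are ignored.
fn count_entries(log: &str) -> (usize, usize) {
    let mut ticks = 0;
    let mut transactions = 0;
    for line in log.lines() {
        if line.contains("\"event\":\"Tick\"") {
            ticks += 1;
        } else if line.contains("\"event\":{\"Transaction\"") {
            transactions += 1;
        }
    }
    (ticks, transactions)
}
```

Reading `transactions1.log` with `std::fs::read_to_string` and passing the contents to `count_entries` should then report zero transactions if the second round added none.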

Developing
===

Building
---

@@ -32,8 +109,8 @@ $ rustup component add rustfmt-preview

Download the source code:

```bash
$ git clone https://github.com/loomprotocol/silk.git
$ cd silk
$ git clone https://github.com/solana-labs/solana.git
$ cd solana
```

Testing

@@ -59,3 +136,30 @@ Run the benchmarks:

```bash
$ cargo +nightly bench --features="unstable"
```

To run the benchmarks on Linux with GPU optimizations enabled:

```bash
$ cargo +nightly bench --features="unstable,cuda"
```

Code coverage
---

To generate code coverage statistics, run kcov via Docker:

```bash
$ docker run -it --rm --security-opt seccomp=unconfined --volume "$PWD:/volume" elmtai/docker-rust-kcov
```

Why coverage? While most see coverage as a code quality metric, we see it primarily as a developer
productivity metric. When a developer makes a change to the codebase, presumably it's a *solution* to
some problem. Our unit-test suite is how we encode the set of *problems* the codebase solves. Running
the test suite should indicate that your change didn't *infringe* on anyone else's solutions. Adding a
test *protects* your solution from future changes. Say you don't understand why a line of code exists,
try deleting it and running the unit-tests. The nearest test failure should tell you what problem
was solved by that code. If no test fails, go ahead and submit a Pull Request that asks, "what
problem is solved by this code?" On the other hand, if a test does fail and you can think of a
better way to solve the same problem, a Pull Request with your solution would most certainly be
welcome! Likewise, if rewriting a test can better communicate what code it's protecting, please
send us that patch!

15

build.rs

Normal file

@@ -0,0 +1,15 @@

use std::env;

fn main() {
    println!("cargo:rustc-link-search=native=.");
    if !env::var("CARGO_FEATURE_CUDA").is_err() {
        println!("cargo:rustc-link-lib=static=cuda_verify_ed25519");
        println!("cargo:rustc-link-search=native=/usr/local/cuda/lib64");
        println!("cargo:rustc-link-lib=dylib=cudart");
        println!("cargo:rustc-link-lib=dylib=cuda");
        println!("cargo:rustc-link-lib=dylib=cudadevrt");
    }
    if !env::var("CARGO_FEATURE_ERASURE").is_err() {
        println!("cargo:rustc-link-lib=dylib=Jerasure");
    }
}

15

doc/consensus.msc

Normal file

@@ -0,0 +1,15 @@

msc {
  client,leader,verifier_a,verifier_b,verifier_c;

  client=>leader [ label = "SUBMIT" ] ;
  leader=>client [ label = "CONFIRMED" ] ;
  leader=>verifier_a [ label = "CONFIRMED" ] ;
  leader=>verifier_b [ label = "CONFIRMED" ] ;
  leader=>verifier_c [ label = "CONFIRMED" ] ;
  verifier_a=>leader [ label = "VERIFIED" ] ;
  verifier_b=>leader [ label = "VERIFIED" ] ;
  leader=>client [ label = "FINALIZED" ] ;
  leader=>verifier_a [ label = "FINALIZED" ] ;
  leader=>verifier_b [ label = "FINALIZED" ] ;
  leader=>verifier_c [ label = "FINALIZED" ] ;
}

65

doc/historian.md

Normal file

@ -0,0 +1,65 @@

|

||||

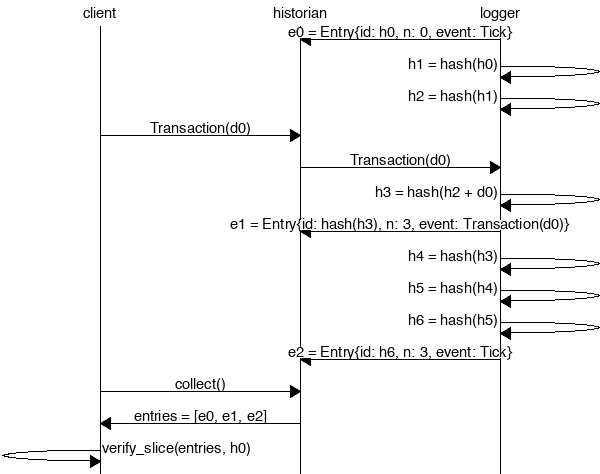

The Historian

|

||||

===

|

||||

|

||||

Create a *Historian* and send it *events* to generate an *event log*, where each *entry*

|

||||

is tagged with the historian's latest *hash*. Then ensure the order of events was not tampered

|

||||

with by verifying each entry's hash can be generated from the hash in the previous entry:

|

||||

|

||||

|

||||

|

||||

```rust

|

||||

extern crate solana;

|

||||

|

||||

use solana::historian::Historian;

|

||||

use solana::ledger::{Block, Entry, Hash};

|

||||

use solana::event::{generate_keypair, get_pubkey, sign_claim_data, Event};

|

||||

use std::thread::sleep;

|

||||

use std::time::Duration;

|

||||

use std::sync::mpsc::SendError;

|

||||

|

||||

fn create_ledger(hist: &Historian<Hash>) -> Result<(), SendError<Event<Hash>>> {

|

||||

sleep(Duration::from_millis(15));

|

||||

let tokens = 42;

|

||||

let keypair = generate_keypair();

|

||||

let event0 = Event::new_claim(get_pubkey(&keypair), tokens, sign_claim_data(&tokens, &keypair));

|

||||

hist.sender.send(event0)?;

|

||||

sleep(Duration::from_millis(10));

|

||||

Ok(())

|

||||

}

|

||||

|

||||

fn main() {

|

||||

let seed = Hash::default();

|

||||

let hist = Historian::new(&seed, Some(10));

|

||||

create_ledger(&hist).expect("send error");

|

||||

drop(hist.sender);

|

||||

let entries: Vec<Entry<Hash>> = hist.receiver.iter().collect();

|

||||

for entry in &entries {

|

||||

println!("{:?}", entry);

|

||||

}

|

||||

// Proof-of-History: Verify the historian learned about the events

|

||||

// in the same order they appear in the vector.

|

||||

assert!(entries[..].verify(&seed));

|

||||

}

|

||||

```

|

||||

|

||||

Running the program should produce a ledger similar to:

|

||||

|

||||

```rust

|

||||

Entry { num_hashes: 0, id: [0, ...], event: Tick }

|

||||

Entry { num_hashes: 3, id: [67, ...], event: Transaction { tokens: 42 } }

|

||||

Entry { num_hashes: 3, id: [123, ...], event: Tick }

|

||||

```

|

||||

|

||||

Proof-of-History

|

||||

---

|

||||

|

||||

Take note of the last line:

|

||||

|

||||

```rust

|

||||

assert!(entries[..].verify(&seed));

|

||||

```

|

||||

|

||||

[It's a proof!](https://en.wikipedia.org/wiki/Curry–Howard_correspondence) For each entry returned by the

|

||||

historian, we can verify that `id` is the result of applying a sha256 hash to the previous `id`

|

||||

exactly `num_hashes` times, and then hashing then event data on top of that. Because the event data is

|

||||

included in the hash, the events cannot be reordered without regenerating all the hashes.
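The verification rule just described can be sketched without any external crates. This is a simplified illustration, not the crate's implementation: ids are 64-bit values rather than SHA-256 digests, the standard library's `DefaultHasher` (which is not cryptographically secure) stands in for sha256, a tick is modelled as an entry with no event data, and the names `hash_step`, `next_id`, `create_entry` and this `Entry` layout are all hypothetical.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

/// Stand-in for sha256 (assumption: NOT cryptographic, illustration only).
/// Hashes the previous id, optionally mixing in event data.
fn hash_step(prev: u64, data: Option<&str>) -> u64 {
    let mut hasher = DefaultHasher::new();
    prev.hash(&mut hasher);
    if let Some(d) = data {
        d.hash(&mut hasher);
    }
    hasher.finish()
}

/// Simplified ledger entry; `event` is `None` for a tick.
struct Entry {
    num_hashes: u64,
    id: u64,
    event: Option<String>,
}

/// Hash `prev` exactly `num_hashes` times, then hash any event data on top.
fn next_id(prev: u64, num_hashes: u64, event: Option<&str>) -> u64 {
    let mut id = prev;
    for _ in 0..num_hashes {
        id = hash_step(id, None);
    }
    match event {
        Some(data) => hash_step(id, Some(data)),
        None => id,
    }
}

/// Build the entry that follows `prev` in the chain.
fn create_entry(prev: u64, num_hashes: u64, event: Option<&str>) -> Entry {
    Entry {
        num_hashes,
        id: next_id(prev, num_hashes, event),
        event: event.map(String::from),
    }
}

/// Check that each entry's id can be regenerated from the one before it,
/// starting from `seed`.
fn verify(entries: &[Entry], seed: u64) -> bool {
    let mut prev = seed;
    for entry in entries {
        if entry.id != next_id(prev, entry.num_hashes, entry.event.as_deref()) {
            return false;
        }
        prev = entry.id;
    }
    true
}
```

Building three entries with `create_entry`, each seeded with the previous entry's `id`, gives a slice that `verify` accepts. Swapping two entries or editing an event's data makes the recomputed ids disagree with the stored ones, which is the reordering protection the text describes.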

18

doc/historian.msc

Normal file

@@ -0,0 +1,18 @@

msc {
  client,historian,recorder;

  recorder=>historian [ label = "e0 = Entry{id: h0, n: 0, event: Tick}" ] ;
  recorder=>recorder [ label = "h1 = hash(h0)" ] ;
  recorder=>recorder [ label = "h2 = hash(h1)" ] ;
  client=>historian [ label = "Transaction(d0)" ] ;
  historian=>recorder [ label = "Transaction(d0)" ] ;
  recorder=>recorder [ label = "h3 = hash(h2 + d0)" ] ;
  recorder=>historian [ label = "e1 = Entry{id: hash(h3), n: 3, event: Transaction(d0)}" ] ;
  recorder=>recorder [ label = "h4 = hash(h3)" ] ;
  recorder=>recorder [ label = "h5 = hash(h4)" ] ;
  recorder=>recorder [ label = "h6 = hash(h5)" ] ;
  recorder=>historian [ label = "e2 = Entry{id: h6, n: 3, event: Tick}" ] ;
  client=>historian [ label = "collect()" ] ;
  historian=>client [ label = "entries = [e0, e1, e2]" ] ;
  client=>client [ label = "entries.verify(h0)" ] ;
}

526

src/accountant.rs

Normal file

@@ -0,0 +1,526 @@

//! The `accountant` module tracks client balances, and the progress of pending
//! transactions. It offers a high-level public API that signs transactions
//! on behalf of the caller, and a private low-level API for when they have
//! already been signed and verified.

extern crate libc;

use chrono::prelude::*;
use event::Event;
use hash::Hash;
use mint::Mint;
use plan::{Payment, Plan, Witness};
use rayon::prelude::*;
use signature::{KeyPair, PublicKey, Signature};
use std::collections::hash_map::Entry::Occupied;
use std::collections::{HashMap, HashSet, VecDeque};
use std::result;
use std::sync::RwLock;
use transaction::Transaction;

pub const MAX_ENTRY_IDS: usize = 1024 * 4;

#[derive(Debug, PartialEq, Eq)]
pub enum AccountingError {
    AccountNotFound,
    InsufficientFunds,
    InvalidTransferSignature,
}

pub type Result<T> = result::Result<T, AccountingError>;

/// Commit funds to the 'to' party.
fn apply_payment(balances: &RwLock<HashMap<PublicKey, RwLock<i64>>>, payment: &Payment) {
    if balances.read().unwrap().contains_key(&payment.to) {
        let bals = balances.read().unwrap();
        *bals[&payment.to].write().unwrap() += payment.tokens;
    } else {
        let mut bals = balances.write().unwrap();
        bals.insert(payment.to, RwLock::new(payment.tokens));
    }
}

pub struct Accountant {
    balances: RwLock<HashMap<PublicKey, RwLock<i64>>>,
    pending: RwLock<HashMap<Signature, Plan>>,
    last_ids: RwLock<VecDeque<(Hash, RwLock<HashSet<Signature>>)>>,
    time_sources: RwLock<HashSet<PublicKey>>,
    last_time: RwLock<DateTime<Utc>>,
}

|

||||

impl Accountant {

|

||||

/// Create an Accountant using a deposit.

|

||||

pub fn new_from_deposit(deposit: &Payment) -> Self {

|

||||

let balances = RwLock::new(HashMap::new());

|

||||

apply_payment(&balances, deposit);

|

||||

Accountant {

|

||||

balances,

|

||||

pending: RwLock::new(HashMap::new()),

|

||||

last_ids: RwLock::new(VecDeque::new()),

|

||||

time_sources: RwLock::new(HashSet::new()),

|

||||

last_time: RwLock::new(Utc.timestamp(0, 0)),

|

||||

}

|

||||

}

|

||||

|

||||

/// Create an Accountant with only a Mint. Typically used by unit tests.

|

||||

pub fn new(mint: &Mint) -> Self {

|

||||

let deposit = Payment {

|

||||

to: mint.pubkey(),

|

||||

tokens: mint.tokens,

|

||||

};

|

||||

let acc = Self::new_from_deposit(&deposit);

|

||||

acc.register_entry_id(&mint.last_id());

|

||||

acc

|

||||

}

|

||||

|

||||

fn reserve_signature(signatures: &RwLock<HashSet<Signature>>, sig: &Signature) -> bool {

|

||||

if signatures.read().unwrap().contains(sig) {

|

||||

return false;

|

||||

}

|

||||

signatures.write().unwrap().insert(*sig);

|

||||

true

|

||||

}

|

||||

|

||||

fn forget_signature(signatures: &RwLock<HashSet<Signature>>, sig: &Signature) -> bool {

|

||||

signatures.write().unwrap().remove(sig)

|

||||

}

|

||||

|

||||

fn forget_signature_with_last_id(&self, sig: &Signature, last_id: &Hash) -> bool {

|

||||

if let Some(entry) = self.last_ids

|

||||

.read()

|

||||

.unwrap()

|

||||

.iter()

|

||||

.rev()

|

||||

.find(|x| x.0 == *last_id)

|

||||

{

|

||||

return Self::forget_signature(&entry.1, sig);

|

||||

}

|

||||

return false;

|

||||

}

|

||||

|

||||

fn reserve_signature_with_last_id(&self, sig: &Signature, last_id: &Hash) -> bool {

|

||||

if let Some(entry) = self.last_ids

|

||||

.read()

|

||||

.unwrap()

|

||||

.iter()

|

||||

.rev()

|

||||

.find(|x| x.0 == *last_id)

|

||||

{

|

||||

return Self::reserve_signature(&entry.1, sig);

|

||||

}

|

||||

false

|

||||

}

    /// Tell the accountant which Entry IDs exist on the ledger. This function
    /// assumes subsequent calls correspond to later entries, and will boot
    /// the oldest ones once its internal cache is full. Once booted, the
    /// accountant will reject transactions using that `last_id`.
    pub fn register_entry_id(&self, last_id: &Hash) {
        let mut last_ids = self.last_ids.write().unwrap();
        if last_ids.len() >= MAX_ENTRY_IDS {
            last_ids.pop_front();
        }
        last_ids.push_back((*last_id, RwLock::new(HashSet::new())));
    }

    /// Deduct tokens from the 'from' address if the account has sufficient
    /// funds and the transaction isn't a duplicate.
    pub fn process_verified_transaction_debits(&self, tr: &Transaction) -> Result<()> {
        let bals = self.balances.read().unwrap();

        // Hold a write lock before the condition check, so that a debit can't occur
        // between checking the balance and the withdrawal.
        let option = bals.get(&tr.from);
        if option.is_none() {
            return Err(AccountingError::AccountNotFound);
        }
        let mut bal = option.unwrap().write().unwrap();

        if !self.reserve_signature_with_last_id(&tr.sig, &tr.data.last_id) {
            return Err(AccountingError::InvalidTransferSignature);
        }

        if *bal < tr.data.tokens {
            self.forget_signature_with_last_id(&tr.sig, &tr.data.last_id);
            return Err(AccountingError::InsufficientFunds);
        }

        *bal -= tr.data.tokens;

        Ok(())
    }

    pub fn process_verified_transaction_credits(&self, tr: &Transaction) {
        let mut plan = tr.data.plan.clone();
        plan.apply_witness(&Witness::Timestamp(*self.last_time.read().unwrap()));

        if let Some(ref payment) = plan.final_payment() {
            apply_payment(&self.balances, payment);
        } else {
            let mut pending = self.pending.write().unwrap();
            pending.insert(tr.sig, plan);
        }
    }

    /// Process a Transaction that has already been verified.
    pub fn process_verified_transaction(&self, tr: &Transaction) -> Result<()> {
        self.process_verified_transaction_debits(tr)?;
        self.process_verified_transaction_credits(tr);
        Ok(())
    }

    /// Process a batch of verified transactions.
    pub fn process_verified_transactions(&self, trs: Vec<Transaction>) -> Vec<Result<Transaction>> {
        // Run all debits first to filter out any transactions that can't be processed
        // in parallel deterministically.
        let results: Vec<_> = trs.into_par_iter()
            .map(|tr| self.process_verified_transaction_debits(&tr).map(|_| tr))
            .collect(); // Calling collect() here forces all debits to complete before moving on.

        results
            .into_par_iter()
            .map(|result| {
                result.map(|tr| {
                    self.process_verified_transaction_credits(&tr);
                    tr
                })
            })
            .collect()
    }

    fn partition_events(events: Vec<Event>) -> (Vec<Transaction>, Vec<Event>) {
        let mut trs = vec![];
        let mut rest = vec![];
        for event in events {
            match event {
                Event::Transaction(tr) => trs.push(tr),
                _ => rest.push(event),
            }
        }
        (trs, rest)
    }

    pub fn process_verified_events(&self, events: Vec<Event>) -> Result<()> {
        let (trs, rest) = Self::partition_events(events);
        self.process_verified_transactions(trs);
        for event in rest {
            self.process_verified_event(&event)?;
        }
        Ok(())
    }

    /// Process a Witness Signature that has already been verified.
    fn process_verified_sig(&self, from: PublicKey, tx_sig: Signature) -> Result<()> {
        if let Occupied(mut e) = self.pending.write().unwrap().entry(tx_sig) {
            e.get_mut().apply_witness(&Witness::Signature(from));
            if let Some(ref payment) = e.get().final_payment() {
                apply_payment(&self.balances, payment);
                e.remove_entry();
            }
        };

        Ok(())
    }

    /// Process a Witness Timestamp that has already been verified.
    fn process_verified_timestamp(&self, from: PublicKey, dt: DateTime<Utc>) -> Result<()> {
        // If this is the first timestamp we've seen, it probably came from the genesis block,
        // so we'll trust it.
        if *self.last_time.read().unwrap() == Utc.timestamp(0, 0) {
            self.time_sources.write().unwrap().insert(from);
        }

        if self.time_sources.read().unwrap().contains(&from) {
            if dt > *self.last_time.read().unwrap() {
                *self.last_time.write().unwrap() = dt;
            }
        } else {
            return Ok(());
        }

        // Check to see if any timelocked transactions can be completed.
        let mut completed = vec![];

        // Hold 'pending' write lock until the end of this function. Otherwise another thread can
        // double-spend if it enters before the modified plan is removed from 'pending'.
        let mut pending = self.pending.write().unwrap();
        for (key, plan) in pending.iter_mut() {
            plan.apply_witness(&Witness::Timestamp(*self.last_time.read().unwrap()));
            if let Some(ref payment) = plan.final_payment() {
                apply_payment(&self.balances, payment);
                completed.push(key.clone());
            }
        }

        for key in completed {
            pending.remove(&key);
        }

        Ok(())
    }

    /// Process a Transaction or Witness that has already been verified.
    pub fn process_verified_event(&self, event: &Event) -> Result<()> {
        match *event {
            Event::Transaction(ref tr) => self.process_verified_transaction(tr),
            Event::Signature { from, tx_sig, .. } => self.process_verified_sig(from, tx_sig),
            Event::Timestamp { from, dt, .. } => self.process_verified_timestamp(from, dt),
        }
    }

    /// Create, sign, and process a Transaction from `keypair` to `to` of
    /// `n` tokens where `last_id` is the last Entry ID observed by the client.
    pub fn transfer(
        &self,
        n: i64,
        keypair: &KeyPair,
        to: PublicKey,
        last_id: Hash,
    ) -> Result<Signature> {
        let tr = Transaction::new(keypair, to, n, last_id);
        let sig = tr.sig;
        self.process_verified_transaction(&tr).map(|_| sig)
    }

    /// Create, sign, and process a postdated Transaction from `keypair`
    /// to `to` of `n` tokens on `dt` where `last_id` is the last Entry ID
    /// observed by the client.
    pub fn transfer_on_date(
        &self,
        n: i64,
        keypair: &KeyPair,
        to: PublicKey,
        dt: DateTime<Utc>,
        last_id: Hash,
    ) -> Result<Signature> {
        let tr = Transaction::new_on_date(keypair, to, dt, n, last_id);
        let sig = tr.sig;
        self.process_verified_transaction(&tr).map(|_| sig)
    }

    pub fn get_balance(&self, pubkey: &PublicKey) -> Option<i64> {
        let bals = self.balances.read().unwrap();
        bals.get(pubkey).map(|x| *x.read().unwrap())
    }
}
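The duplicate-detection scheme used by `register_entry_id`, `reserve_signature_with_last_id`, and `forget_signature_with_last_id` can be sketched in isolation. This is a simplified, single-threaded illustration rather than the code from this commit: `EntryId`, `Sig`, `SigWindow`, and `MAX_IDS` are hypothetical stand-ins for `Hash`, `Signature`, the accountant's `last_ids` field, and `MAX_ENTRY_IDS`, and the `RwLock`s are omitted.

```rust
use std::collections::{HashSet, VecDeque};

// Hypothetical stand-ins for the real `Hash` and `Signature` types.
type EntryId = u64;
type Sig = u64;

// Assumed tiny for illustration; the real `MAX_ENTRY_IDS` is defined elsewhere.
const MAX_IDS: usize = 3;

/// A bounded window of recent entry IDs, each with the set of
/// signatures already seen against that ID.
struct SigWindow {
    last_ids: VecDeque<(EntryId, HashSet<Sig>)>,
}

impl SigWindow {
    fn new() -> Self {
        SigWindow { last_ids: VecDeque::new() }
    }

    /// Register a newer entry ID, evicting the oldest one when full.
    fn register_entry_id(&mut self, id: EntryId) {
        if self.last_ids.len() >= MAX_IDS {
            self.last_ids.pop_front();
        }
        self.last_ids.push_back((id, HashSet::new()));
    }

    /// Returns true only if `id` is still in the window and `sig`
    /// has not been reserved against it before.
    fn reserve(&mut self, sig: Sig, id: EntryId) -> bool {
        match self.last_ids.iter_mut().rev().find(|e| e.0 == id) {
            Some(entry) => entry.1.insert(sig),
            None => false,
        }
    }

    /// Undo a reservation, e.g. after a debit fails for lack of funds.
    fn forget(&mut self, sig: Sig, id: EntryId) -> bool {
        match self.last_ids.iter_mut().rev().find(|e| e.0 == id) {
            Some(entry) => entry.1.remove(&sig),
            None => false,
        }
    }
}
```

Searching from the back with `rev()` finds recent IDs first, which is presumably the common case for clients that use the latest `last_id`. Once an ID has been evicted, every reservation against it fails, which is how stale transactions end up rejected.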

#[cfg(test)]
mod tests {
    use super::*;
    use bincode::serialize;
    use hash::hash;
    use signature::KeyPairUtil;

    #[test]
    fn test_accountant() {
        let alice = Mint::new(10_000);
        let bob_pubkey = KeyPair::new().pubkey();
        let acc = Accountant::new(&alice);
        acc.transfer(1_000, &alice.keypair(), bob_pubkey, alice.last_id())
            .unwrap();
        assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 1_000);

        acc.transfer(500, &alice.keypair(), bob_pubkey, alice.last_id())
            .unwrap();
        assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 1_500);
    }

    #[test]
    fn test_account_not_found() {
        let mint = Mint::new(1);
        let acc = Accountant::new(&mint);
        assert_eq!(
            acc.transfer(1, &KeyPair::new(), mint.pubkey(), mint.last_id()),
            Err(AccountingError::AccountNotFound)
        );
    }

    #[test]
    fn test_invalid_transfer() {
        let alice = Mint::new(11_000);
        let acc = Accountant::new(&alice);
        let bob_pubkey = KeyPair::new().pubkey();
        acc.transfer(1_000, &alice.keypair(), bob_pubkey, alice.last_id())
            .unwrap();
        assert_eq!(
            acc.transfer(10_001, &alice.keypair(), bob_pubkey, alice.last_id()),
            Err(AccountingError::InsufficientFunds)
        );

        let alice_pubkey = alice.keypair().pubkey();
        assert_eq!(acc.get_balance(&alice_pubkey).unwrap(), 10_000);
        assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 1_000);
    }

    #[test]
    fn test_transfer_to_newb() {
        let alice = Mint::new(10_000);
        let acc = Accountant::new(&alice);
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        acc.transfer(500, &alice_keypair, bob_pubkey, alice.last_id())
            .unwrap();
        assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 500);
    }

    #[test]
    fn test_transfer_on_date() {
        let alice = Mint::new(1);
        let acc = Accountant::new(&alice);
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        let dt = Utc::now();
        acc.transfer_on_date(1, &alice_keypair, bob_pubkey, dt, alice.last_id())
            .unwrap();

        // Alice's balance will be zero because all funds are locked up.
        assert_eq!(acc.get_balance(&alice.pubkey()), Some(0));

        // Bob's balance will be None because the funds have not been
        // sent.
        assert_eq!(acc.get_balance(&bob_pubkey), None);

        // Now, acknowledge that the time in the condition has occurred and
        // that Bob's funds are now available.
        acc.process_verified_timestamp(alice.pubkey(), dt).unwrap();
        assert_eq!(acc.get_balance(&bob_pubkey), Some(1));

        acc.process_verified_timestamp(alice.pubkey(), dt).unwrap(); // <-- Attack! Attempt to process completed transaction.
        assert_ne!(acc.get_balance(&bob_pubkey), Some(2));
    }

    #[test]
    fn test_transfer_after_date() {
        let alice = Mint::new(1);
        let acc = Accountant::new(&alice);
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        let dt = Utc::now();
        acc.process_verified_timestamp(alice.pubkey(), dt).unwrap();

        // The accountant's clock has already reached `dt`, so this transfer
        // should be processed immediately.
        acc.transfer_on_date(1, &alice_keypair, bob_pubkey, dt, alice.last_id())
            .unwrap();

        assert_eq!(acc.get_balance(&alice.pubkey()), Some(0));
        assert_eq!(acc.get_balance(&bob_pubkey), Some(1));
    }

    #[test]
    fn test_cancel_transfer() {
        let alice = Mint::new(1);
        let acc = Accountant::new(&alice);
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        let dt = Utc::now();
        let sig = acc.transfer_on_date(1, &alice_keypair, bob_pubkey, dt, alice.last_id())
            .unwrap();

        // Alice's balance will be zero because all funds are locked up.
        assert_eq!(acc.get_balance(&alice.pubkey()), Some(0));

        // Bob's balance will be None because the funds have not been
        // sent.
        assert_eq!(acc.get_balance(&bob_pubkey), None);

        // Now, cancel the transaction. Alice gets her funds back, Bob never sees them.
        acc.process_verified_sig(alice.pubkey(), sig).unwrap();
        assert_eq!(acc.get_balance(&alice.pubkey()), Some(1));
        assert_eq!(acc.get_balance(&bob_pubkey), None);

        acc.process_verified_sig(alice.pubkey(), sig).unwrap(); // <-- Attack! Attempt to cancel completed transaction.
        assert_ne!(acc.get_balance(&alice.pubkey()), Some(2));
    }

    #[test]
    fn test_duplicate_event_signature() {
        let alice = Mint::new(1);
        let acc = Accountant::new(&alice);
        let sig = Signature::default();
        assert!(acc.reserve_signature_with_last_id(&sig, &alice.last_id()));
        assert!(!acc.reserve_signature_with_last_id(&sig, &alice.last_id()));
    }

    #[test]
    fn test_forget_signature() {
        let alice = Mint::new(1);
        let acc = Accountant::new(&alice);
        let sig = Signature::default();
        acc.reserve_signature_with_last_id(&sig, &alice.last_id());
        assert!(acc.forget_signature_with_last_id(&sig, &alice.last_id()));
        assert!(!acc.forget_signature_with_last_id(&sig, &alice.last_id()));
    }

    #[test]
    fn test_max_entry_ids() {
        let alice = Mint::new(1);
        let acc = Accountant::new(&alice);
        let sig = Signature::default();
        for i in 0..MAX_ENTRY_IDS {
            let last_id = hash(&serialize(&i).unwrap()); // Unique hash
            acc.register_entry_id(&last_id);
        }
        // Assert we're no longer able to use the oldest entry ID.
        assert!(!acc.reserve_signature_with_last_id(&sig, &alice.last_id()));
    }

    #[test]
    fn test_debits_before_credits() {
        let mint = Mint::new(2);
        let acc = Accountant::new(&mint);
        let alice = KeyPair::new();
        let tr0 = Transaction::new(&mint.keypair(), alice.pubkey(), 2, mint.last_id());
        let tr1 = Transaction::new(&alice, mint.pubkey(), 1, mint.last_id());
        let trs = vec![tr0, tr1];
        assert!(acc.process_verified_transactions(trs)[1].is_err());
    }
}
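The behavior that `test_debits_before_credits` checks can be illustrated with a small two-phase sketch. It is a sequential simplification under stated assumptions: the commit itself runs both phases through Rayon parallel iterators, whereas the hypothetical `Transfer`, `TransferError`, and `process_batch` below use a plain `HashMap` with string account names and no locking or signatures.

```rust
use std::collections::HashMap;

// Hypothetical, simplified transfer record (no signatures or plans).
#[derive(Debug, Clone, PartialEq)]
struct Transfer {
    from: &'static str,
    to: &'static str,
    tokens: i64,
}

#[derive(Debug, PartialEq)]
enum TransferError {
    AccountNotFound,
    InsufficientFunds,
}

/// Apply every debit in the batch first, then every credit.
/// A transfer cannot spend tokens that are credited within the same batch.
fn process_batch(
    balances: &mut HashMap<&'static str, i64>,
    batch: Vec<Transfer>,
) -> Vec<Result<Transfer, TransferError>> {
    // Phase 1: debits. Collecting forces all debits to finish before credits.
    let debited: Vec<Result<Transfer, TransferError>> = batch
        .into_iter()
        .map(|t| match balances.get_mut(t.from) {
            None => Err(TransferError::AccountNotFound),
            Some(bal) if *bal < t.tokens => Err(TransferError::InsufficientFunds),
            Some(bal) => {
                *bal -= t.tokens;
                Ok(t)
            }
        })
        .collect();

    // Phase 2: credits, only for transfers whose debit succeeded.
    debited
        .into_iter()
        .map(|result| {
            result.map(|t| {
                *balances.entry(t.to).or_insert(0) += t.tokens;
                t
            })
        })
        .collect()
}
```

With a batch of `mint -> alice` followed by `alice -> mint`, the second transfer fails because Alice's account does not exist until the credit phase. That outcome does not depend on the order in which the debits run, which is what makes parallel processing deterministic.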

#[cfg(all(feature = "unstable", test))]
mod bench {
    extern crate test;
    use self::test::Bencher;
    use accountant::*;
    use bincode::serialize;
    use hash::hash;
    use signature::KeyPairUtil;

    #[bench]
    fn process_verified_event_bench(bencher: &mut Bencher) {
        let mint = Mint::new(100_000_000);
        let acc = Accountant::new(&mint);
        // Create transactions between unrelated parties.
        let transactions: Vec<_> = (0..4096)
            .into_par_iter()
            .map(|i| {
                // Seed the 'from' account.
                let rando0 = KeyPair::new();
                let tr = Transaction::new(&mint.keypair(), rando0.pubkey(), 1_000, mint.last_id());
                acc.process_verified_transaction(&tr).unwrap();

                // Seed the 'to' account and a cell for its signature.
                let last_id = hash(&serialize(&i).unwrap()); // Unique hash
                acc.register_entry_id(&last_id);

                let rando1 = KeyPair::new();
                let tr = Transaction::new(&rando0, rando1.pubkey(), 1, last_id);
                acc.process_verified_transaction(&tr).unwrap();

                // Finally, return a transaction that's unique
                Transaction::new(&rando0, rando1.pubkey(), 1, last_id)
            })
            .collect();
        bencher.iter(|| {
            // Since the benchmarker runs this multiple times, we need to clear the signatures.
            for sigs in acc.last_ids.read().unwrap().iter() {
                sigs.1.write().unwrap().clear();
            }

            assert!(
                acc.process_verified_transactions(transactions.clone())
                    .iter()
                    .all(|x| x.is_ok())
            );
        });
    }
}
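The timestamp handling in `process_verified_timestamp` earlier in this file combines three ideas: trust the first time source seen, only move the clock forward, and release pending payments whose date has arrived. The following is a rough single-threaded sketch of that flow. `Ledger`, `Pending`, the integer timestamps, and the string keys are all hypothetical simplifications of the real `Accountant`, `Plan`, `DateTime<Utc>`, and `PublicKey`.

```rust
use std::collections::{HashMap, HashSet};

/// A payment that becomes final once the ledger clock reaches `due`.
struct Pending {
    due: u64,
    to: &'static str,
    tokens: i64,
}

struct Ledger {
    last_time: u64, // 0 stands in for "no timestamp seen yet"
    time_sources: HashSet<&'static str>,
    pending: HashMap<u64, Pending>, // keyed by a hypothetical transaction id
    balances: HashMap<&'static str, i64>,
}

impl Ledger {
    fn new() -> Self {
        Ledger {
            last_time: 0,
            time_sources: HashSet::new(),
            pending: HashMap::new(),
            balances: HashMap::new(),
        }
    }

    fn process_timestamp(&mut self, from: &'static str, t: u64) {
        // The first timestamp ever seen is trusted and its sender remembered.
        if self.last_time == 0 {
            self.time_sources.insert(from);
        }
        // Timestamps from unknown sources are ignored.
        if !self.time_sources.contains(from) {
            return;
        }
        // The clock never moves backwards.
        if t > self.last_time {
            self.last_time = t;
        }
        // Collect the keys of payments that are now due, then remove and
        // pay each one exactly once.
        let now = self.last_time;
        let completed: Vec<u64> = self.pending
            .iter()
            .filter(|(_, p)| p.due <= now)
            .map(|(k, _)| *k)
            .collect();
        for key in completed {
            if let Some(p) = self.pending.remove(&key) {
                *self.balances.entry(p.to).or_insert(0) += p.tokens;
            }
        }
    }
}
```

Because a completed payment is removed from `pending` in the same call that pays it, replaying the same timestamp cannot pay it twice. This is the property the "Attack!" lines in `test_transfer_on_date` exercise.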

810

src/accountant_skel.rs

Normal file

@ -0,0 +1,810 @@

//! The `accountant_skel` module is a microservice that exposes the high-level
//! Accountant API to the network. Its message encoding is currently
//! in flux. Clients should use AccountantStub to interact with it.

use accountant::Accountant;
use bincode::{deserialize, serialize};
use ecdsa;
use entry::Entry;
use event::Event;
use hash::Hash;
use historian::Historian;
use packet;
use packet::SharedPackets;
use rayon::prelude::*;
use recorder::Signal;
use result::Result;
use serde_json;
use signature::PublicKey;
use std::cmp::max;
use std::collections::VecDeque;
use std::io::Write;
use std::net::{SocketAddr, UdpSocket};
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::mpsc::{channel, Receiver, Sender};
use std::sync::{Arc, Mutex, RwLock};
use std::thread::{spawn, JoinHandle};
use std::time::Duration;
use streamer;
use transaction::Transaction;

use subscribers;

pub struct AccountantSkel<W: Write + Send + 'static> {
    acc: Accountant,
    last_id: Hash,
    writer: W,
    historian: Historian,
    entry_info_subscribers: Vec<SocketAddr>,
}

#[cfg_attr(feature = "cargo-clippy", allow(large_enum_variant))]
#[derive(Serialize, Deserialize, Debug, Clone)]
pub enum Request {
    Transaction(Transaction),
    GetBalance { key: PublicKey },
    GetLastId,
    Subscribe { subscriptions: Vec<Subscription> },
}

#[derive(Serialize, Deserialize, Debug, Clone)]
pub enum Subscription {
    EntryInfo,
}

#[derive(Serialize, Deserialize, Debug, Clone)]
pub struct EntryInfo {
    pub id: Hash,
    pub num_hashes: u64,
    pub num_events: u64,
}

impl Request {
    /// Verify the request is valid.
    pub fn verify(&self) -> bool {
        match *self {
            Request::Transaction(ref tr) => tr.verify_plan(),
            _ => true,
        }
    }
}

#[derive(Serialize, Deserialize, Debug)]
pub enum Response {
    Balance { key: PublicKey, val: Option<i64> },
    EntryInfo(EntryInfo),
    LastId { id: Hash },
}

impl<W: Write + Send + 'static> AccountantSkel<W> {
    /// Create a new AccountantSkel that wraps the given Accountant.
    pub fn new(acc: Accountant, last_id: Hash, writer: W, historian: Historian) -> Self {
        AccountantSkel {
            acc,
            last_id,
            writer,
            historian,
            entry_info_subscribers: vec![],
        }
    }

    fn notify_entry_info_subscribers(&mut self, entry: &Entry) {
        // TODO: No need to bind().
        let socket = UdpSocket::bind("127.0.0.1:0").expect("bind");

        for addr in &self.entry_info_subscribers {
            let entry_info = EntryInfo {
                id: entry.id,
                num_hashes: entry.num_hashes,
                num_events: entry.events.len() as u64,
            };
            let data = serialize(&Response::EntryInfo(entry_info)).expect("serialize EntryInfo");
            let _res = socket.send_to(&data, addr);
        }
    }

    /// Process any Entry items that have been published by the Historian.
    pub fn sync(&mut self) -> Hash {
        while let Ok(entry) = self.historian.receiver.try_recv() {
            self.last_id = entry.id;
            self.acc.register_entry_id(&self.last_id);
            writeln!(self.writer, "{}", serde_json::to_string(&entry).unwrap()).unwrap();
            self.notify_entry_info_subscribers(&entry);
        }
        self.last_id
    }

    /// Process Request items sent by clients.
    pub fn process_request(
        &mut self,
        msg: Request,
        rsp_addr: SocketAddr,
    ) -> Option<(Response, SocketAddr)> {
        match msg {
            Request::GetBalance { key } => {
                let val = self.acc.get_balance(&key);
                Some((Response::Balance { key, val }, rsp_addr))
            }
            Request::GetLastId => Some((Response::LastId { id: self.sync() }, rsp_addr)),
            Request::Transaction(_) => unreachable!(),
            Request::Subscribe { subscriptions } => {
                for subscription in subscriptions {
                    match subscription {
                        Subscription::EntryInfo => self.entry_info_subscribers.push(rsp_addr),
                    }
                }
                None
            }
        }
    }

    fn recv_batch(recvr: &streamer::PacketReceiver) -> Result<Vec<SharedPackets>> {
        let timer = Duration::new(1, 0);
        let msgs = recvr.recv_timeout(timer)?;
        trace!("got msgs");
        let mut batch = vec![msgs];
        while let Ok(more) = recvr.try_recv() {
            trace!("got more msgs");
            batch.push(more);
        }
        info!("batch len {}", batch.len());
        Ok(batch)
    }

    fn verify_batch(batch: Vec<SharedPackets>) -> Vec<Vec<(SharedPackets, Vec<u8>)>> {
        let chunk_size = max(1, (batch.len() + 3) / 4);
        let batches: Vec<_> = batch.chunks(chunk_size).map(|x| x.to_vec()).collect();
        batches
            .into_par_iter()
            .map(|batch| {
                let r = ecdsa::ed25519_verify(&batch);
                batch.into_iter().zip(r).collect()
            })
            .collect()
    }
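The chunk-size arithmetic in `verify_batch` is a ceiling division by four with a floor of one, so a batch is split into at most four roughly equal chunks. A standalone sketch follows. `split_into_chunks` and its `parts` parameter are hypothetical names introduced here; `parts` must be greater than zero, and the diff hard-codes the equivalent of `parts = 4`.

```rust
use std::cmp::max;

/// Split `batch` into at most `parts` chunks of near-equal size.
/// `(len + parts - 1) / parts` is integer ceiling division; `max(1, ..)`
/// guards against a zero chunk size, which `chunks` would panic on.
fn split_into_chunks<T: Clone>(batch: &[T], parts: usize) -> Vec<Vec<T>> {
    let chunk_size = max(1, (batch.len() + parts - 1) / parts);
    batch.chunks(chunk_size).map(|c| c.to_vec()).collect()
}
```

For example, ten items with `parts = 4` give a chunk size of 3 and chunks of lengths 3, 3, 3, and 1, while an empty batch produces no chunks at all.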

    fn verifier(
        recvr: &streamer::PacketReceiver,
        sendr: &Sender<Vec<(SharedPackets, Vec<u8>)>>,
    ) -> Result<()> {
        let batch = Self::recv_batch(recvr)?;
        let verified_batches = Self::verify_batch(batch);
        for xs in verified_batches {
            sendr.send(xs)?;
        }
        Ok(())
    }

    pub fn deserialize_packets(p: &packet::Packets) -> Vec<Option<(Request, SocketAddr)>> {
        p.packets
            .par_iter()
            .map(|x| {
                deserialize(&x.data[0..x.meta.size])
                    .map(|req| (req, x.meta.addr()))
                    .ok()
            })
            .collect()
    }

    /// Split Request list into verified transactions and the rest
    fn partition_requests(
        req_vers: Vec<(Request, SocketAddr, u8)>,
    ) -> (Vec<Transaction>, Vec<(Request, SocketAddr)>) {
        let mut trs = vec![];
        let mut reqs = vec![];
        for (msg, rsp_addr, verify) in req_vers {
            match msg {
                Request::Transaction(tr) => {
                    if verify != 0 {
                        trs.push(tr);
                    }
                }
                _ => reqs.push((msg, rsp_addr)),
            }
        }
        (trs, reqs)
    }

    fn process_packets(
        &mut self,
        req_vers: Vec<(Request, SocketAddr, u8)>,
    ) -> Result<Vec<(Response, SocketAddr)>> {
        let (trs, reqs) = Self::partition_requests(req_vers);

        // Process the transactions in parallel and then log the successful ones.
        for result in self.acc.process_verified_transactions(trs) {
            if let Ok(tr) = result {
                self.historian
                    .sender
                    .send(Signal::Event(Event::Transaction(tr)))?;
            }
        }

        // Let validators know they should not attempt to process additional
        // transactions in parallel.
        self.historian.sender.send(Signal::Tick)?;

        // Process the remaining requests serially.
        let rsps = reqs.into_iter()
            .filter_map(|(req, rsp_addr)| self.process_request(req, rsp_addr))
            .collect();

        Ok(rsps)
    }

    fn serialize_response(
        resp: Response,
        rsp_addr: SocketAddr,
        blob_recycler: &packet::BlobRecycler,
    ) -> Result<packet::SharedBlob> {
        let blob = blob_recycler.allocate();
        {
            let mut b = blob.write().unwrap();
            let v = serialize(&resp)?;
            let len = v.len();
            b.data[..len].copy_from_slice(&v);
            b.meta.size = len;
            b.meta.set_addr(&rsp_addr);
        }
        Ok(blob)
    }

    fn serialize_responses(
        rsps: Vec<(Response, SocketAddr)>,
        blob_recycler: &packet::BlobRecycler,
    ) -> Result<VecDeque<packet::SharedBlob>> {
        let mut blobs = VecDeque::new();
        for (resp, rsp_addr) in rsps {
            blobs.push_back(Self::serialize_response(resp, rsp_addr, blob_recycler)?);
        }
        Ok(blobs)
    }

    fn process(
        obj: &Arc<Mutex<AccountantSkel<W>>>,
        verified_receiver: &Receiver<Vec<(SharedPackets, Vec<u8>)>>,
        blob_sender: &streamer::BlobSender,
        packet_recycler: &packet::PacketRecycler,
        blob_recycler: &packet::BlobRecycler,
    ) -> Result<()> {
        let timer = Duration::new(1, 0);
        let mms = verified_receiver.recv_timeout(timer)?;
        for (msgs, vers) in mms {
            let reqs = Self::deserialize_packets(&msgs.read().unwrap());
            let req_vers = reqs.into_iter()
                .zip(vers)
                .filter_map(|(req, ver)| req.map(|(msg, addr)| (msg, addr, ver)))
                .filter(|x| x.0.verify())
                .collect();
            let rsps = obj.lock().unwrap().process_packets(req_vers)?;
            let blobs = Self::serialize_responses(rsps, blob_recycler)?;
            if !blobs.is_empty() {
                // don't wake up the other side if there is nothing