Compare commits

275 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

| e683c34a89 | |||

| 54e4f75081 | |||

| 9f256f0929 | |||

| ef169a6652 | |||

| eaec25f940 | |||

| 6a87d8975c | |||

| b8cf5f9427 | |||

| 2f1e585446 | |||

| f9309b46aa | |||

| 22f5985f1b | |||

| c59c38e50e | |||

| 232e1bb8a3 | |||

| 1fbb34620c | |||

| 89f5b803c9 | |||

| 55179101cd | |||

| 132495b1fc | |||

| a03d7bf5cd | |||

| 3bf225e85f | |||

| cc2bb290c4 | |||

| 878ca8c5c5 | |||

| 4bc41d81ee | |||

| f6ca176fc8 | |||

| 0bec360a31 | |||

| 04f30710c5 | |||

| 98c0a2af87 | |||

| 9db42c1769 | |||

| 849bced602 | |||

| 27f29019ef | |||

| 8642a41f2b | |||

| bf902ef5bc | |||

| 7656b55c22 | |||

| 7d3d4b9443 | |||

| 15c093c5e2 | |||

| 116166f62d | |||

| 26b19dde75 | |||

| c8ddc68f13 | |||

| 7c9681007c | |||

| 13206e4976 | |||

| 2f18302d32 | |||

| ddb21d151d | |||

| c64a9fb456 | |||

| ee19b4f86e | |||

| 14239e584f | |||

| 112aecf6eb | |||

| c1783d77d7 | |||

| f089abb3c5 | |||

| 8e551f5e32 | |||

| 290960c3b5 | |||

| 62af09adbe | |||

| e39c0b34e5 | |||

| 8ad90807ee | |||

| 533b3170a7 | |||

| 7732f3f5fb | |||

| f52f02a434 | |||

| 4d7d4d673e | |||

| 9a437f0d38 | |||

| c385f8bb6e | |||

| fa44be2a9d | |||

| 117ab0c141 | |||

| 7488d19ae6 | |||

| 60524ad5f2 | |||

| fad7ff8bf0 | |||

| 383d445ba1 | |||

| 803dcb0800 | |||

| fde320e2f2 | |||

| 8ea97141ea | |||

| 9f232bac58 | |||

| 8295cc11c0 | |||

| 70f80adb9a | |||

| 9a7cac1e07 | |||

| c584a25ec9 | |||

| bff32bf7bc | |||

| d0e7450389 | |||

| 4da89ac8a9 | |||

| f7032f7d9a | |||

| 7c7e3931a0 | |||

| 6be3d62d89 | |||

| 6f509a8a1e | |||

| 4379fabf16 | |||

| 6b66e1a077 | |||

| c11a3e0fdc | |||

| 3418033c55 | |||

| caa9a846ed | |||

| 8ee76bcea0 | |||

| 47325cbe01 | |||

| e0c8417297 | |||

| 9238ee9572 | |||

| 64af37e0cd | |||

| 9f9b79f30b | |||

| 265f41887f | |||

| 4f09e5d04c | |||

| 434f321336 | |||

| f4e0d1be58 | |||

| e5bae0604b | |||

| e7da083c31 | |||

| 367c32dabe | |||

| e054238af6 | |||

| e8faf6d59a | |||

| baa4ea3cd8 | |||

| 75ef0f0329 | |||

| 65185c0011 | |||

| eb94613d7d | |||

| 67f4f4fb49 | |||

| a7ecf4ac4c | |||

| 45765b625a | |||

| aa0a184ebe | |||

| 069f9f0d5d | |||

| c82b520ea8 | |||

| 9d6e5bde4a | |||

| 0eb3669fbf | |||

| 30449b6054 | |||

| f5f71a19b8 | |||

| 0135971769 | |||

| 8579795c40 | |||

| 9d77fd7eec | |||

| 8c40d1bd72 | |||

| 7a0bc7d888 | |||

| 1e07014f86 | |||

| 49281b24e5 | |||

| a8b1980de4 | |||

| b8cd5f0482 | |||

| cc9f0788aa | |||

| 209910299d | |||

| 17926ff5d9 | |||

| 957fb0667c | |||

| 8d17aed785 | |||

| 7ef8d5ddde | |||

| 9930a2e167 | |||

| a86be9ebf2 | |||

| ad6665c8b6 | |||

| 923162ae9d | |||

| dd2bd67049 | |||

| d500bbff04 | |||

| e759bd1a99 | |||

| 94daf4cea4 | |||

| 2379792e0a | |||

| dba6d7a8a6 | |||

| 086c206b76 | |||

| 5dd567deef | |||

| b6d8f737ca | |||

| 491ba9da84 | |||

| a420a9293f | |||

| c1bc5f6a07 | |||

| 9834c251d0 | |||

| 54340ed4c6 | |||

| 96a0a9202c | |||

| a4c081d3a1 | |||

| d1b6206858 | |||

| 0eb6849fe3 | |||

| b725fdb093 | |||

| 1436bb1ff2 | |||

| 5a44c36b1f | |||

| 5d990502cb | |||

| 64735da716 | |||

| 95b82aa6dc | |||

| f09952f3d7 | |||

| b98e04dc56 | |||

| cb436250da | |||

| 4376032e3a | |||

| c231331e05 | |||

| 624c151ca2 | |||

| 5d0356f74b | |||

| b019416518 | |||

| 4fcd9e3bd6 | |||

| 66bf889c39 | |||

| a2811842c8 | |||

| 1929601425 | |||

| 282afee47e | |||

| e701ccc949 | |||

| 6543497c17 | |||

| 7d9af5a937 | |||

| 720c54a5bb | |||

| 5dca3c41f2 | |||

| 929546f60b | |||

| cb0ce9986c | |||

| 064eba00fd | |||

| a4336a39d6 | |||

| 298989c4b9 | |||

| 48c28c2267 | |||

| d76ecbc9c9 | |||

| 79fb9c00aa | |||

| c9e03f37ce | |||

| aa5f1699a7 | |||

| e1e9126d03 | |||

| 672a4b3723 | |||

| 955f76baab | |||

| 7da8a5e2d1 | |||

| ff82fbf112 | |||

| 8503a0a58f | |||

| b1e9512f44 | |||

| 608def9c78 | |||

| bcb21bc1d8 | |||

| f63096620a | |||

| 9b26892bae | |||

| 572475ce14 | |||

| 876d7995e1 | |||

| b8655e30d4 | |||

| 7cf0d55546 | |||

| ce60b960c0 | |||

| cebcb5b92d | |||

| 11a0f96f5e | |||

| 74ebaf1744 | |||

| f7496ea6d1 | |||

| bebba7dc1f | |||

| afb2bf442c | |||

| c7de48c982 | |||

| f906112c03 | |||

| 8ef864fb39 | |||

| 1c9b5ab53c | |||

| c10faae3b5 | |||

| 2104dd5a0a | |||

| fbe64037db | |||

| d8c50b150c | |||

| 8871bb2d8e | |||

| a148454376 | |||

| be518b569b | |||

| c998fbe2ae | |||

| 9f12cd0c09 | |||

| 0d0fee1ca1 | |||

| a0410c4677 | |||

| 8fe464cfa3 | |||

| 3e2d6d9e8b | |||

| 32d677787b | |||

| dfd1c4eab3 | |||

| 36bb1f989d | |||

| 684f4c59e0 | |||

| 1b77e8a69a | |||

| 662e10c3e0 | |||

| c935fdb12f | |||

| 9e16937914 | |||

| f705202381 | |||

| f5532ad9f7 | |||

| 570e71f050 | |||

| c9cc4b4369 | |||

| 7111aa3b18 | |||

| 12eba4bcc7 | |||

| 4610de8fdd | |||

| 3fcc2dd944 | |||

| 8299bae2d4 | |||

| 604ccf7552 | |||

| f3dd47948a | |||

| c3bb207488 | |||

| 9009d1bfb3 | |||

| fa4d9e8bcb | |||

| 34b77efc87 | |||

| 5ca0ccbcd2 | |||

| 6aa4e52480 | |||

| f98e9a2ad7 | |||

| c6134cc25b | |||

| 0443b39264 | |||

| 8b0b8efbcb | |||

| 97449cee43 | |||

| ab5252c750 | |||

| 05a27cb34d | |||

| b02eab57d2 | |||

| b8d52cc3e4 | |||

| 7d9bab9508 | |||

| 944181a30e | |||

| d8dd50505a | |||

| d78082f5e4 | |||

| 08e501e57b | |||

| 29a607427d | |||

| afb830c91f | |||

| c1326ac3d5 | |||

| 513a1adf57 | |||

| 7871b38c80 | |||

| b34d2d7dee | |||

| d7dfa8c22d | |||

| 8df274f0af | |||

| 07c4ebb7f2 | |||

| 49605b257d | |||

| fa4e232d73 | |||

| bd84cf6586 | |||

| 6e37f70d55 | |||

| d97112d7f0 |

3

.gitignore

vendored


@ -1,4 +1,3 @@

|

||||

|

||||

Cargo.lock

|

||||

/target/

|

||||

**/*.rs.bk

|

||||

Cargo.lock

|

||||

|

||||

@ -9,7 +9,7 @@ matrix:

|

||||

- rust: stable

|

||||

- rust: nightly

|

||||

env:

|

||||

- FEATURES='asm,unstable'

|

||||

- FEATURES='unstable'

|

||||

before_script: |

|

||||

export PATH="$PATH:$HOME/.cargo/bin"

|

||||

rustup component add rustfmt-preview

|

||||

|

||||

55

Cargo.toml


@ -1,30 +1,57 @@

|

||||

[package]

|

||||

name = "silk"

|

||||

description = "A silky smooth implementation of the Loom architecture"

|

||||

version = "0.2.1"

|

||||

documentation = "https://docs.rs/silk"

|

||||

name = "solana"

|

||||

description = "High Performance Blockchain"

|

||||

version = "0.4.0"

|

||||

documentation = "https://docs.rs/solana"

|

||||

homepage = "http://loomprotocol.com/"

|

||||

repository = "https://github.com/loomprotocol/silk"

|

||||

repository = "https://github.com/solana-labs/solana"

|

||||

authors = [

|

||||

"Anatoly Yakovenko <aeyakovenko@gmail.com>",

|

||||

"Greg Fitzgerald <garious@gmail.com>",

|

||||

"Anatoly Yakovenko <anatoly@solana.co>",

|

||||

"Greg Fitzgerald <greg@solana.co>",

|

||||

]

|

||||

license = "Apache-2.0"

|

||||

|

||||

[[bin]]

|

||||

name = "silk-demo"

|

||||

path = "src/bin/demo.rs"

|

||||

name = "solana-historian-demo"

|

||||

path = "src/bin/historian-demo.rs"

|

||||

|

||||

[[bin]]

|

||||

name = "solana-client-demo"

|

||||

path = "src/bin/client-demo.rs"

|

||||

|

||||

[[bin]]

|

||||

name = "solana-testnode"

|

||||

path = "src/bin/testnode.rs"

|

||||

|

||||

[[bin]]

|

||||

name = "solana-genesis"

|

||||

path = "src/bin/genesis.rs"

|

||||

|

||||

[[bin]]

|

||||

name = "solana-genesis-demo"

|

||||

path = "src/bin/genesis-demo.rs"

|

||||

|

||||

[[bin]]

|

||||

name = "solana-mint"

|

||||

path = "src/bin/mint.rs"

|

||||

|

||||

[badges]

|

||||

codecov = { repository = "loomprotocol/silk", branch = "master", service = "github" }

|

||||

codecov = { repository = "solana-labs/solana", branch = "master", service = "github" }

|

||||

|

||||

[features]

|

||||

unstable = []

|

||||

asm = ["sha2-asm"]

|

||||

ipv6 = []

|

||||

|

||||

[dependencies]

|

||||

rayon = "1.0.0"

|

||||

itertools = "0.7.6"

|

||||

sha2 = "0.7.0"

|

||||

sha2-asm = {version="0.3", optional=true}

|

||||

digest = "0.7.2"

|

||||

generic-array = { version = "0.9.0", default-features = false, features = ["serde"] }

|

||||

serde = "1.0.27"

|

||||

serde_derive = "1.0.27"

|

||||

serde_json = "1.0.10"

|

||||

ring = "0.12.1"

|

||||

untrusted = "0.5.1"

|

||||

bincode = "1.0.0"

|

||||

chrono = { version = "0.4.0", features = ["serde"] }

|

||||

log = "^0.4.1"

|

||||

matches = "^0.1.6"

|

||||

|

||||

123

README.md


@ -1,69 +1,80 @@

|

||||

[](https://crates.io/crates/silk)

|

||||

[](https://docs.rs/silk)

|

||||

[](https://travis-ci.org/loomprotocol/silk)

|

||||

[](https://codecov.io/gh/loomprotocol/silk)

|

||||

[](https://crates.io/crates/solana)

|

||||

[](https://docs.rs/solana)

|

||||

[](https://travis-ci.org/solana-labs/solana)

|

||||

[](https://codecov.io/gh/solana-labs/solana)

|

||||

|

||||

# Silk, a silky smooth implementation of the Loom specification

|

||||

Disclaimer

|

||||

===

|

||||

|

||||

Loom is a new architecture for a high performance blockchain. Its whitepaper boasts a theoretical
throughput of 710k transactions per second on a 1 gbps network. The specification is implemented
in two git repositories. Research is performed in the loom repository. That work drives the
Loom specification forward. This repository, on the other hand, aims to implement the specification
as-is. We care a great deal about quality, clarity and short learning curve. We avoid the use
of `unsafe` Rust and write tests for *everything*. Optimizations are only added when
corresponding benchmarks are also added that demonstrate real performance boosts. We expect the
feature set here will always be a ways behind the loom repo, but that this is an implementation
you can take to the bank, literally.

|

||||

All claims, content, designs, algorithms, estimates, roadmaps, specifications, and performance measurements described in this project are done with the author's best effort. It is up to the reader to check and validate their accuracy and truthfulness. Furthermore nothing in this project constitutes a solicitation for investment.

|

||||

|

||||

# Usage

|

||||

Solana: High Performance Blockchain

|

||||

===

|

||||

|

||||

Add the latest [silk package](https://crates.io/crates/silk) to the `[dependencies]` section

|

||||

of your Cargo.toml.

|

||||

Solana™ is a new architecture for a high performance blockchain. It aims to support
over 700 thousand transactions per second on a gigabit network.

|

||||

|

||||

Create a *Historian* and send it *events* to generate an *event log*, where each log *entry*

|

||||

is tagged with the historian's latest *hash*. Then ensure the order of events was not tampered

|

||||

with by verifying each entry's hash can be generated from the hash in the previous entry:

|

||||

Running the demo

|

||||

===

|

||||

|

||||

```rust

|

||||

extern crate silk;

|

||||

First, install Rust's package manager Cargo.

|

||||

|

||||

use silk::historian::Historian;

|

||||

use silk::log::{verify_slice, Entry, Event, Sha256Hash};

|

||||

use std::{thread, time};

|

||||

use std::sync::mpsc::SendError;

|

||||

|

||||

fn create_log(hist: &Historian) -> Result<(), SendError<Event>> {

|

||||

hist.sender.send(Event::Tick)?;

|

||||

thread::sleep(time::Duration::new(0, 100_000));

|

||||

hist.sender.send(Event::UserDataKey(0xdeadbeef))?;

|

||||

thread::sleep(time::Duration::new(0, 100_000));

|

||||

hist.sender.send(Event::Tick)?;

|

||||

Ok(())

|

||||

}

|

||||

|

||||

fn main() {

|

||||

let seed = Sha256Hash::default();

|

||||

let hist = Historian::new(&seed);

|

||||

create_log(&hist).expect("send error");

|

||||

drop(hist.sender);

|

||||

let entries: Vec<Entry> = hist.receiver.iter().collect();

|

||||

for entry in &entries {

|

||||

println!("{:?}", entry);

|

||||

}

|

||||

assert!(verify_slice(&entries, &seed));

|

||||

}

|

||||

```bash

|

||||

$ curl https://sh.rustup.rs -sSf | sh

|

||||

$ source $HOME/.cargo/env

|

||||

```

|

||||

|

||||

Running the program should produce a log similar to:

|

||||

The testnode server is initialized with a ledger from stdin and
generates new ledger entries on stdout. To create the input ledger, we'll need
to create *the mint* and use it to generate a *genesis ledger*. It's done in
two steps because the mint.json file contains a private key that will be
used later in this demo.

|

||||

|

||||

```rust

|

||||

Entry { num_hashes: 0, end_hash: [0, ...], event: Tick }

|

||||

Entry { num_hashes: 6, end_hash: [67, ...], event: UserDataKey(3735928559) }

|

||||

Entry { num_hashes: 5, end_hash: [123, ...], event: Tick }

|

||||

```bash

|

||||

$ echo 1000000000 | cargo run --release --bin solana-mint | tee mint.json

|

||||

$ cat mint.json | cargo run --release --bin solana-genesis | tee genesis.log

|

||||

```

|

||||

|

||||

Now you can start the server:

|

||||

|

||||

# Developing

|

||||

```bash

|

||||

$ cat genesis.log | cargo run --release --bin solana-testnode | tee transactions0.log

|

||||

```

|

||||

|

||||

Then, in a separate shell, let's execute some transactions. Note we pass in
the JSON configuration file here, not the genesis ledger.

|

||||

|

||||

```bash

|

||||

$ cat mint.json | cargo run --release --bin solana-client-demo

|

||||

```

|

||||

|

||||

Now kill the server with Ctrl-C, and take a look at the ledger. You should
see something similar to:

|

||||

|

||||

```json

|

||||

{"num_hashes":27,"id":[0, "..."],"event":"Tick"}

|

||||

{"num_hashes":3,"id":[67, "..."],"event":{"Transaction":{"tokens":42}}}

|

||||

{"num_hashes":27,"id":[0, "..."],"event":"Tick"}

|

||||

```

|

||||

|

||||

Now restart the server from where we left off. Pass it both the genesis ledger and
the transaction ledger.

|

||||

|

||||

```bash

|

||||

$ cat genesis.log transactions0.log | cargo run --release --bin solana-testnode | tee transactions1.log

|

||||

```

|

||||

|

||||

Lastly, run the client demo again and verify that all funds were spent in the
previous round, so no additional transactions are added.

|

||||

|

||||

```bash

|

||||

$ cat mint.json | cargo run --release --bin solana-client-demo

|

||||

```

|

||||

|

||||

Stop the server again, and verify there are only Tick entries, and no Transaction entries.
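The final check above can be automated. The following is a minimal sketch, not part of the repository: `count_entries` is a hypothetical helper, and it assumes each ledger line looks like the JSON sample shown earlier in this README. It uses plain substring matching to stay dependency-free; a more robust check would parse each line with `serde_json`.

```rust
/// Count Tick and Transaction entries in a ledger given as JSON lines.
/// Hypothetical helper: it matches substrings instead of parsing JSON.
fn count_entries(ledger: &str) -> (usize, usize) {
    let mut ticks = 0;
    let mut transactions = 0;
    for line in ledger.lines() {
        if line.contains("\"event\":\"Tick\"") {
            ticks += 1;
        } else if line.contains("\"Transaction\"") {
            transactions += 1;
        }
    }
    (ticks, transactions)
}

fn main() {
    // Sample lines copied from the ledger excerpt in the README.
    let sample = r#"{"num_hashes":27,"id":[0, "..."],"event":"Tick"}
{"num_hashes":3,"id":[67, "..."],"event":{"Transaction":{"tokens":42}}}
{"num_hashes":27,"id":[0, "..."],"event":"Tick"}"#;

    let (ticks, transactions) = count_entries(sample);
    println!("ticks: {}, transactions: {}", ticks, transactions);
}
```

For the second run of the demo, the transaction count returned for `transactions1.log` should be zero.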

|

||||

|

||||

Developing

|

||||

===

|

||||

|

||||

Building

|

||||

---

|

||||

@ -79,8 +90,8 @@ $ rustup component add rustfmt-preview

|

||||

Download the source code:

|

||||

|

||||

```bash

|

||||

$ git clone https://github.com/loomprotocol/silk.git

|

||||

$ cd silk

|

||||

$ git clone https://github.com/solana-labs/solana.git

|

||||

$ cd solana

|

||||

```

|

||||

|

||||

Testing

|

||||

@ -104,5 +115,5 @@ $ rustup install nightly

|

||||

Run the benchmarks:

|

||||

|

||||

```bash

|

||||

$ cargo +nightly bench --features="asm,unstable"

|

||||

$ cargo +nightly bench --features="unstable"

|

||||

```

|

||||

|

||||

15

doc/consensus.msc

Normal file


@ -0,0 +1,15 @@

|

||||

msc {

|

||||

client,leader,verifier_a,verifier_b,verifier_c;

|

||||

|

||||

client=>leader [ label = "SUBMIT" ] ;

|

||||

leader=>client [ label = "CONFIRMED" ] ;

|

||||

leader=>verifier_a [ label = "CONFIRMED" ] ;

|

||||

leader=>verifier_b [ label = "CONFIRMED" ] ;

|

||||

leader=>verifier_c [ label = "CONFIRMED" ] ;

|

||||

verifier_a=>leader [ label = "VERIFIED" ] ;

|

||||

verifier_b=>leader [ label = "VERIFIED" ] ;

|

||||

leader=>client [ label = "FINALIZED" ] ;

|

||||

leader=>verifier_a [ label = "FINALIZED" ] ;

|

||||

leader=>verifier_b [ label = "FINALIZED" ] ;

|

||||

leader=>verifier_c [ label = "FINALIZED" ] ;

|

||||

}
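The message flow in this chart can be read as a small state machine on the leader. The sketch below is one possible reading, not code from the repository: the `Leader` type, the `Status` enum, and especially the two-thirds threshold are assumptions. The chart only shows the leader sending FINALIZED after two of the three verifiers reply VERIFIED; it does not state the rule.

```rust
use std::collections::HashSet;

#[derive(Debug, PartialEq, Clone, Copy)]
enum Status {
    Confirmed,
    Finalized,
}

/// Hypothetical leader that tracks which verifiers have replied VERIFIED.
struct Leader {
    verifiers: Vec<&'static str>,
    verified_by: HashSet<&'static str>,
}

impl Leader {
    fn new(verifiers: Vec<&'static str>) -> Self {
        Leader {
            verifiers,
            verified_by: HashSet::new(),
        }
    }

    /// Record a VERIFIED message; replies from unknown senders are ignored
    /// and repeated replies from the same verifier count once.
    fn on_verified(&mut self, verifier: &'static str) -> Status {
        if self.verifiers.contains(&verifier) {
            self.verified_by.insert(verifier);
        }
        self.status()
    }

    /// Assumed rule: finalize once at least two thirds of verifiers agree.
    fn status(&self) -> Status {
        if self.verified_by.len() * 3 >= self.verifiers.len() * 2 {
            Status::Finalized
        } else {
            Status::Confirmed
        }
    }
}

fn main() {
    let mut leader = Leader::new(vec!["verifier_a", "verifier_b", "verifier_c"]);
    println!("{:?}", leader.status());
    println!("{:?}", leader.on_verified("verifier_a"));
    println!("{:?}", leader.on_verified("verifier_b"));
}
```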

|

||||

65

doc/historian.md

Normal file


@ -0,0 +1,65 @@

|

||||

The Historian

|

||||

===

|

||||

|

||||

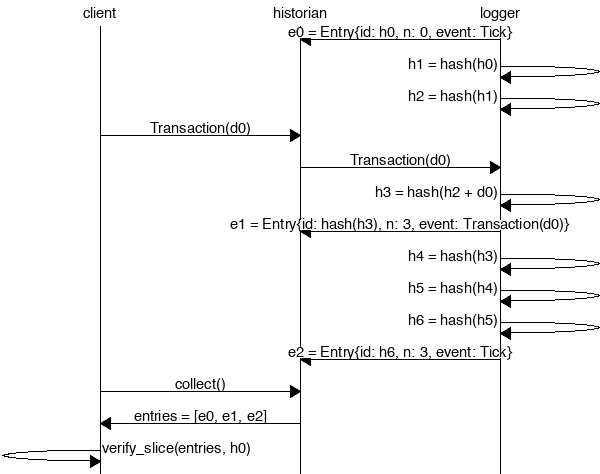

Create a *Historian* and send it *events* to generate an *event log*, where each *entry*
is tagged with the historian's latest *hash*. Then ensure the order of events was not tampered
with by verifying each entry's hash can be generated from the hash in the previous entry:

|

||||

|

||||

|

||||

|

||||

```rust

|

||||

extern crate solana;

|

||||

|

||||

use solana::historian::Historian;

|

||||

use solana::ledger::{verify_slice, Entry, Hash};

|

||||

use solana::event::{generate_keypair, get_pubkey, sign_claim_data, Event};

|

||||

use std::thread::sleep;

|

||||

use std::time::Duration;

|

||||

use std::sync::mpsc::SendError;

|

||||

|

||||

fn create_ledger(hist: &Historian<Hash>) -> Result<(), SendError<Event<Hash>>> {

|

||||

sleep(Duration::from_millis(15));

|

||||

let tokens = 42;

|

||||

let keypair = generate_keypair();

|

||||

let event0 = Event::new_claim(get_pubkey(&keypair), tokens, sign_claim_data(&tokens, &keypair));

|

||||

hist.sender.send(event0)?;

|

||||

sleep(Duration::from_millis(10));

|

||||

Ok(())

|

||||

}

|

||||

|

||||

fn main() {

|

||||

let seed = Hash::default();

|

||||

let hist = Historian::new(&seed, Some(10));

|

||||

create_ledger(&hist).expect("send error");

|

||||

drop(hist.sender);

|

||||

let entries: Vec<Entry<Hash>> = hist.receiver.iter().collect();

|

||||

for entry in &entries {

|

||||

println!("{:?}", entry);

|

||||

}

|

||||

// Proof-of-History: Verify the historian learned about the events

|

||||

// in the same order they appear in the vector.

|

||||

assert!(verify_slice(&entries, &seed));

|

||||

}

|

||||

```

|

||||

|

||||

Running the program should produce a ledger similar to:

|

||||

|

||||

```rust

|

||||

Entry { num_hashes: 0, id: [0, ...], event: Tick }

|

||||

Entry { num_hashes: 3, id: [67, ...], event: Transaction { tokens: 42 } }

|

||||

Entry { num_hashes: 3, id: [123, ...], event: Tick }

|

||||

```

|

||||

|

||||

Proof-of-History

|

||||

---

|

||||

|

||||

Take note of the last line:

|

||||

|

||||

```rust

|

||||

assert!(verify_slice(&entries, &seed));

|

||||

```

|

||||

|

||||

[It's a proof!](https://en.wikipedia.org/wiki/Curry–Howard_correspondence) For each entry returned by the
historian, we can verify that `id` is the result of applying a sha256 hash to the previous `id`
exactly `num_hashes` times, and then hashing the event data on top of that. Because the event data is
included in the hash, the events cannot be reordered without regenerating all the hashes.
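The check described above can be sketched without the `solana` crate. This is an illustration under stated assumptions, not the crate's implementation: the `Entry` struct, `next_id`, and `make_entry` are hypothetical, events are reduced to raw bytes (`None` stands for a Tick), and `DefaultHasher` from the standard library stands in for sha256 so the example has no dependencies. It follows the prose literally: hash the previous `id` `num_hashes` times, then mix in the event data.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::Hasher;

// Stand-in for a sha256 digest (assumption: a 64-bit hash keeps the sketch std-only).
type Hash = u64;

fn hash(prev: Hash) -> Hash {
    let mut h = DefaultHasher::new();
    h.write_u64(prev);
    h.finish()
}

fn hash_with_data(prev: Hash, data: &[u8]) -> Hash {
    let mut h = DefaultHasher::new();
    h.write_u64(prev);
    h.write(data);
    h.finish()
}

#[derive(Debug, Clone, PartialEq)]
struct Entry {
    num_hashes: u64,
    id: Hash,
    event: Option<Vec<u8>>, // None models a Tick
}

/// Hash `start` `num_hashes` times, then hash the event data on top, if any.
fn next_id(start: Hash, num_hashes: u64, event: &Option<Vec<u8>>) -> Hash {
    let mut id = start;
    for _ in 0..num_hashes {
        id = hash(id);
    }
    match event {
        Some(data) => hash_with_data(id, data),
        None => id,
    }
}

fn make_entry(prev: Hash, num_hashes: u64, event: Option<Vec<u8>>) -> Entry {
    let id = next_id(prev, num_hashes, &event);
    Entry { num_hashes, id, event }
}

/// Verify that every entry's id can be regenerated from the previous id.
fn verify_slice(entries: &[Entry], seed: Hash) -> bool {
    let mut prev = seed;
    for entry in entries {
        if entry.id != next_id(prev, entry.num_hashes, &entry.event) {
            return false;
        }
        prev = entry.id;
    }
    true
}

fn main() {
    let seed: Hash = 0;
    let e0 = make_entry(seed, 0, None);
    let e1 = make_entry(e0.id, 3, Some(b"tokens: 42".to_vec()));
    let e2 = make_entry(e1.id, 3, None);
    let mut entries = vec![e0, e1, e2];
    println!("in order: {}", verify_slice(&entries, seed));

    // Reordering entries breaks the chain unless the hashes are regenerated.
    entries.swap(1, 2);
    println!("reordered: {}", verify_slice(&entries, seed));
}
```

Tampering with an event's data has the same effect as reordering: the stored `id` no longer matches the recomputed one, so verification fails.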

|

||||

18

doc/historian.msc

Normal file


@ -0,0 +1,18 @@

|

||||

msc {

|

||||

client,historian,recorder;

|

||||

|

||||

recorder=>historian [ label = "e0 = Entry{id: h0, n: 0, event: Tick}" ] ;

|

||||

recorder=>recorder [ label = "h1 = hash(h0)" ] ;

|

||||

recorder=>recorder [ label = "h2 = hash(h1)" ] ;

|

||||

client=>historian [ label = "Transaction(d0)" ] ;

|

||||

historian=>recorder [ label = "Transaction(d0)" ] ;

|

||||

recorder=>recorder [ label = "h3 = hash(h2 + d0)" ] ;

|

||||

recorder=>historian [ label = "e1 = Entry{id: hash(h3), n: 3, event: Transaction(d0)}" ] ;

|

||||

recorder=>recorder [ label = "h4 = hash(h3)" ] ;

|

||||

recorder=>recorder [ label = "h5 = hash(h4)" ] ;

|

||||

recorder=>recorder [ label = "h6 = hash(h5)" ] ;

|

||||

recorder=>historian [ label = "e2 = Entry{id: h6, n: 3, event: Tick}" ] ;

|

||||

client=>historian [ label = "collect()" ] ;

|

||||

historian=>client [ label = "entries = [e0, e1, e2]" ] ;

|

||||

client=>client [ label = "verify_slice(entries, h0)" ] ;

|

||||

}

|

||||

399

src/accountant.rs

Normal file


@ -0,0 +1,399 @@

|

||||

//! The `accountant` module tracks client balances, and the progress of pending

|

||||

//! transactions. It offers a high-level public API that signs transactions

|

||||

//! on behalf of the caller, and a private low-level API for when they have

|

||||

//! already been signed and verified.

|

||||

|

||||

use chrono::prelude::*;

|

||||

use entry::Entry;

|

||||

use event::Event;

|

||||

use hash::Hash;

|

||||

use historian::Historian;

|

||||

use mint::Mint;

|

||||

use plan::{Plan, Witness};

|

||||

use recorder::Signal;

|

||||

use signature::{KeyPair, PublicKey, Signature};

|

||||

use std::collections::hash_map::Entry::Occupied;

|

||||

use std::collections::{HashMap, HashSet};

|

||||

use std::result;

|

||||

use std::sync::mpsc::SendError;

|

||||

use transaction::Transaction;

|

||||

|

||||

#[derive(Debug, PartialEq, Eq)]

|

||||

pub enum AccountingError {

|

||||

InsufficientFunds,

|

||||

InvalidTransfer,

|

||||

InvalidTransferSignature,

|

||||

SendError,

|

||||

}

|

||||

|

||||

pub type Result<T> = result::Result<T, AccountingError>;

|

||||

|

||||

/// Commit funds to the 'to' party.

|

||||

fn complete_transaction(balances: &mut HashMap<PublicKey, i64>, plan: &Plan) {

|

||||

if let Plan::Pay(ref payment) = *plan {

|

||||

*balances.entry(payment.to).or_insert(0) += payment.tokens;

|

||||

}

|

||||

}

|

||||

|

||||

pub struct Accountant {

|

||||

pub historian: Historian,

|

||||

pub balances: HashMap<PublicKey, i64>,

|

||||

pub first_id: Hash,

|

||||

pending: HashMap<Signature, Plan>,

|

||||

time_sources: HashSet<PublicKey>,

|

||||

last_time: DateTime<Utc>,

|

||||

}

|

||||

|

||||

impl Accountant {

|

||||

/// Create an Accountant using an existing ledger.

|

||||

pub fn new_from_entries<I>(entries: I, ms_per_tick: Option<u64>) -> Self

|

||||

where

|

||||

I: IntoIterator<Item = Entry>,

|

||||

{

|

||||

let mut entries = entries.into_iter();

|

||||

|

||||

// The first item in the ledger is required to be an entry with zero num_hashes,

|

||||

// which implies its id can be used as the ledger's seed.

|

||||

let entry0 = entries.next().unwrap();

|

||||

let start_hash = entry0.id;

|

||||

|

||||

let hist = Historian::new(&start_hash, ms_per_tick);

|

||||

let mut acc = Accountant {

|

||||

historian: hist,

|

||||

balances: HashMap::new(),

|

||||

first_id: start_hash,

|

||||

pending: HashMap::new(),

|

||||

time_sources: HashSet::new(),

|

||||

last_time: Utc.timestamp(0, 0),

|

||||

};

|

||||

|

||||

// The second item in the ledger is a special transaction where the to and from

|

||||

// fields are the same. That entry should be treated as a deposit, not a

|

||||

// transfer to oneself.

|

||||

let entry1 = entries.next().unwrap();

|

||||

acc.process_verified_event(&entry1.events[0], true).unwrap();

|

||||

|

||||

for entry in entries {

|

||||

for event in entry.events {

|

||||

acc.process_verified_event(&event, false).unwrap();

|

||||

}

|

||||

}

|

||||

acc

|

||||

}

|

||||

|

||||

/// Create an Accountant with only a Mint. Typically used by unit tests.

|

||||

pub fn new(mint: &Mint, ms_per_tick: Option<u64>) -> Self {

|

||||

Self::new_from_entries(mint.create_entries(), ms_per_tick)

|

||||

}

|

||||

|

||||

fn is_deposit(allow_deposits: bool, from: &PublicKey, plan: &Plan) -> bool {

|

||||

if let Plan::Pay(ref payment) = *plan {

|

||||

allow_deposits && *from == payment.to

|

||||

} else {

|

||||

false

|

||||

}

|

||||

}

|

||||

|

||||

/// Process and log the given Transaction.

|

||||

pub fn log_verified_transaction(&mut self, tr: Transaction) -> Result<()> {

|

||||

if self.get_balance(&tr.from).unwrap_or(0) < tr.tokens {

|

||||

return Err(AccountingError::InsufficientFunds);

|

||||

}

|

||||

|

||||

self.process_verified_transaction(&tr, false)?;

|

||||

if let Err(SendError(_)) = self.historian

|

||||

.sender

|

||||

.send(Signal::Event(Event::Transaction(tr)))

|

||||

{

|

||||

return Err(AccountingError::SendError);

|

||||

}

|

||||

|

||||

Ok(())

|

||||

}

|

||||

|

||||

/// Verify and process the given Transaction.

|

||||

pub fn log_transaction(&mut self, tr: Transaction) -> Result<()> {

|

||||

if !tr.verify() {

|

||||

return Err(AccountingError::InvalidTransfer);

|

||||

}

|

||||

|

||||

self.log_verified_transaction(tr)

|

||||

}

|

||||

|

||||

/// Process a Transaction that has already been verified.

|

||||

fn process_verified_transaction(

|

||||

self: &mut Self,

|

||||

tr: &Transaction,

|

||||

allow_deposits: bool,

|

||||

) -> Result<()> {

|

||||

if !self.historian.reserve_signature(&tr.sig) {

|

||||

return Err(AccountingError::InvalidTransferSignature);

|

||||

}

|

||||

|

||||

if !Self::is_deposit(allow_deposits, &tr.from, &tr.plan) {

|

||||

if let Some(x) = self.balances.get_mut(&tr.from) {

|

||||

*x -= tr.tokens;

|

||||

}

|

||||

}

|

||||

|

||||

let mut plan = tr.plan.clone();

|

||||

plan.apply_witness(&Witness::Timestamp(self.last_time));

|

||||

|

||||

if plan.is_complete() {

|

||||

complete_transaction(&mut self.balances, &plan);

|

||||

} else {

|

||||

self.pending.insert(tr.sig, plan);

|

||||

}

|

||||

|

||||

Ok(())

|

||||

}

|

||||

|

||||

/// Process a Witness Signature that has already been verified.

|

||||

fn process_verified_sig(&mut self, from: PublicKey, tx_sig: Signature) -> Result<()> {

|

||||

if let Occupied(mut e) = self.pending.entry(tx_sig) {

|

||||

e.get_mut().apply_witness(&Witness::Signature(from));

|

||||

if e.get().is_complete() {

|

||||

complete_transaction(&mut self.balances, e.get());

|

||||

e.remove_entry();

|

||||

}

|

||||

};

|

||||

|

||||

Ok(())

|

||||

}

|

||||

|

||||

/// Process a Witness Timestamp that has already been verified.

|

||||

fn process_verified_timestamp(&mut self, from: PublicKey, dt: DateTime<Utc>) -> Result<()> {

|

||||

// If this is the first timestamp we've seen, it probably came from the genesis block,

|

||||

// so we'll trust it.

|

||||

if self.last_time == Utc.timestamp(0, 0) {

|

||||

self.time_sources.insert(from);

|

||||

}

|

||||

|

||||

if self.time_sources.contains(&from) {

|

||||

if dt > self.last_time {

|

||||

self.last_time = dt;

|

||||

}

|

||||

} else {

|

||||

return Ok(());

|

||||

}

|

||||

|

||||

// Check to see if any timelocked transactions can be completed.

|

||||

let mut completed = vec![];

|

||||

for (key, plan) in &mut self.pending {

|

||||

plan.apply_witness(&Witness::Timestamp(self.last_time));

|

||||

if plan.is_complete() {

|

||||

complete_transaction(&mut self.balances, plan);

|

||||

completed.push(key.clone());

|

||||

}

|

||||

}

|

||||

|

||||

for key in completed {

|

||||

self.pending.remove(&key);

|

||||

}

|

||||

|

||||

Ok(())

|

||||

}

|

||||

|

||||

/// Process a Transaction or Witness that has already been verified.

|

||||

fn process_verified_event(self: &mut Self, event: &Event, allow_deposits: bool) -> Result<()> {

|

||||

match *event {

|

||||

Event::Transaction(ref tr) => self.process_verified_transaction(tr, allow_deposits),

|

||||

Event::Signature { from, tx_sig, .. } => self.process_verified_sig(from, tx_sig),

|

||||

Event::Timestamp { from, dt, .. } => self.process_verified_timestamp(from, dt),

|

||||

}

|

||||

}

|

||||

|

||||

/// Create, sign, and process a Transaction from `keypair` to `to` of

|

||||

/// `n` tokens where `last_id` is the last Entry ID observed by the client.

|

||||

pub fn transfer(

|

||||

self: &mut Self,

|

||||

n: i64,

|

||||

keypair: &KeyPair,

|

||||

to: PublicKey,

|

||||

last_id: Hash,

|

||||

) -> Result<Signature> {

|

||||

let tr = Transaction::new(keypair, to, n, last_id);

|

||||

let sig = tr.sig;

|

||||

self.log_transaction(tr).map(|_| sig)

|

||||

}

|

||||

|

||||

/// Create, sign, and process a postdated Transaction from `keypair`

|

||||

/// to `to` of `n` tokens on `dt` where `last_id` is the last Entry ID

|

||||

/// observed by the client.

|

||||

pub fn transfer_on_date(

|

||||

self: &mut Self,

|

||||

n: i64,

|

||||

keypair: &KeyPair,

|

||||

to: PublicKey,

|

||||

dt: DateTime<Utc>,

|

||||

last_id: Hash,

|

||||

) -> Result<Signature> {

|

||||

let tr = Transaction::new_on_date(keypair, to, dt, n, last_id);

|

||||

let sig = tr.sig;

|

||||

self.log_transaction(tr).map(|_| sig)

|

||||

}

|

||||

|

||||

pub fn get_balance(self: &Self, pubkey: &PublicKey) -> Option<i64> {

|

||||

self.balances.get(pubkey).cloned()

|

||||

}

|

||||

}

|

||||

|

||||

#[cfg(test)]

|

||||

mod tests {

|

||||

use super::*;

|

||||

use recorder::ExitReason;

|

||||

use signature::KeyPairUtil;

|

||||

|

||||

#[test]

|

||||

fn test_accountant() {

|

||||

let alice = Mint::new(10_000);

|

||||

let bob_pubkey = KeyPair::new().pubkey();

|

||||

let mut acc = Accountant::new(&alice, Some(2));

|

||||

acc.transfer(1_000, &alice.keypair(), bob_pubkey, alice.seed())

|

||||

.unwrap();

|

||||

assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 1_000);

|

||||

|

||||

acc.transfer(500, &alice.keypair(), bob_pubkey, alice.seed())

|

||||

.unwrap();

|

||||

assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 1_500);

|

||||

|

||||

drop(acc.historian.sender);

|

||||

assert_eq!(

|

||||

acc.historian.thread_hdl.join().unwrap(),

|

||||

ExitReason::RecvDisconnected

|

||||

);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn test_invalid_transfer() {

|

||||

let alice = Mint::new(11_000);

|

||||

let mut acc = Accountant::new(&alice, Some(2));

|

||||

let bob_pubkey = KeyPair::new().pubkey();

|

||||

acc.transfer(1_000, &alice.keypair(), bob_pubkey, alice.seed())

|

||||

.unwrap();

|

||||

assert_eq!(

|

||||

acc.transfer(10_001, &alice.keypair(), bob_pubkey, alice.seed()),

|

||||

Err(AccountingError::InsufficientFunds)

|

||||

);

|

||||

|

||||

let alice_pubkey = alice.keypair().pubkey();

|

||||

assert_eq!(acc.get_balance(&alice_pubkey).unwrap(), 10_000);

|

||||

assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 1_000);

|

||||

|

||||

drop(acc.historian.sender);

|

||||

assert_eq!(

|

||||

acc.historian.thread_hdl.join().unwrap(),

|

||||

ExitReason::RecvDisconnected

|

||||

);

|

||||

}

|

||||

|

||||

#[test]

|

||||

fn test_overspend_attack() {

|

||||

let alice = Mint::new(1);

|

||||

let mut acc = Accountant::new(&alice, None);

|

||||

let bob_pubkey = KeyPair::new().pubkey();

|

||||

let mut tr = Transaction::new(&alice.keypair(), bob_pubkey, 1, alice.seed());

|

||||

if let Plan::Pay(ref mut payment) = tr.plan {

|

||||

payment.tokens = 2; // <-- attack!

|

||||

}

|

||||

assert_eq!(

|

||||

acc.log_transaction(tr.clone()),

|

||||

Err(AccountingError::InvalidTransfer)

|

||||

);

|

||||

|

||||

// Also, ensure all branches of the plan spend all tokens

|

||||

if let Plan::Pay(ref mut payment) = tr.plan {

|

||||

payment.tokens = 0; // <-- whoops!

|

||||

}

|

||||

assert_eq!(

|

||||

acc.log_transaction(tr.clone()),

|

||||

Err(AccountingError::InvalidTransfer)

|

||||

);

|

||||

}

|

||||

|

||||

    #[test]
    fn test_transfer_to_newb() {
        let alice = Mint::new(10_000);
        let mut acc = Accountant::new(&alice, Some(2));
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        acc.transfer(500, &alice_keypair, bob_pubkey, alice.seed())
            .unwrap();
        assert_eq!(acc.get_balance(&bob_pubkey).unwrap(), 500);

        drop(acc.historian.sender);
        assert_eq!(
            acc.historian.thread_hdl.join().unwrap(),
            ExitReason::RecvDisconnected
        );
    }

    #[test]
    fn test_transfer_on_date() {
        let alice = Mint::new(1);
        let mut acc = Accountant::new(&alice, Some(2));
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        let dt = Utc::now();
        acc.transfer_on_date(1, &alice_keypair, bob_pubkey, dt, alice.seed())
            .unwrap();

        // Alice's balance will be zero because all funds are locked up.
        assert_eq!(acc.get_balance(&alice.pubkey()), Some(0));

        // Bob's balance will be None because the funds have not been
        // sent.
        assert_eq!(acc.get_balance(&bob_pubkey), None);

        // Now, acknowledge the time in the condition occurred and
        // that bob's funds are now available.
        acc.process_verified_timestamp(alice.pubkey(), dt).unwrap();
        assert_eq!(acc.get_balance(&bob_pubkey), Some(1));

        acc.process_verified_timestamp(alice.pubkey(), dt).unwrap(); // <-- Attack! Attempt to process completed transaction.
        assert_ne!(acc.get_balance(&bob_pubkey), Some(2));
    }

    #[test]
    fn test_transfer_after_date() {
        let alice = Mint::new(1);
        let mut acc = Accountant::new(&alice, Some(2));
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        let dt = Utc::now();
        acc.process_verified_timestamp(alice.pubkey(), dt).unwrap();

        // The timestamp `dt` has already been processed, so this transfer should be processed immediately.
        acc.transfer_on_date(1, &alice_keypair, bob_pubkey, dt, alice.seed())
            .unwrap();

        assert_eq!(acc.get_balance(&alice.pubkey()), Some(0));
        assert_eq!(acc.get_balance(&bob_pubkey), Some(1));
    }

    #[test]
    fn test_cancel_transfer() {
        let alice = Mint::new(1);
        let mut acc = Accountant::new(&alice, Some(2));
        let alice_keypair = alice.keypair();
        let bob_pubkey = KeyPair::new().pubkey();
        let dt = Utc::now();
        let sig = acc.transfer_on_date(1, &alice_keypair, bob_pubkey, dt, alice.seed())
            .unwrap();

        // Alice's balance will be zero because all funds are locked up.
        assert_eq!(acc.get_balance(&alice.pubkey()), Some(0));

        // Bob's balance will be None because the funds have not been
        // sent.
        assert_eq!(acc.get_balance(&bob_pubkey), None);

        // Now, cancel the transaction. Alice gets her funds back, Bob never sees them.
        acc.process_verified_sig(alice.pubkey(), sig).unwrap();
        assert_eq!(acc.get_balance(&alice.pubkey()), Some(1));
        assert_eq!(acc.get_balance(&bob_pubkey), None);

        acc.process_verified_sig(alice.pubkey(), sig).unwrap(); // <-- Attack! Attempt to cancel completed transaction.
        assert_ne!(acc.get_balance(&alice.pubkey()), Some(2));
    }
}
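The last two tests above check that replaying a timestamp or a cancellation cannot credit an account twice. The sketch below shows one way such a property could be obtained: pending transfers live in a map, and completing or cancelling one removes its entry, so a replay finds nothing to act on. This is an illustration only; `Ledger`, `lock`, `cancel`, and the `u64` ids are hypothetical names, not the `Accountant` implementation from this diff.

```rust
use std::collections::HashMap;

/// Hypothetical toy ledger; NOT the crate's `Accountant`.
struct Ledger {
    balances: HashMap<&'static str, i64>,
    /// Pending transfers keyed by an illustrative id: (from, to, tokens).
    pending: HashMap<u64, (&'static str, &'static str, i64)>,
}

impl Ledger {
    fn new(owner: &'static str, tokens: i64) -> Self {
        let mut balances = HashMap::new();
        balances.insert(owner, tokens);
        Ledger { balances, pending: HashMap::new() }
    }

    fn balance(&self, who: &str) -> Option<i64> {
        self.balances.get(who).copied()
    }

    /// Debit the sender immediately and park the tokens as pending.
    fn lock(&mut self, id: u64, from: &'static str, to: &'static str, tokens: i64) -> bool {
        let bal = match self.balances.get_mut(from) {
            Some(bal) => bal,
            None => return false,
        };
        if *bal < tokens {
            return false;
        }
        *bal -= tokens;
        self.pending.insert(id, (from, to, tokens));
        true
    }

    /// Return the tokens to the sender. Removing the entry makes a replay a no-op.
    fn cancel(&mut self, id: u64) -> bool {
        match self.pending.remove(&id) {
            Some((from, _to, tokens)) => {
                *self.balances.entry(from).or_insert(0) += tokens;
                true
            }
            None => false,
        }
    }
}

fn main() {
    let mut ledger = Ledger::new("alice", 1);
    assert!(ledger.lock(7, "alice", "bob", 1));
    assert_eq!(ledger.balance("alice"), Some(0));
    assert!(ledger.cancel(7));
    assert!(!ledger.cancel(7)); // the replay finds nothing to cancel
    assert_eq!(ledger.balance("alice"), Some(1));
    assert_eq!(ledger.balance("bob"), None);
}
```

Removing the pending entry, rather than flagging it as done, keeps the replay check down to a single map lookup.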

189

src/accountant_skel.rs

Normal file

@ -0,0 +1,189 @@

//! The `accountant_skel` module is a microservice that exposes the high-level
//! Accountant API to the network. Its message encoding is currently
//! in flux. Clients should use AccountantStub to interact with it.

use accountant::Accountant;
use bincode::{deserialize, serialize};
use entry::Entry;
use hash::Hash;
use result::Result;
use serde_json;
use signature::PublicKey;
use std::default::Default;
use std::io::Write;
use std::net::{SocketAddr, UdpSocket};
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::mpsc::channel;
use std::sync::{Arc, Mutex};
use std::thread::{spawn, JoinHandle};
use std::time::Duration;
use streamer;
use transaction::Transaction;
use rayon::prelude::*;

pub struct AccountantSkel<W: Write + Send + 'static> {
    pub acc: Accountant,
    pub last_id: Hash,
    writer: W,
}

#[cfg_attr(feature = "cargo-clippy", allow(large_enum_variant))]
#[derive(Serialize, Deserialize, Debug)]
pub enum Request {
    Transaction(Transaction),
    GetBalance { key: PublicKey },
    GetId { is_last: bool },
}

impl Request {
    /// Verify the request is valid.
    pub fn verify(&self) -> bool {
        match *self {
            Request::Transaction(ref tr) => tr.verify(),
            _ => true,
        }
    }
}

/// Parallel verification of a batch of requests.
fn filter_valid_requests(reqs: Vec<(Request, SocketAddr)>) -> Vec<(Request, SocketAddr)> {
    reqs.into_par_iter().filter({ |x| x.0.verify() }).collect()
}

#[derive(Serialize, Deserialize, Debug)]
pub enum Response {
    Balance { key: PublicKey, val: Option<i64> },
    Entries { entries: Vec<Entry> },
    Id { id: Hash, is_last: bool },
}

impl<W: Write + Send + 'static> AccountantSkel<W> {
    /// Create a new AccountantSkel that wraps the given Accountant.
    pub fn new(acc: Accountant, w: W) -> Self {
        let last_id = acc.first_id;
        AccountantSkel {
            acc,
            last_id,
            writer: w,
        }
    }

    /// Process any Entry items that have been published by the Historian.
    pub fn sync(&mut self) -> Hash {
        while let Ok(entry) = self.acc.historian.receiver.try_recv() {
            self.last_id = entry.id;
            writeln!(self.writer, "{}", serde_json::to_string(&entry).unwrap()).unwrap();
        }
        self.last_id
    }

    /// Process Request items sent by clients.
    pub fn log_verified_request(&mut self, msg: Request) -> Option<Response> {
        match msg {
            Request::Transaction(tr) => {
                if let Err(err) = self.acc.log_verified_transaction(tr) {
                    eprintln!("Transaction error: {:?}", err);
                }
                None
            }
            Request::GetBalance { key } => {
                let val = self.acc.get_balance(&key);
                Some(Response::Balance { key, val })
            }
            Request::GetId { is_last } => Some(Response::Id {
                id: if is_last {
                    self.sync()
                } else {
                    self.acc.first_id
                },
                is_last,
            }),
        }
    }

    fn process(
        obj: &Arc<Mutex<AccountantSkel<W>>>,
        r_reader: &streamer::Receiver,
        s_responder: &streamer::Responder,
        packet_recycler: &streamer::PacketRecycler,
        response_recycler: &streamer::ResponseRecycler,
    ) -> Result<()> {
        let timer = Duration::new(1, 0);
        let msgs = r_reader.recv_timeout(timer)?;
        let msgs_ = msgs.clone();
        let rsps = streamer::allocate(response_recycler);
        let rsps_ = rsps.clone();
        {
            let mut reqs = vec![];
            for packet in &msgs.read().unwrap().packets {
                let rsp_addr = packet.meta.get_addr();
                let sz = packet.meta.size;
                let req = deserialize(&packet.data[0..sz])?;
                reqs.push((req, rsp_addr));
            }
            let reqs = filter_valid_requests(reqs);

            let mut num = 0;
            let mut ursps = rsps.write().unwrap();
            for (req, rsp_addr) in reqs {
                if let Some(resp) = obj.lock().unwrap().log_verified_request(req) {
                    if ursps.responses.len() <= num {
                        ursps
                            .responses
                            .resize((num + 1) * 2, streamer::Response::default());
                    }
                    let rsp = &mut ursps.responses[num];
                    let v = serialize(&resp)?;
                    let len = v.len();
                    rsp.data[..len].copy_from_slice(&v);
                    rsp.meta.size = len;
                    rsp.meta.set_addr(&rsp_addr);
                    num += 1;
                }
            }
            ursps.responses.resize(num, streamer::Response::default());
        }
        s_responder.send(rsps_)?;
        streamer::recycle(packet_recycler, msgs_);
        Ok(())
    }

    /// Create a UDP microservice that forwards messages to the given AccountantSkel.
    /// Set `exit` to shut down its threads.
    pub fn serve(
        obj: Arc<Mutex<AccountantSkel<W>>>,
        addr: &str,
        exit: Arc<AtomicBool>,
    ) -> Result<Vec<JoinHandle<()>>> {
        let read = UdpSocket::bind(addr)?;
        // make sure we are on the same interface
        let mut local = read.local_addr()?;
        local.set_port(0);
        let write = UdpSocket::bind(local)?;

        let packet_recycler = Arc::new(Mutex::new(Vec::new()));
        let response_recycler = Arc::new(Mutex::new(Vec::new()));
        let (s_reader, r_reader) = channel();
        let t_receiver = streamer::receiver(read, exit.clone(), packet_recycler.clone(), s_reader)?;

        let (s_responder, r_responder) = channel();
        let t_responder =
            streamer::responder(write, exit.clone(), response_recycler.clone(), r_responder);

        let skel = obj.clone();
        let t_server = spawn(move || loop {
            let e = AccountantSkel::process(
                &skel,
                &r_reader,
                &s_responder,
                &packet_recycler,
                &response_recycler,
            );
            if e.is_err() && exit.load(Ordering::Relaxed) {
                break;
            }
        });

        Ok(vec![t_receiver, t_responder, t_server])
    }
}
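In `serve`, the server thread loops on `process`, which waits up to one second on the request channel, and leaves the loop only when `process` fails while `exit` is set. The std-only sketch below isolates that shutdown pattern. `spawn_worker` and the `u32` payload are hypothetical stand-ins for the request pipeline. Unlike the original loop, the sketch also stops when every sender has been dropped.

```rust
use std::sync::atomic::{AtomicBool, Ordering};
use std::sync::mpsc::{channel, Receiver, RecvTimeoutError};
use std::sync::Arc;
use std::thread::{spawn, JoinHandle};
use std::time::Duration;

/// Hypothetical worker: sums incoming numbers until asked to exit.
fn spawn_worker(rx: Receiver<u32>, exit: Arc<AtomicBool>) -> JoinHandle<u32> {
    spawn(move || {
        let mut total = 0;
        loop {
            match rx.recv_timeout(Duration::from_millis(50)) {
                Ok(n) => total += n,
                // A timeout only ends the loop once the exit flag is set,
                // so messages that are already queued are drained first.
                Err(RecvTimeoutError::Timeout) => {
                    if exit.load(Ordering::Relaxed) {
                        break;
                    }
                }
                // All senders are gone; nothing more can arrive.
                Err(RecvTimeoutError::Disconnected) => break,
            }
        }
        total
    })
}

fn main() {
    let (tx, rx) = channel();
    let exit = Arc::new(AtomicBool::new(false));
    let worker = spawn_worker(rx, exit.clone());
    tx.send(2).unwrap();
    tx.send(3).unwrap();
    exit.store(true, Ordering::Relaxed);
    println!("total = {}", worker.join().unwrap());
}
```

Because the flag is only consulted after a timeout, shutdown can lag by up to one timeout period. With the one-second timer used in `process`, the same would be expected of the server thread.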

126

src/accountant_stub.rs

Normal file

@ -0,0 +1,126 @@

//! The `accountant_stub` module is a client-side object that interfaces with a server-side Accountant
//! object via the network interface exposed by AccountantSkel. Client code should use
//! this object instead of writing messages to the network directly. The binary
//! encoding of its messages is unstable and may change in future releases.

use accountant_skel::{Request, Response};
use bincode::{deserialize, serialize};
use hash::Hash;
use signature::{KeyPair, PublicKey, Signature};
use std::io;
use std::net::UdpSocket;
use transaction::Transaction;

pub struct AccountantStub {
    pub addr: String,
    pub socket: UdpSocket,
}

impl AccountantStub {
    /// Create a new AccountantStub that will interface with AccountantSkel
    /// over `socket`. To receive responses, the caller must bind `socket`
    /// to a public address before invoking AccountantStub methods.
    pub fn new(addr: &str, socket: UdpSocket) -> Self {
        AccountantStub {
            addr: addr.to_string(),
            socket,
        }
    }

    /// Send a signed Transaction to the server for processing. This method
    /// does not wait for a response.
    pub fn transfer_signed(&self, tr: Transaction) -> io::Result<usize> {
        let req = Request::Transaction(tr);
        let data = serialize(&req).unwrap();
        self.socket.send_to(&data, &self.addr)
    }

    /// Creates, signs, and processes a Transaction. Useful for writing unit-tests.
    pub fn transfer(
        &self,
        n: i64,
        keypair: &KeyPair,
        to: PublicKey,
        last_id: &Hash,
    ) -> io::Result<Signature> {
        let tr = Transaction::new(keypair, to, n, *last_id);
        let sig = tr.sig;
        self.transfer_signed(tr).map(|_| sig)
    }

    /// Request the balance of the user holding `pubkey`. This method blocks
    /// until the server sends a response. If the response packet is dropped
    /// by the network, this method will hang indefinitely.
    pub fn get_balance(&self, pubkey: &PublicKey) -> io::Result<Option<i64>> {
        let req = Request::GetBalance { key: *pubkey };
        let data = serialize(&req).expect("serialize GetBalance");
        self.socket.send_to(&data, &self.addr)?;
        let mut buf = vec![0u8; 1024];
        self.socket.recv_from(&mut buf)?;
        let resp = deserialize(&buf).expect("deserialize balance");
        if let Response::Balance { key, val } = resp {
            assert_eq!(key, *pubkey);
            return Ok(val);
        }
        Ok(None)
    }

    /// Request the first or last Entry ID from the server.
    fn get_id(&self, is_last: bool) -> io::Result<Hash> {
        let req = Request::GetId { is_last };
        let data = serialize(&req).expect("serialize GetId");
        self.socket.send_to(&data, &self.addr)?;
        let mut buf = vec![0u8; 1024];
        self.socket.recv_from(&mut buf)?;
        let resp = deserialize(&buf).expect("deserialize Id");
        if let Response::Id { id, .. } = resp {
            return Ok(id);
        }
        Ok(Default::default())
    }

    /// Request the last Entry ID from the server. This method blocks
    /// until the server sends a response. At the time of this writing,
    /// it also has the side-effect of causing the server to log any
    /// entries that have been published by the Historian.
    pub fn get_last_id(&self) -> io::Result<Hash> {
        self.get_id(true)
    }
}

#[cfg(test)]
mod tests {
    use super::*;
    use accountant::Accountant;
    use accountant_skel::AccountantSkel;
    use mint::Mint;
    use signature::{KeyPair, KeyPairUtil};
    use std::io::sink;
    use std::sync::atomic::{AtomicBool, Ordering};
    use std::sync::{Arc, Mutex};
    use std::thread::sleep;
    use std::time::Duration;

    // TODO: Figure out why this test sometimes hangs on TravisCI.
    #[test]
    fn test_accountant_stub() {
        let addr = "127.0.0.1:9000";
        let send_addr = "127.0.0.1:9001";
        let alice = Mint::new(10_000);
        let acc = Accountant::new(&alice, Some(30));
        let bob_pubkey = KeyPair::new().pubkey();
        let exit = Arc::new(AtomicBool::new(false));
        let acc = Arc::new(Mutex::new(AccountantSkel::new(acc, sink())));
        let _threads = AccountantSkel::serve(acc, addr, exit.clone()).unwrap();
        sleep(Duration::from_millis(300));

        let socket = UdpSocket::bind(send_addr).unwrap();

        let acc = AccountantStub::new(addr, socket);
        let last_id = acc.get_last_id().unwrap();
        let _sig = acc.transfer(500, &alice.keypair(), bob_pubkey, &last_id)
            .unwrap();
        assert_eq!(acc.get_balance(&bob_pubkey).unwrap().unwrap(), 500);
        exit.store(true, Ordering::Relaxed);
    }
}
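The doc comment on `get_balance` notes that a dropped response packet makes the call hang indefinitely, and the test carries a TODO about hangs on TravisCI. One possible mitigation, which is not part of this diff, is to set a read timeout on the socket with the standard library's `UdpSocket::set_read_timeout`. The sketch below shows the idea in isolation; `recv_with_timeout` is a hypothetical helper, and whether a missing response actually causes the CI hang is an assumption.

```rust
use std::io;
use std::net::UdpSocket;
use std::time::Duration;

/// Hypothetical helper: wait at most `timeout` for one datagram.
fn recv_with_timeout(socket: &UdpSocket, timeout: Duration) -> io::Result<Vec<u8>> {
    // `set_read_timeout` rejects a zero duration, so `timeout` must be positive.
    socket.set_read_timeout(Some(timeout))?;
    let mut buf = vec![0u8; 1024];
    let (len, _from) = socket.recv_from(&mut buf)?;
    // Keep only the bytes that were actually received.
    buf.truncate(len);
    Ok(buf)
}

fn main() -> io::Result<()> {
    // Port 0 asks the OS for any free port, which avoids clashes between runs.
    let receiver = UdpSocket::bind("127.0.0.1:0")?;
    let sender = UdpSocket::bind("127.0.0.1:0")?;
    sender.send_to(b"ping", receiver.local_addr()?)?;
    let data = recv_with_timeout(&receiver, Duration::from_millis(500))?;
    println!("received {} bytes", data.len());

    // Nothing else was sent, so this call gives up instead of hanging.
    let second = recv_with_timeout(&receiver, Duration::from_millis(50));
    println!("second read failed: {}", second.is_err());
    Ok(())
}
```

A caller could then retry the request or surface the error, rather than blocking the test run.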

73

src/bin/client-demo.rs

Normal file

@ -0,0 +1,73 @@

extern crate rayon;
extern crate serde_json;
extern crate solana;

use solana::accountant_stub::AccountantStub;
use solana::mint::Mint;
use solana::signature::{KeyPair, KeyPairUtil};
use solana::transaction::Transaction;
use std::io::stdin;
use std::net::UdpSocket;
use std::time::{Duration, Instant};
use std::thread::sleep;
use rayon::prelude::*;

fn main() {
    let addr = "127.0.0.1:8000";
    let send_addr = "127.0.0.1:8001";

    let mint: Mint = serde_json::from_reader(stdin()).unwrap();
    let mint_keypair = mint.keypair();
    let mint_pubkey = mint.pubkey();

    let socket = UdpSocket::bind(send_addr).unwrap();
    let acc = AccountantStub::new(addr, socket);
    let last_id = acc.get_last_id().unwrap();

    let mint_balance = acc.get_balance(&mint_pubkey).unwrap().unwrap();
    println!("Mint's Initial Balance {}", mint_balance);

    println!("Signing transactions...");
    let txs = 100_000;
    let now = Instant::now();
    let transactions: Vec<_> = (0..txs)
        .into_par_iter()
        .map(|_| {
            let rando_pubkey = KeyPair::new().pubkey();
            Transaction::new(&mint_keypair, rando_pubkey, 1, last_id)
        })
        .collect();
    let duration = now.elapsed();
    let ns = duration.as_secs() * 1_000_000_000 + u64::from(duration.subsec_nanos());
    let bsps = txs as f64 / ns as f64;
    let nsps = ns as f64 / txs as f64;
    println!(
        "Done. {} thousand signatures per second, {}us per signature",
        bsps * 1_000_000_f64,
        nsps / 1_000_f64
    );

    println!("Transferring 1 unit {} times...", txs);
    let now = Instant::now();
    let mut _sig = Default::default();
    for tr in transactions {
        _sig = tr.sig;
        acc.transfer_signed(tr).unwrap();
    }
    println!("Waiting for last transaction to be confirmed...",);
    let mut val = mint_balance;
    let mut prev = 0;
    while val != prev {
        sleep(Duration::from_millis(20));
        prev = val;
        val = acc.get_balance(&mint_pubkey).unwrap().unwrap();
    }
    println!("Mint's Final Balance {}", val);
    let txs = mint_balance - val;
    println!("Successful transactions {}", txs);

    let duration = now.elapsed();
    let ns = duration.as_secs() * 1_000_000_000 + u64::from(duration.subsec_nanos());
    let tps = (txs * 1_000_000_000) as f64 / ns as f64;
    println!("Done. {} tps!", tps);
}
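The client demo converts a `Duration` to nanoseconds by hand and derives throughput from it twice. The sketch below factors that arithmetic into two small functions so the unit conversions can be checked in isolation. `as_nanos_u64` and `per_second` are hypothetical helper names, not part of the crate.

```rust
use std::time::Duration;

/// Total nanoseconds in `d`, computed the same way as in the demo.
fn as_nanos_u64(d: Duration) -> u64 {
    d.as_secs() * 1_000_000_000 + u64::from(d.subsec_nanos())
}

/// Hypothetical helper: events per second for `count` events over `d`.
/// The count is converted to f64 before scaling, so the multiplication
/// cannot overflow an integer type.
fn per_second(count: u64, d: Duration) -> f64 {
    count as f64 * 1_000_000_000_f64 / as_nanos_u64(d) as f64
}

fn main() {
    let d = Duration::from_millis(2_500);
    assert_eq!(as_nanos_u64(d), 2_500_000_000);
    // 100,000 transactions in 2.5 seconds is 40,000 per second.
    assert_eq!(per_second(100_000, d), 40_000.0);
    println!("{} tps", per_second(100_000, d));
}
```

The demo's `(txs * 1_000_000_000) as f64` does the multiplication in integer arithmetic first. That is fine for 100,000 transactions, but it would overflow for very large counts, which is why the sketch converts to `f64` first.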

@ -1,27 +0,0 @@

extern crate silk;

use silk::historian::Historian;
use silk::log::{verify_slice, Entry, Event, Sha256Hash};
use std::{thread, time};
use std::sync::mpsc::SendError;

fn create_log(hist: &Historian) -> Result<(), SendError<Event>> {
    hist.sender.send(Event::Tick)?;
    thread::sleep(time::Duration::new(0, 100_000));
    hist.sender.send(Event::UserDataKey(0xdeadbeef))?;
    thread::sleep(time::Duration::new(0, 100_000));
    hist.sender.send(Event::Tick)?;
    Ok(())
}

fn main() {
    let seed = Sha256Hash::default();
    let hist = Historian::new(&seed);
    create_log(&hist).expect("send error");
    drop(hist.sender);
    let entries: Vec<Entry> = hist.receiver.iter().collect();
    for entry in &entries {
        println!("{:?}", entry);
    }
    assert!(verify_slice(&entries, &seed));
}

30

src/bin/genesis-demo.rs

Normal file

@ -0,0 +1,30 @@

extern crate serde_json;
extern crate solana;

use solana::entry::create_entry;
use solana::event::Event;
use solana::hash::Hash;
use solana::mint::Mint;
use solana::signature::{KeyPair, KeyPairUtil, PublicKey};
use solana::transaction::Transaction;
use std::io::stdin;

fn transfer(from: &KeyPair, (to, tokens): (PublicKey, i64), last_id: Hash) -> Event {
    Event::Transaction(Transaction::new(from, to, tokens, last_id))
}

fn main() {
    let mint: Mint = serde_json::from_reader(stdin()).unwrap();
    let mut entries = mint.create_entries();

    let from = mint.keypair();
    let seed = mint.seed();
    let alice = (KeyPair::new().pubkey(), 200);
    let bob = (KeyPair::new().pubkey(), 100);
    let events = vec![transfer(&from, alice, seed), transfer(&from, bob, seed)];
    entries.push(create_entry(&seed, 0, events));

    for entry in entries {
        println!("{}", serde_json::to_string(&entry).unwrap());
    }
}

14

src/bin/genesis.rs

Normal file

@ -0,0 +1,14 @@

//! A command-line executable for generating the chain's genesis block.

extern crate serde_json;
extern crate solana;

use solana::mint::Mint;
use std::io::stdin;

fn main() {
    let mint: Mint = serde_json::from_reader(stdin()).unwrap();
    for x in mint.create_entries() {
        println!("{}", serde_json::to_string(&x).unwrap());
    }
}

37

src/bin/historian-demo.rs

Normal file

@ -0,0 +1,37 @@

extern crate solana;

use solana::entry::Entry;
use solana::event::Event;
use solana::hash::Hash;
use solana::historian::Historian;
use solana::ledger::verify_slice;
use solana::recorder::Signal;
use solana::signature::{KeyPair, KeyPairUtil};
use solana::transaction::Transaction;
use std::sync::mpsc::SendError;
use std::thread::sleep;
use std::time::Duration;

fn create_ledger(hist: &Historian, seed: &Hash) -> Result<(), SendError<Signal>> {
    sleep(Duration::from_millis(15));
    let keypair = KeyPair::new();
    let tr = Transaction::new(&keypair, keypair.pubkey(), 42, *seed);
    let signal0 = Signal::Event(Event::Transaction(tr));
    hist.sender.send(signal0)?;
    sleep(Duration::from_millis(10));
    Ok(())
}

fn main() {
    let seed = Hash::default();
    let hist = Historian::new(&seed, Some(10));
    create_ledger(&hist, &seed).expect("send error");
    drop(hist.sender);
    let entries: Vec<Entry> = hist.receiver.iter().collect();
    for entry in &entries {
        println!("{:?}", entry);
    }
    // Proof-of-History: Verify the historian learned about the events
    // in the same order they appear in the vector.
    assert!(verify_slice(&entries, &seed));
}
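The closing comment of the historian demo says that `verify_slice` confirms the entries appear in the order the historian learned about them. The sketch below illustrates the general hash-chain idea behind such a check. It is not the crate's code: `ToyEntry`, `next_id`, `build_chain`, and `verify_chain` are hypothetical, and std's `DefaultHasher` stands in for a cryptographic hash only to keep the example dependency-free. It is not suitable for real proofs.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

/// Hypothetical entry: an id chained from the previous id plus a payload.
#[derive(Debug, Clone, PartialEq)]
struct ToyEntry {
    id: u64,
    payload: String,
}

/// Stand-in for a cryptographic hash: mixes the previous id with the payload.
fn next_id(prev: u64, payload: &str) -> u64 {
    let mut hasher = DefaultHasher::new();
    prev.hash(&mut hasher);
    payload.hash(&mut hasher);
    hasher.finish()
}

/// Build a chain in which every id depends on everything before it.
fn build_chain(seed: u64, payloads: &[&str]) -> Vec<ToyEntry> {
    let mut prev = seed;
    payloads
        .iter()
        .map(|p| {
            prev = next_id(prev, p);
            ToyEntry { id: prev, payload: p.to_string() }
        })
        .collect()
}

/// Recompute each id from its predecessor and compare with the stored id.
fn verify_chain(entries: &[ToyEntry], seed: u64) -> bool {
    let mut prev = seed;
    entries.iter().all(|e| {
        let ok = e.id == next_id(prev, &e.payload);
        prev = e.id;
        ok
    })
}

fn main() {
    let mut entries = build_chain(0, &["tick", "transfer", "tick"]);
    assert!(verify_chain(&entries, 0));
    entries.swap(0, 1); // reordering breaks the chain
    assert!(!verify_chain(&entries, 0));
}
```

Because each id folds in its predecessor, swapping or dropping an entry changes every later expected id, which is what lets a verifier detect reordering.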

15

src/bin/mint.rs

Normal file

@ -0,0 +1,15 @@

extern crate serde_json;
extern crate solana;

use solana::mint::Mint;
use std::io;

fn main() {
    let mut input_text = String::new();
    io::stdin().read_line(&mut input_text).unwrap();
    let trimmed = input_text.trim();
    let tokens = trimmed.parse::<i64>().unwrap();

    let mint = Mint::new(tokens);
    println!("{}", serde_json::to_string(&mint).unwrap());
}

25

src/bin/testnode.rs

Normal file

@ -0,0 +1,25 @@

extern crate serde_json;
extern crate solana;

use solana::accountant::Accountant;
use solana::accountant_skel::AccountantSkel;
use std::io::{self, stdout, BufRead};
use std::sync::atomic::AtomicBool;
use std::sync::{Arc, Mutex};

fn main() {
    let addr = "127.0.0.1:8000";
    let stdin = io::stdin();
    let entries = stdin
        .lock()
        .lines()
        .map(|line| serde_json::from_str(&line.unwrap()).unwrap());
    let acc = Accountant::new_from_entries(entries, Some(1000));
    let exit = Arc::new(AtomicBool::new(false));
    let skel = Arc::new(Mutex::new(AccountantSkel::new(acc, stdout())));
    eprintln!("Listening on {}", addr);
    let threads = AccountantSkel::serve(skel, addr, exit.clone()).unwrap();
    for t in threads {
        t.join().expect("join");
    }
}

140

src/entry.rs

Normal file